Hadoop2.x源码-编译剖析

1.概述

最近,有小伙伴涉及到源码编译。然而,在编译期间也是遇到各种坑,在求助于搜索引擎,技术博客,也是难以解决自身所遇到的问题。笔者在被询问多次的情况下,今天打算为大家来写一篇文章来剖析下编译的细节,以及遇到编译问题后,应该如何去解决这样类似的问题。因为,编译的问题,对于后期业务拓展,二次开发,编译打包是一个基本需要面临的问题。

2.编译准备

在编译源码之前,我们需要准备编译所需要的基本环境。下面给大家列举本次编译的基础环境,如下所示:

- 硬件环境

| 操作系统 | CentOS6.6 |

| CPU | I7 |

| 内存 | 16G |

| 硬盘 | 闪存 |

| 核数 | 4核 |

- 软件环境

| JDK | 1.7 |

| Maven | 3.2.3 |

| ANT | 1.9.6 |

| Protobuf | 2.5.0 |

在准备好这些环境之后,我们需要去将这些环境安装到操作系统当中。步骤如下:

2.1 基础环境安装

关于JDK,Maven,ANT的安装较为简单,这里就不多做赘述了,将其对应的压缩包解压,然后在/etc/profile文件当中添加对应的路径到PATH中即可。下面笔者给大家介绍安装Protobuf,其安装需要对Protobuf进行编译,故我们需要编译的依赖环境gcc、gcc-c++、cmake、openssl-devel、ncurses-devel,安装命令如下所示:

yum -y install gcc yum -y install gcc-c++ yum -y install cmake yum -y install openssl-devel yum -y install ncurses-devel

验证GCC是否安装成功,命令如下所示:

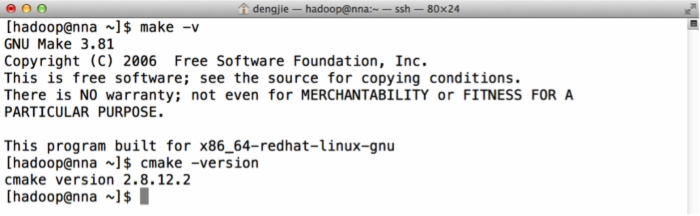

验证Make核CMake是否安装成功,命令如下所示:

在准备完这些环境之后,开始去编译Protobuf,编译命令如下所示:

[hadoop@nna ~]$ cd protobuf-2.5.0/ [hadoop@nna protobuf-2.5.0]$ ./configure --prefix=/usr/local/protoc [hadoop@nna protobuf-2.5.0]$ make [hadoop@nna protobuf-2.5.0]$ make install

PS:这里安装的时候有可能提示全县不足,若出现该类问题,使用sudo进行安装。

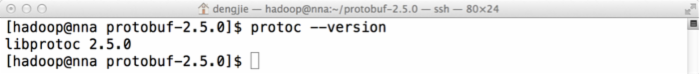

验证Protobuf安装是否成功,命令如下所示:

下面,我们开始进入编译环境,在编译的过程当中会遇到很多问题,大家遇到问题的时候,要认真的去分析这些问题产生的原因,这里先给大家列举一些可以避免的问题,在使用Maven进行编译的时候,若使用默认的JVM参数,在编译到hadoop-hdfs模块的时候,会出现溢出现象。异常信息如下所示:

java.lang.OutOfMemoryError: Java heap space

这里,我们在编译Hadoop源码之前,可以先去环境变量中设置其参数即可,内容修改如下:

export MAVEN_OPTS="-Xms256m -Xmx512m"

接下来,我们进入到Hadoop的源码,这里笔者使用的是Hadoop2.6的源码进行编译,更高版本的源码进行编译,估计会有些许差异,编译命令如下所示:

[hadoop@nna tar]$ cd hadoop-2.6.0-src [hadoop@nna tar]$ mvn package -DskipTests -Pdist,native

PS:这里笔者是直接将其编译为文件夹,若需要编译成tar包,可以在后面加上tar的参数,命令为 mvn package -DskipTests -Pdist,native -Dtar

笔者在编译过程当中,出现过在编译KMS模块时,下载tomcat不完全的问题,Hadoop采用的tomcat是apache-tomcat-6.0.41.tar.gz,若是在此模块下出现异常,可以使用一下命令查看tomcat的文件大小,该文件正常大小为6.9M左右。查看命令如下所示:

[hadoop@nna downloads]$ du -sh *

若出现只有几K的tomcat安装包,表示tomcat下载失败,我们将其手动下载到/home/hadoop/tar/hadoop-2.6.0-src/hadoop-common-project/hadoop-kms/downloads目录下即可。在编译成功后,会出现以下信息:

[INFO] ------------------------------------------------------------------------ [INFO] Reactor Summary: [INFO] [INFO] Apache Hadoop Main ................................. SUCCESS [ 1.162 s] [INFO] Apache Hadoop Project POM .......................... SUCCESS [ 0.690 s] [INFO] Apache Hadoop Annotations .......................... SUCCESS [ 1.589 s] [INFO] Apache Hadoop Assemblies ........................... SUCCESS [ 0.164 s] [INFO] Apache Hadoop Project Dist POM ..................... SUCCESS [ 1.064 s] [INFO] Apache Hadoop Maven Plugins ........................ SUCCESS [ 2.260 s] [INFO] Apache Hadoop MiniKDC .............................. SUCCESS [ 1.492 s] [INFO] Apache Hadoop Auth ................................. SUCCESS [ 2.233 s] [INFO] Apache Hadoop Auth Examples ........................ SUCCESS [ 2.102 s] [INFO] Apache Hadoop Common ............................... SUCCESS [01:00 min] [INFO] Apache Hadoop NFS .................................. SUCCESS [ 3.891 s] [INFO] Apache Hadoop KMS .................................. SUCCESS [ 5.872 s] [INFO] Apache Hadoop Common Project ....................... SUCCESS [ 0.019 s] [INFO] Apache Hadoop HDFS ................................. SUCCESS [04:04 min] [INFO] Apache Hadoop HttpFS ............................... SUCCESS [01:47 min] [INFO] Apache Hadoop HDFS BookKeeper Journal .............. SUCCESS [04:58 min] [INFO] Apache Hadoop HDFS-NFS ............................. SUCCESS [ 2.492 s] [INFO] Apache Hadoop HDFS Project ......................... SUCCESS [ 0.020 s] [INFO] hadoop-yarn ........................................ SUCCESS [ 0.018 s] [INFO] hadoop-yarn-api .................................... SUCCESS [01:05 min] [INFO] hadoop-yarn-common ................................. SUCCESS [01:00 min] [INFO] hadoop-yarn-server ................................. SUCCESS [ 0.029 s] [INFO] hadoop-yarn-server-common .......................... SUCCESS [01:03 min] [INFO] hadoop-yarn-server-nodemanager ..................... SUCCESS [01:10 min] [INFO] hadoop-yarn-server-web-proxy ....................... SUCCESS [ 1.810 s] [INFO] hadoop-yarn-server-applicationhistoryservice ....... SUCCESS [ 4.041 s] [INFO] hadoop-yarn-server-resourcemanager ................. SUCCESS [ 11.739 s] [INFO] hadoop-yarn-server-tests ........................... SUCCESS [ 3.332 s] [INFO] hadoop-yarn-client ................................. SUCCESS [ 4.762 s] [INFO] hadoop-yarn-applications ........................... SUCCESS [ 0.017 s] [INFO] hadoop-yarn-applications-distributedshell .......... SUCCESS [ 1.586 s] [INFO] hadoop-yarn-applications-unmanaged-am-launcher ..... SUCCESS [ 1.233 s] [INFO] hadoop-yarn-site ................................... SUCCESS [ 0.018 s] [INFO] hadoop-yarn-registry ............................... SUCCESS [ 3.270 s] [INFO] hadoop-yarn-project ................................ SUCCESS [ 2.164 s] [INFO] hadoop-mapreduce-client ............................ SUCCESS [ 0.032 s] [INFO] hadoop-mapreduce-client-core ....................... SUCCESS [ 13.047 s] [INFO] hadoop-mapreduce-client-common ..................... SUCCESS [ 10.890 s] [INFO] hadoop-mapreduce-client-shuffle .................... SUCCESS [ 2.534 s] [INFO] hadoop-mapreduce-client-app ........................ SUCCESS [ 6.429 s] [INFO] hadoop-mapreduce-client-hs ......................... SUCCESS [ 4.866 s] [INFO] hadoop-mapreduce-client-jobclient .................. SUCCESS [02:04 min] [INFO] hadoop-mapreduce-client-hs-plugins ................. SUCCESS [ 1.183 s] [INFO] Apache Hadoop MapReduce Examples ................... SUCCESS [ 3.655 s] [INFO] hadoop-mapreduce ................................... SUCCESS [ 1.775 s] [INFO] Apache Hadoop MapReduce Streaming .................. SUCCESS [ 11.478 s] [INFO] Apache Hadoop Distributed Copy ..................... SUCCESS [ 15.399 s] [INFO] Apache Hadoop Archives ............................. SUCCESS [ 1.359 s] [INFO] Apache Hadoop Rumen ................................ SUCCESS [ 3.736 s] [INFO] Apache Hadoop Gridmix .............................. SUCCESS [ 2.822 s] [INFO] Apache Hadoop Data Join ............................ SUCCESS [ 1.791 s] [INFO] Apache Hadoop Ant Tasks ............................ SUCCESS [ 1.350 s] [INFO] Apache Hadoop Extras ............................... SUCCESS [ 1.858 s] [INFO] Apache Hadoop Pipes ................................ SUCCESS [ 5.805 s] [INFO] Apache Hadoop OpenStack support .................... SUCCESS [ 3.061 s] [INFO] Apache Hadoop Amazon Web Services support .......... SUCCESS [07:14 min] [INFO] Apache Hadoop Client ............................... SUCCESS [ 2.986 s] [INFO] Apache Hadoop Mini-Cluster ......................... SUCCESS [ 0.053 s] [INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 2.917 s] [INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 5.702 s] [INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.015 s] [INFO] Apache Hadoop Distribution ......................... SUCCESS [ 8.587 s] [INFO] ------------------------------------------------------------------------ [INFO] BUILD SUCCESS [INFO] ------------------------------------------------------------------------ [INFO] Total time: 28:25 min [INFO] Finished at: 2015-10-22T15:12:10+08:00 [INFO] Final Memory: 89M/451M [INFO] ------------------------------------------------------------------------

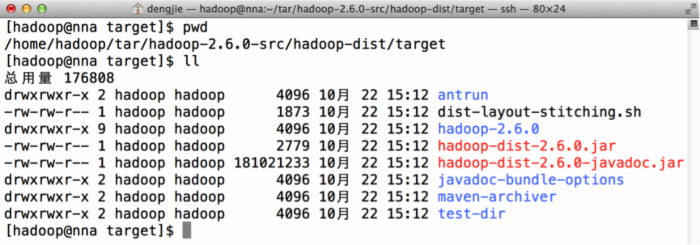

在编译完成之后,会在Hadoop源码的dist目录下生成编译好的文件,如下图所示:

图中hadoop-2.6.0即表示编译好的文件。

3.总结

在编译的过程当中,会出现各种各样的问题,有些问题可以借助搜索引擎去帮助我们解决,有些问题搜索引擎却难以直接的给出解决方案,这时,我们需要冷静的分析编译错误信息,大胆的去猜测,然后去求证我们的想法。简而言之,解决问题的方法是有很多的。当然,大家也可以在把遇到的编译问题,贴在评论下方,供后来者参考或借鉴。

4.结束语

这篇文章就和大家分享到这里,如果大家在研究和学习的过程中有什么疑问,可以加群进行讨论或发送邮件给我,我会尽我所能为您解答,与君共勉!

- 本文标签: App Hadoop build tar 文章 UI apache 开发 pom core HDFS Apache Hadoop 源码 example ACE 安装 java 操作系统 maven src 搜索引擎 CTO 编译 Amazon ip 博客 map 目录 参数 node dist cat OpenStack ssl 总结 shell http apr 软件 tab client nfs API web final tomcat ask centos

- 版权声明: 本文为互联网转载文章,出处已在文章中说明(部分除外)。如果侵权,请联系本站长删除,谢谢。

- 本文海报: 生成海报一 生成海报二

![[HBLOG]公众号](https://www.liuhaihua.cn/img/qrcode_gzh.jpg)