Installing Oracle 12cR1 RAC on VMware Workstation (Part 1): OS Environment Configuration


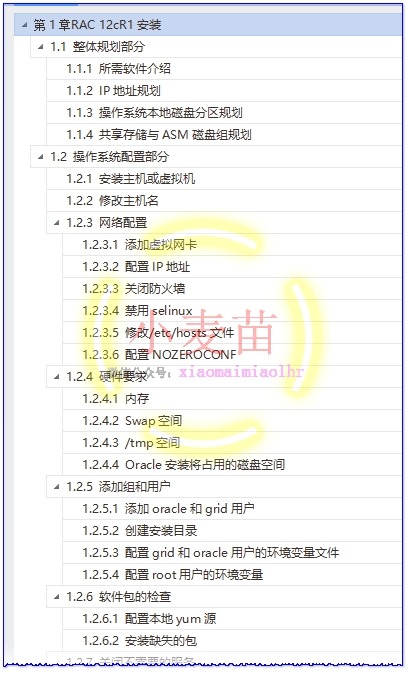

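1.1 Overall Planning

1.1.1 Required Software

Oracle RAC does not support heterogeneous platforms. A cluster can contain machines of different speeds and sizes, but all nodes must run the same operating system, and Oracle RAC does not support mixing machines with different chip architectures.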

| No. | Type | Content |
|---|---|---|
| 1 | Database | p17694377_121020_Linux-x86-64_1of8.zip, p17694377_121020_Linux-x86-64_2of8.zip |
| 2 | Cluster software (Grid Infrastructure) | p17694377_121020_Linux-x86-64_3of8.zip, p17694377_121020_Linux-x86-64_4of8.zip |
| 3 | Operating system | RHEL 6.5, kernel 2.6.32-431.el6.x86_64; VM hardware compatibility: Workstation 9.0 |
| 4 | Virtualization software | VMware Workstation 12 Pro 12.5.2 build-4638234 |
| 5 | Xmanager Enterprise 4 | Xmanager Enterprise 4, used to open graphical interfaces |
| 6 | rlwrap-0.36 | rlwrap-0.36, provides command history for sqlplus, rman and similar tools |
| 7 | SecureCRTPortable.exe | Version 7.0.0 (build 326), includes SecureCRT and SecureFX, used for SSH connections |

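Note: the author (xiaomaimiao) has uploaded these packages to Tencent Weiyun (http://blog.itpub.net/26736162/viewspace-1624453/), where readers can download them. The author has also uploaded a fully installed virtual machine, with rlwrap already integrated, to cloud storage.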

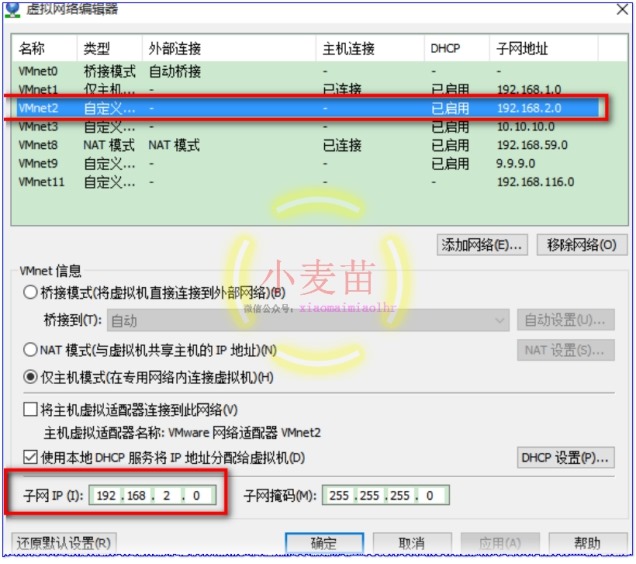

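1.1.2 IP Address Planning

Starting with Oracle 11g, this two-node cluster needs 7 IP addresses and 2 NICs per node. The public, VIP and SCAN addresses share one subnet, and the private addresses use another. Host names must not contain underscores; for example, RAC_01 is not allowed. Run `ifconfig -a` to check that the network device names match on both nodes. Before installation, the 4 public and private IPs should respond to ping and the other 3 (the two VIPs and the SCAN IP) should not; this is the expected state.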

| Node / host name | Interface name | Address type | IP address | Registered in |
|---|---|---|---|---|
| raclhr-12cR1-N1 | raclhr-12cR1-N1 | Public | 192.168.59.160 | /etc/hosts |
| raclhr-12cR1-N1 | raclhr-12cR1-N1-vip | Virtual | 192.168.59.162 | /etc/hosts |
| raclhr-12cR1-N1 | raclhr-12cR1-N1-priv | Private | 192.168.2.100 | /etc/hosts |
| raclhr-12cR1-N2 | raclhr-12cR1-N2 | Public | 192.168.59.161 | /etc/hosts |
| raclhr-12cR1-N2 | raclhr-12cR1-N2-vip | Virtual | 192.168.59.163 | /etc/hosts |
| raclhr-12cR1-N2 | raclhr-12cR1-N2-priv | Private | 192.168.2.101 | /etc/hosts |
| | raclhr-12cR1-scan | SCAN | 192.168.59.164 | /etc/hosts |
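The naming and subnet rules above can be checked before installation with a small Bash sketch. It assumes every network is a /24 (netmask 255.255.255.0, as in this plan) and checks only the underscore rule for host names. The function names valid_rac_hostname, subnet24 and same_subnet24 are invented for illustration.

```shell
#!/bin/bash
# Sketch: sanity-check host names and subnets of the RAC IP plan.
# Assumption: every network is a /24 (NETMASK=255.255.255.0), as in this article.

# valid_rac_hostname NAME: succeed if NAME contains no underscore.
valid_rac_hostname() {
    case "$1" in
        *_*) return 1 ;;
        *)   return 0 ;;
    esac
}

# subnet24 IP: print the /24 network part, e.g. 192.168.59.160 -> 192.168.59
subnet24() {
    echo "${1%.*}"
}

# same_subnet24 IP...: succeed if all addresses share the same /24 network.
same_subnet24() {
    local first ip
    first=$(subnet24 "$1")
    shift
    for ip in "$@"; do
        [ "$(subnet24 "$ip")" = "$first" ] || return 1
    done
    return 0
}

# Values from the IP plan in 1.1.2
for h in raclhr-12cR1-N1 raclhr-12cR1-N2 raclhr-12cR1-scan; do
    valid_rac_hostname "$h" || echo "invalid host name: $h"
done
if same_subnet24 192.168.59.160 192.168.59.161 192.168.59.162 192.168.59.163 192.168.59.164; then
    echo "public, VIP and SCAN addresses share one subnet"
fi
if ! same_subnet24 192.168.59.160 192.168.2.100; then
    echo "private network is on a separate subnet"
fi
```

With the plan above, both messages are printed and no host name is rejected.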

1.1.3 Local Disk Partition Planning for the OS

Every partition except /boot uses a logical volume, which makes the file systems easier to extend.

| No. | Partition | Size | Device / logical volume | Purpose |
|---|---|---|---|---|
| 1 | /boot | 200MB | /dev/sda1 | Boot partition |
| 2 | / | 10G | /dev/mapper/vg_rootlhr-Vol00 | Root partition |
| 3 | swap | 2G | /dev/mapper/vg_rootlhr-Vol02 | Swap |
| 4 | /tmp | 3G | /dev/mapper/vg_rootlhr-Vol01 | Temporary space |
| 5 | /home | 3G | /dev/mapper/vg_rootlhr-Vol03 | Home directories of all users |
| 6 | /u01 | 20G | /dev/mapper/vg_orasoft-lv_orasoft_u01 | Installation directory for oracle and grid |

```
[root@raclhr-12cR1-N1 ~]# fdisk -l | grep dev
Disk /dev/sda: 21.5 GB, 21474836480 bytes
/dev/sda1 * 1 26 204800 83 Linux
/dev/sda2 26 1332 10485760 8e Linux LVM
/dev/sda3 1332 2611 10279936 8e Linux LVM
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
/dev/sdb1 1 1306 10485760 8e Linux LVM
/dev/sdb2 1306 2611 10485760 8e Linux LVM
/dev/sdb3 2611 3917 10485760 8e Linux LVM
/dev/sdb4 3917 13055 73399296 5 Extended
/dev/sdb5 3917 5222 10485760 8e Linux LVM
/dev/sdb6 5223 6528 10485760 8e Linux LVM
/dev/sdb7 6528 7834 10485760 8e Linux LVM
/dev/sdb8 7834 9139 10485760 8e Linux LVM
/dev/sdb9 9139 10445 10485760 8e Linux LVM
/dev/sdb10 10445 11750 10485760 8e Linux LVM
/dev/sdb11 11750 13055 10477568 8e Linux LVM
```

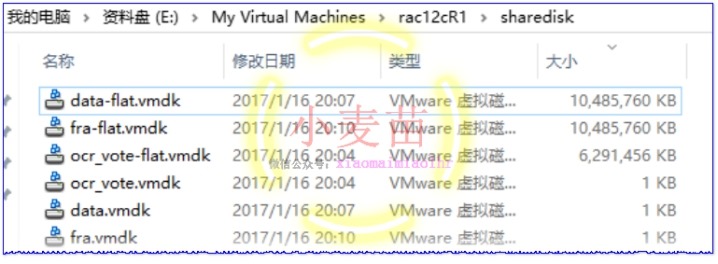

1.1.4 Shared Storage and ASM Disk Group Planning

| No. | Disk | ASM disk name | Disk group | Size | Purpose |
|---|---|---|---|---|---|
| 1 | sdc1 | asm-diskc | OCR | 6G | OCR + voting disk |
| 2 | sdd1 | asm_diskd | DATA | 10G | Data |
| 3 | sde1 | asm_diske | FRA | 10G | Fast recovery area |

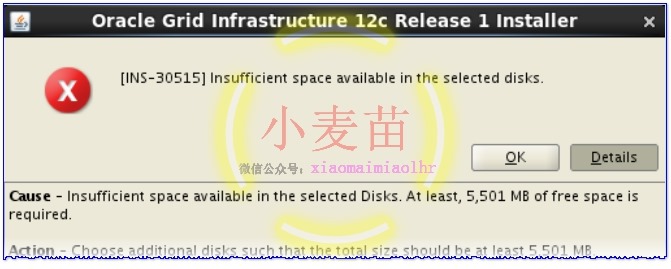

Note: in 12c R1, the OCR disk group needs at least 5501 MB of disk space.

1.2 Operating System Configuration

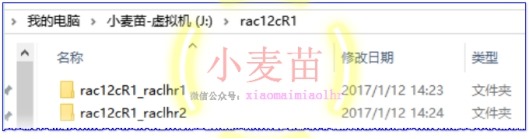

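1.2.1 Installing the Hosts or Virtual Machines

The installation steps are omitted here. Install one virtual machine, then copy it and rename the copy to create the second node.

You can also download the virtual machine environment that the author has already installed.

1.2.2 Changing the Host Names

Change the host names of the 2 nodes to raclhr-12cR1-N1 and raclhr-12cR1-N2: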

```
# Persist the host name (node 2: raclhr-12cR1-N2)
vi /etc/sysconfig/network
HOSTNAME=raclhr-12cR1-N1

# Apply it to the running system immediately
hostname raclhr-12cR1-N1
```

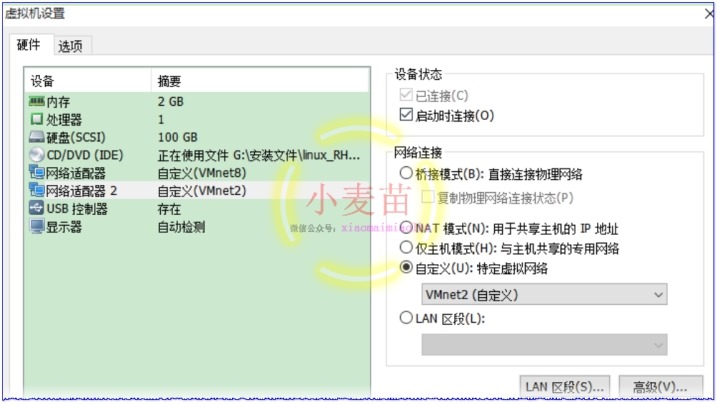

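1.2.3 Network Configuration

1.2.3.1 Adding Virtual NICs

Add 2 NICs to each virtual machine: VMnet8 for the public network and VMnet2 for the private network.

1.2.3.2 Configuring IP Addresses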

```
# Stop NetworkManager and use the traditional network service instead
chkconfig NetworkManager off
chkconfig network on
service NetworkManager stop
service network start
```

Perform the following on both nodes; on node 2, change the IP addresses accordingly.

Step 1: configure the public and private IP addresses.

Public network: vi /etc/sysconfig/network-scripts/ifcfg-eth0

```
DEVICE=eth0
IPADDR=192.168.59.160
NETMASK=255.255.255.0
NETWORK=192.168.59.0
BROADCAST=192.168.59.255
GATEWAY=192.168.59.2
ONBOOT=yes
USERCTL=no
BOOTPROTO=static
TYPE=Ethernet
IPV6INIT=no
```

Private network: vi /etc/sysconfig/network-scripts/ifcfg-eth1

```
DEVICE=eth1
IPADDR=192.168.2.100
NETMASK=255.255.255.0
NETWORK=192.168.2.0
BROADCAST=192.168.2.255
GATEWAY=192.168.2.1
ONBOOT=yes
USERCTL=no
BOOTPROTO=static
TYPE=Ethernet
IPV6INIT=no
```

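Step 2: empty /etc/udev/rules.d/70-persistent-net.rules, so that the NICs of the cloned VM, which have new MAC addresses, are named eth0 and eth1 again.

Step 3: reboot the host.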

```
[root@raclhr-12cR1-N1 ~]# ip a
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000
    link/ether 00:0c:29:d9:43:a7 brd ff:ff:ff:ff:ff:ff
    inet 192.168.59.160/24 brd 192.168.59.255 scope global eth0
    inet6 fe80::20c:29ff:fed9:43a7/64 scope link
       valid_lft forever preferred_lft forever
3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000
    link/ether 00:0c:29:d9:43:b1 brd ff:ff:ff:ff:ff:ff
    inet 192.168.2.100/24 brd 192.168.2.255 scope global eth1
    inet6 fe80::20c:29ff:fed9:43b1/64 scope link
       valid_lft forever preferred_lft forever
4: virbr0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN
    link/ether 52:54:00:68:da:bb brd ff:ff:ff:ff:ff:ff
    inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0
5: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN qlen 500
    link/ether 52:54:00:68:da:bb brd ff:ff:ff:ff:ff:ff
```

1.2.3.3 Disabling the Firewall

Run the following on both nodes:

```
service iptables stop
service ip6tables stop
chkconfig iptables off
chkconfig ip6tables off

chkconfig iptables --list
```

Related commands:

```
chkconfig iptables off            # permanent: do not start at boot
service iptables stop             # temporary: stop until the next boot
/etc/init.d/iptables status       # if rules are listed, the firewall is running
/etc/rc.d/init.d/iptables stop    # stop the firewall
LANG=en_US setup                  # menu-driven configuration tool
```

1.2.3.4 Disabling SELinux

Edit /etc/selinux/config and change SELINUX=enforcing to SELINUX=disabled:

```
[root@raclhr-12cR1-N1 ~]# more /etc/selinux/config

# This file controls the state of SELinux on the system.
# SELINUX= can take one of these three values:
#     enforcing - SELinux security policy is enforced.
#     permissive - SELinux prints warnings instead of enforcing.
#     disabled - No SELinux policy is loaded.
SELINUX=disabled
# SELINUXTYPE= can take one of these two values:
#     targeted - Targeted processes are protected,
#     mls - Multi Level Security protection.
SELINUXTYPE=targeted
[root@raclhr-12cR1-N1 ~]#
```

To turn enforcement off immediately without a reboot (this switches SELinux to permissive mode until the next boot):

```
setenforce 0
```

Check the SELinux status:

1. `/usr/sbin/sestatus -v`: if the `SELinux status` field is `enabled`, SELinux is on, for example `SELinux status: enabled`.

2. `getenforce` can also be used for the check.

```
[root@raclhr-12cR1-N1 ~]# /usr/sbin/sestatus -v
SELinux status:                 disabled
[root@raclhr-12cR1-N1 ~]# getenforce
Disabled
[root@raclhr-12cR1-N1 ~]#
```
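getenforce reports the runtime state. The setting that survives a reboot is the SELINUX= line in the config file. The Bash sketch below reads that line. selinux_config_mode is a hypothetical helper, and its optional file argument exists only so it can be tried on a copy of the file.

```shell
#!/bin/bash
# Sketch: print the persistent SELinux mode configured in a selinux config file.
# Default path: /etc/selinux/config (pass another path only for testing).
selinux_config_mode() {
    local file="${1:-/etc/selinux/config}"
    # Take the last SELINUX= line; "SELINUX=" does not match SELINUXTYPE=
    grep -E '^[[:space:]]*SELINUX=' "$file" 2>/dev/null | tail -n 1 | sed -E 's/^[[:space:]]*SELINUX=//'
}

mode=$(selinux_config_mode)
if [ "$mode" = "disabled" ]; then
    echo "SELinux is disabled in the config file"
else
    echo "SELinux config is '$mode'; set SELINUX=disabled and reboot"
fi
```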

1.2.3.5 Editing /etc/hosts

The file is identical on both nodes:

```
[root@raclhr-12cR1-N2 ~]# more /etc/hosts
# Do not remove the following line, or various programs
# that require network functionality will fail.
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

#Public IP
192.168.59.160 raclhr-12cR1-N1
192.168.59.161 raclhr-12cR1-N2

#Private IP
192.168.2.100 raclhr-12cR1-N1-priv
192.168.2.101 raclhr-12cR1-N2-priv

#Virtual IP
192.168.59.162 raclhr-12cR1-N1-vip
192.168.59.163 raclhr-12cR1-N2-vip

#Scan IP
192.168.59.164 raclhr-12cR1-scan
[root@raclhr-12cR1-N2 ~]#
```

Ping test from node 1. The public and private names respond, and the VIP and SCAN names are unreachable, which is expected before installation:

```
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1
PING raclhr-12cR1-N1 (192.168.59.160) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N1 (192.168.59.160): icmp_seq=1 ttl=64 time=0.018 ms
64 bytes from raclhr-12cR1-N1 (192.168.59.160): icmp_seq=2 ttl=64 time=0.052 ms
^C
--- raclhr-12cR1-N1 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1573ms
rtt min/avg/max/mdev = 0.018/0.035/0.052/0.017 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2
PING raclhr-12cR1-N2 (192.168.59.161) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N2 (192.168.59.161): icmp_seq=1 ttl=64 time=1.07 ms
64 bytes from raclhr-12cR1-N2 (192.168.59.161): icmp_seq=2 ttl=64 time=0.674 ms
^C
--- raclhr-12cR1-N2 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1543ms
rtt min/avg/max/mdev = 0.674/0.876/1.079/0.204 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1-priv
PING raclhr-12cR1-N1-priv (192.168.2.100) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N1-priv (192.168.2.100): icmp_seq=1 ttl=64 time=0.015 ms
64 bytes from raclhr-12cR1-N1-priv (192.168.2.100): icmp_seq=2 ttl=64 time=0.056 ms
^C
--- raclhr-12cR1-N1-priv ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1297ms
rtt min/avg/max/mdev = 0.015/0.035/0.056/0.021 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2-priv
PING raclhr-12cR1-N2-priv (192.168.2.101) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N2-priv (192.168.2.101): icmp_seq=1 ttl=64 time=1.10 ms
64 bytes from raclhr-12cR1-N2-priv (192.168.2.101): icmp_seq=2 ttl=64 time=0.364 ms
^C
--- raclhr-12cR1-N2-priv ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1421ms
rtt min/avg/max/mdev = 0.364/0.733/1.102/0.369 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1-vip
PING raclhr-12cR1-N1-vip (192.168.59.162) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=4 Destination Host Unreachable
^C
--- raclhr-12cR1-N1-vip ping statistics ---
4 packets transmitted, 0 received, +3 errors, 100% packet loss, time 3901ms
pipe 3
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2-vip
PING raclhr-12cR1-N2-vip (192.168.59.163) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=1 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
^C
--- raclhr-12cR1-N2-vip ping statistics ---
5 packets transmitted, 0 received, +3 errors, 100% packet loss, time 4026ms
pipe 3
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-scan
PING raclhr-12cR1-scan (192.168.59.164) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=4 Destination Host Unreachable
^C
--- raclhr-12cR1-scan ping statistics ---
5 packets transmitted, 0 received, +3 errors, 100% packet loss, time 4501ms
pipe 3
[root@raclhr-12cR1-N1 ~]#
```
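Before Grid Infrastructure is installed, only the public and private names should answer ping. The VIP and SCAN addresses are brought up later by the clusterware. The Bash sketch below looks names up in a hosts file and prints a reachability report. hosts_lookup and ping_report are hypothetical helpers, and ping_report assumes the Linux iputils ping (-c count, -W timeout in seconds).

```shell
#!/bin/bash
# Sketch: check /etc/hosts entries and report which RAC names answer ping.

# hosts_lookup NAME [FILE]: print the IP address NAME maps to in a hosts file.
hosts_lookup() {
    local name="$1" file="${2:-/etc/hosts}"
    # Skip comment lines; field 1 is the address, fields 2..NF are names
    awk -v n="$name" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) { print $1; exit } }' "$file"
}

# ping_report NAME...: ping each name once and print "reachable" or "unreachable".
ping_report() {
    local h
    for h in "$@"; do
        if ping -c 1 -W 1 "$h" >/dev/null 2>&1; then
            echo "$h reachable"
        else
            echo "$h unreachable"
        fi
    done
}
```

For example, `ping_report raclhr-12cR1-N1 raclhr-12cR1-N2 raclhr-12cR1-N1-priv raclhr-12cR1-N2-priv raclhr-12cR1-N1-vip raclhr-12cR1-N2-vip raclhr-12cR1-scan` should report the first four names as reachable and the last three as unreachable at this stage.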

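1.2.3.6 Configuring NOZEROCONF

Add the following to /etc/sysconfig/network (`vi /etc/sysconfig/network`). With NOZEROCONF=yes, the network scripts no longer add the 169.254.0.0/16 zeroconf route: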

```
NOZEROCONF=yes
```

1.2.4 Hardware Requirements

1.2.4.1 Memory

Check with: `grep MemTotal /proc/meminfo`

```
[root@raclhr-12cR1-N1 ~]# grep MemTotal /proc/meminfo
MemTotal:        2046592 kB
[root@raclhr-12cR1-N1 ~]#
```

1.2.4.2 Swap Space

| RAM | Swap space |
|---|---|
| 1 GB to 2 GB | 1.5 times the size of RAM |
| 2 GB to 16 GB | Equal to the size of RAM |
| More than 16 GB | 16 GB |
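The swap guideline can be computed directly from MemTotal. The Bash sketch below follows the Oracle rule: 1.5 times RAM up to 2 GB, equal to RAM up to 16 GB, and 16 GB above that. recommended_swap_kb is a hypothetical helper, and treating RAM below 1 GB like the 1 GB to 2 GB row is an assumption of this sketch.

```shell
#!/bin/bash
# Sketch: recommended swap in kB for a RAM size in kB (as reported by /proc/meminfo).
# Assumption: RAM below 1 GB is treated like the 1-2 GB row (1.5 x RAM).
recommended_swap_kb() {
    local ram_kb="$1"
    local gb=$((1024 * 1024))          # 1 GB expressed in kB
    if [ "$ram_kb" -le $((2 * gb)) ]; then
        echo $((ram_kb * 3 / 2))       # up to 2 GB: 1.5 x RAM
    elif [ "$ram_kb" -le $((16 * gb)) ]; then
        echo "$ram_kb"                 # 2-16 GB: equal to RAM
    else
        echo $((16 * gb))              # above 16 GB: 16 GB
    fi
}

if [ -r /proc/meminfo ]; then
    ram=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
    swap=$(awk '/^SwapTotal:/ {print $2}' /proc/meminfo)
    echo "MemTotal=${ram} kB SwapTotal=${swap} kB recommended=$(recommended_swap_kb "$ram") kB"
fi
```

For the 2046592 kB of MemTotal shown above, the sketch suggests 3069888 kB of swap.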

Check with: `grep SwapTotal /proc/meminfo`

```
[root@raclhr-12cR1-N1 ~]# grep SwapTotal /proc/meminfo
SwapTotal:       2097144 kB
[root@raclhr-12cR1-N1 ~]#
```

1.2.4.3 /tmp Space

A separate /tmp file system is recommended. Here it is a logical volume, so it is easy to extend.

```
[root@raclhr-12cR1-N1 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.6G 52% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
.host:/ 331G 229G 102G 70% /mnt/hgfs
```

1.2.4.4 Disk Space Used by the Oracle Installation

Local disk: /u01 is the installation location for the following software:

● Oracle Grid Infrastructure software: 6.8GB

● Oracle Enterprise Edition software: 5.3GB

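```
# Create a volume group from three partitions of the second local disk
vgcreate vg_orasoft /dev/sdb1 /dev/sdb2 /dev/sdb3
# Create a 20 GB logical volume for /u01, format it as ext4 and mount it
lvcreate -n lv_orasoft_u01 -L 20G vg_orasoft
mkfs.ext4 /dev/vg_orasoft/lv_orasoft_u01
mkdir /u01
mount /dev/vg_orasoft/lv_orasoft_u01 /u01

# Back up /etc/fstab, then make the mount persistent
cp /etc/fstab /etc/fstab.`date +%Y%m%d`
echo "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0" >> /etc/fstab
cat /etc/fstab
```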

```
[root@raclhr-12cR1-N2 ~]# vgcreate vg_orasoft /dev/sdb1 /dev/sdb2 /dev/sdb3
  Volume group "vg_orasoft" successfully created
[root@raclhr-12cR1-N2 ~]# lvcreate -n lv_orasoft_u01 -L 20G vg_orasoft
  Logical volume "lv_orasoft_u01" created
[root@raclhr-12cR1-N2 ~]# mkfs.ext4 /dev/vg_orasoft/lv_orasoft_u01
mke2fs 1.41.12 (17-May-2010)
Filesystem label=
OS type: Linux
Block size=4096 (log=2)
Fragment size=4096 (log=2)
Stride=0 blocks, Stripe width=0 blocks
1310720 inodes, 5242880 blocks
262144 blocks (5.00%) reserved for the super user
First data block=0
Maximum filesystem blocks=4294967296
160 block groups
32768 blocks per group, 32768 fragments per group
8192 inodes per group
Superblock backups stored on blocks:
        32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,
        4096000

Writing inode tables: done
Creating journal (32768 blocks): done
Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 39 mounts or
180 days, whichever comes first. Use tune2fs -c or -i to override.
[root@raclhr-12cR1-N2 ~]# mkdir /u01
mount /dev/vg_orasoft/lv_orasoft_u01 /u01
[root@raclhr-12cR1-N2 ~]# mount /dev/vg_orasoft/lv_orasoft_u01 /u01
[root@raclhr-12cR1-N2 ~]# cp /etc/fstab /etc/fstab.`date +%Y%m%d`
echo "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0" >> /etc/fstab
[root@raclhr-12cR1-N2 ~]# echo "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0" >> /etc/fstab
[root@raclhr-12cR1-N2 ~]# cat /etc/fstab

#
# /etc/fstab
# Created by anaconda on Sat Jan 14 18:56:24 2017
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/vg_rootlhr-Vol00 / ext4 defaults 1 1
UUID=fccf51c1-2d2f-4152-baac-99ead8cfbc1a /boot ext4 defaults 1 2
/dev/mapper/vg_rootlhr-Vol01 /tmp ext4 defaults 1 2
/dev/mapper/vg_rootlhr-Vol02 swap swap defaults 0 0
tmpfs /dev/shm tmpfs defaults 0 0
devpts /dev/pts devpts gid=5,mode=620 0 0
sysfs /sys sysfs defaults 0 0
proc /proc proc defaults 0 0
/dev/vg_rootlhr/Vol03 /home ext4 defaults 0 0
/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0
[root@raclhr-12cR1-N2 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.6G 52% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
[root@raclhr-12cR1-N2 ~]# vgs
  VG #PV #LV #SN Attr VSize VFree
  vg_orasoft 3 1 0 wz--n- 29.99g 9.99g
  vg_rootlhr 2 4 0 wz--n- 19.80g 1.80g
[root@raclhr-12cR1-N2 ~]# lvs
  LV VG Attr LSize Pool Origin Data% Move Log Cpy%Sync Convert
  lv_orasoft_u01 vg_orasoft -wi-ao---- 20.00g
  Vol00 vg_rootlhr -wi-ao---- 10.00g
  Vol01 vg_rootlhr -wi-ao---- 3.00g
  Vol02 vg_rootlhr -wi-ao---- 2.00g
  Vol03 vg_rootlhr -wi-ao---- 3.00g
[root@raclhr-12cR1-N2 ~]# pvs
  PV VG Fmt Attr PSize PFree
  /dev/sda2 vg_rootlhr lvm2 a-- 10.00g 0
  /dev/sda3 vg_rootlhr lvm2 a-- 9.80g 1.80g
  /dev/sdb1 vg_orasoft lvm2 a-- 10.00g 0
  /dev/sdb10 lvm2 a-- 10.00g 10.00g
  /dev/sdb11 lvm2 a-- 9.99g 9.99g
  /dev/sdb2 vg_orasoft lvm2 a-- 10.00g 0
  /dev/sdb3 vg_orasoft lvm2 a-- 10.00g 9.99g
  /dev/sdb5 lvm2 a-- 10.00g 10.00g
  /dev/sdb6 lvm2 a-- 10.00g 10.00g
  /dev/sdb7 lvm2 a-- 10.00g 10.00g
  /dev/sdb8 lvm2 a-- 10.00g 10.00g
  /dev/sdb9 lvm2 a-- 10.00g 10.00g
[root@raclhr-12cR1-N2 ~]#
```

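1.2.5 Adding Groups and Users

1.2.5.1 Adding the oracle and grid Users

Starting with Oracle 11gR2, a RAC installation consists of the Oracle Grid Infrastructure software and the Oracle Database software; Grid Infrastructure corresponds to the Oracle 10g Clusterware. Oracle recommends installing the two with different OS users. Usually the grid user installs Grid Infrastructure and the oracle user installs the database software. The grid and oracle users must have different group memberships, and for RAC each user must have the same uid and gid on every node.

● Create 6 groups: oinstall as the Oracle Inventory group, dba as the OSDBA group, oper as the OSOPER group, and asmadmin, asmdba and asmoper as the ASM administration groups.

● Create 2 users, oracle and grid, both with oinstall as the primary group. The secondary groups of oracle are dba and asmdba; those of grid are asmadmin, asmdba, asmoper and dba.

● All of the users and groups above must have the same names, IDs, passwords, group memberships and group order on every machine. The grid and oracle passwords should never expire.

Create the groups: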

```
groupadd -g 1000 oinstall
groupadd -g 1001 dba
groupadd -g 1002 oper
groupadd -g 1003 asmadmin
groupadd -g 1004 asmdba
groupadd -g 1005 asmoper
```

Create the grid and oracle users:

```
useradd -u 1000 -g oinstall -G asmadmin,asmdba,asmoper,dba -d /home/grid -m grid
useradd -u 1001 -g oinstall -G dba,asmdba -d /home/oracle -m oracle
```

If the oracle user already exists, run:

```
usermod -g oinstall -G dba,asmdba -u 1001 oracle
```

Set passwords for oracle and grid:

```
passwd oracle
passwd grid
```

Make the passwords never expire:

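```
# -M -1 removes the maximum password age, so the passwords never expire
chage -M -1 oracle
chage -M -1 grid
# Verify the password aging settings
chage -l oracle
chage -l grid
```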

Check:

```
[root@raclhr-12cR1-N1 ~]# id grid
uid=1000(grid) gid=1000(oinstall) groups=1000(oinstall),1001(dba),1003(asmadmin),1004(asmdba),1005(asmoper)
[root@raclhr-12cR1-N1 ~]# id oracle
uid=1001(oracle) gid=1000(oinstall) groups=1000(oinstall),1001(dba),1004(asmdba)
[root@raclhr-12cR1-N1 ~]#
```
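The uid, primary group and group membership must be identical on both nodes, so it helps to check them the same way on each node. The Bash sketch below uses `id -u`, `id -gn` and `id -Gn`. check_user is a hypothetical helper, and the expected values passed to it are the ones used in this article.

```shell
#!/bin/bash
# Sketch: verify a user's uid, primary group and secondary groups on this node.
# check_user USER UID PRIMARY_GROUP [GROUP...]: prints problems, returns 1 if any.
check_user() {
    local user="$1" want_uid="$2" want_gid="$3"
    shift 3
    local ok=0 g
    if [ "$(id -u "$user" 2>/dev/null)" != "$want_uid" ]; then
        echo "$user: uid is not $want_uid"; ok=1
    fi
    if [ "$(id -gn "$user" 2>/dev/null)" != "$want_gid" ]; then
        echo "$user: primary group is not $want_gid"; ok=1
    fi
    for g in "$@"; do
        # id -Gn prints all group names of the user, separated by spaces
        case " $(id -Gn "$user" 2>/dev/null) " in
            *" $g "*) ;;
            *) echo "$user: missing group $g"; ok=1 ;;
        esac
    done
    return $ok
}
```

Run `check_user grid 1000 oinstall asmadmin asmdba asmoper dba` and `check_user oracle 1001 oinstall dba asmdba` on both nodes. No output and exit status 0 mean the user matches the plan.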

1.2.5.2 Creating the Installation Directories

● The ORACLE_HOME of the Grid software must not be a subdirectory of the ORACLE_BASE.

Create the directories as root on both nodes:

```
mkdir -p /u01/app/oracle
mkdir -p /u01/app/grid
mkdir -p /u01/app/12.1.0/grid
mkdir -p /u01/app/oracle/product/12.1.0/dbhome_1
chown -R grid:oinstall /u01/app/grid
chown -R grid:oinstall /u01/app/12.1.0
chown -R oracle:oinstall /u01/app/oracle
chmod -R 775 /u01

mkdir -p /u01/app/oraInventory
chown -R grid:oinstall /u01/app/oraInventory
chmod -R 775 /u01/app/oraInventory
```
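The rule that the Grid ORACLE_HOME must not be placed inside the ORACLE_BASE can be checked with a plain string comparison. In the Bash sketch below, is_subdir is a hypothetical helper. The paths are the grid user's values from this article and must be passed without trailing slashes.

```shell
#!/bin/bash
# Sketch: check that the Grid home is not inside the grid user's ORACLE_BASE.

# is_subdir CHILD PARENT: succeed if CHILD equals PARENT or lies below it.
is_subdir() {
    local child="$1" parent="$2"
    case "$child/" in
        "$parent"/*) return 0 ;;
        *) return 1 ;;
    esac
}

GRID_BASE=/u01/app/grid
GRID_HOME=/u01/app/12.1.0/grid
if is_subdir "$GRID_HOME" "$GRID_BASE"; then
    echo "ERROR: $GRID_HOME is under $GRID_BASE"
else
    echo "OK: $GRID_HOME is outside $GRID_BASE"
fi
```

The trailing slash appended to CHILD prevents a false match between sibling paths such as /u01/app/gridx and /u01/app/grid.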

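1.2.5.3 Configuring the Environment Files of the grid and oracle Users

Edit the .bash_profile of the grid and oracle users. Either log in as each user and edit ~/.bash_profile, or edit the files directly as root:

```
vi /home/oracle/.bash_profile
vi /home/grid/.bash_profile
```

For the oracle user: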

```
umask 022
export ORACLE_SID=lhrrac1
export ORACLE_BASE=/u01/app/oracle
export ORACLE_HOME=$ORACLE_BASE/product/12.1.0/dbhome_1
export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib
export NLS_DATE_FORMAT="YYYY-MM-DD HH24:MI:SS"
export TMP=/tmp
export TMPDIR=$TMP
export PATH=$ORACLE_HOME/bin:$ORACLE_HOME/OPatch:$PATH

export EDITOR=vi
export TNS_ADMIN=$ORACLE_HOME/network/admin
export ORACLE_PATH=.:$ORACLE_BASE/dba_scripts/sql:$ORACLE_HOME/rdbms/admin
export SQLPATH=$ORACLE_HOME/sqlplus/admin

#export NLS_LANG="SIMPLIFIED CHINESE_CHINA.ZHS16GBK"    # or AL32UTF8
# Check the database language setting with: SELECT userenv('LANGUAGE') db_NLS_LANG FROM DUAL;
export NLS_LANG="AMERICAN_CHINA.ZHS16GBK"

alias sqlplus='rlwrap sqlplus'
alias rman='rlwrap rman'
alias asmcmd='rlwrap asmcmd'
```

For the grid user:

```
umask 022
export ORACLE_SID=+ASM1
export ORACLE_BASE=/u01/app/grid
export ORACLE_HOME=/u01/app/12.1.0/grid
export LD_LIBRARY_PATH=$ORACLE_HOME/lib
export NLS_DATE_FORMAT="YYYY-MM-DD HH24:MI:SS"
export PATH=$ORACLE_HOME/bin:$PATH
alias sqlplus='rlwrap sqlplus'
alias asmcmd='rlwrap asmcmd'
```

Note: on the other node, change the instance names accordingly:

oracle: export ORACLE_SID=lhrrac2

grid: export ORACLE_SID=+ASM2

1.2.5.4 Configuring the Environment Variables of root

vi /etc/profile

```
export ORACLE_HOME=/u01/app/12.1.0/grid
export PATH=$PATH:$ORACLE_HOME/bin
```

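1.2.6 Checking Required Packages

Oracle Linux 6 and Red Hat Enterprise Linux 6 need the following packages. For other versions or operating systems, see the official documentation (Database Installation Guide).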

The following packages (or later versions) must be installed:

```
binutils-2.20.51.0.2-5.11.el6 (x86_64)
compat-libcap1-1.10-1 (x86_64)
compat-libstdc++-33-3.2.3-69.el6 (x86_64)
compat-libstdc++-33-3.2.3-69.el6 (i686)
gcc-4.4.4-13.el6 (x86_64)
gcc-c++-4.4.4-13.el6 (x86_64)
glibc-2.12-1.7.el6 (i686)
glibc-2.12-1.7.el6 (x86_64)
glibc-devel-2.12-1.7.el6 (x86_64)
glibc-devel-2.12-1.7.el6 (i686)
ksh
libgcc-4.4.4-13.el6 (i686)
libgcc-4.4.4-13.el6 (x86_64)
libstdc++-4.4.4-13.el6 (x86_64)
libstdc++-4.4.4-13.el6 (i686)
libstdc++-devel-4.4.4-13.el6 (x86_64)
libstdc++-devel-4.4.4-13.el6 (i686)
libaio-0.3.107-10.el6 (x86_64)
libaio-0.3.107-10.el6 (i686)
libaio-devel-0.3.107-10.el6 (x86_64)
libaio-devel-0.3.107-10.el6 (i686)
libXext-1.1 (x86_64)
libXext-1.1 (i686)
libXtst-1.0.99.2 (x86_64)
libXtst-1.0.99.2 (i686)
libX11-1.3 (x86_64)
libX11-1.3 (i686)
libXau-1.0.5 (x86_64)
libXau-1.0.5 (i686)
libxcb-1.5 (x86_64)
libxcb-1.5 (i686)
libXi-1.3 (x86_64)
libXi-1.3 (i686)
make-3.81-19.el6
sysstat-9.0.4-11.el6 (x86_64)
```

Check command:

```
rpm -q --qf '%{NAME}-%{VERSION}-%{RELEASE} (%{ARCH})\n' binutils \
compat-libcap1 \
compat-libstdc++-33 \
gcc \
gcc-c++ \
glibc \
glibc-devel \
ksh \
libgcc \
libstdc++ \
libstdc++-devel \
libaio \
libaio-devel \
libXext \
libXtst \
libX11 \
libXau \
libxcb \
libXi \
make \
sysstat
```

Run the check:

```
[root@raclhr-12cR1-N1 ~]# rpm -q --qf '%{NAME}-%{VERSION}-%{RELEASE} (%{ARCH})\n' binutils \
> compat-libcap1 \
> compat-libstdc++ \
> gcc \
> gcc-c++ \
> glibc \
> glibc-devel \
> ksh \
> libgcc \
> libstdc++ \
> libstdc++-devel \
> libaio \
> libaio-devel \
> libXext \
> libXtst \
> libX11 \
> libXau \
> libxcb \
> libXi \
> make \
> sysstat
binutils-2.20.51.0.2-5.36.el6 (x86_64)
compat-libcap1-1.10-1 (x86_64)
package compat-libstdc++ is not installed
gcc-4.4.7-4.el6 (x86_64)
gcc-c++-4.4.7-4.el6 (x86_64)
glibc-2.12-1.132.el6 (x86_64)
glibc-2.12-1.132.el6 (i686)
glibc-devel-2.12-1.132.el6 (x86_64)
package ksh is not installed
libgcc-4.4.7-4.el6 (x86_64)
libgcc-4.4.7-4.el6 (i686)
libstdc++-4.4.7-4.el6 (x86_64)
libstdc++-devel-4.4.7-4.el6 (x86_64)
libaio-0.3.107-10.el6 (x86_64)
package libaio-devel is not installed
libXext-1.3.1-2.el6 (x86_64)
libXtst-1.2.1-2.el6 (x86_64)
libX11-1.5.0-4.el6 (x86_64)
libXau-1.0.6-4.el6 (x86_64)
libxcb-1.8.1-1.el6 (x86_64)
libXi-1.6.1-3.el6 (x86_64)
make-3.81-20.el6 (x86_64)
sysstat-9.0.4-22.el6 (x86_64)
```
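To turn the rpm output into an install list, filter for lines of the form "package NAME is not installed". The Bash sketch below does that. missing_packages is a hypothetical helper, and the list uses compat-libstdc++-33, which is the RHEL 6 name of the compat-libstdc++ package.

```shell
#!/bin/bash
# Sketch: list the packages that "rpm -q" reports as missing.
# missing_packages reads rpm -q output on stdin and prints one package name per line.
missing_packages() {
    sed -n 's/^package \(.*\) is not installed$/\1/p'
}

# Package names from the RHEL 6 list above
pkgs="binutils compat-libcap1 compat-libstdc++-33 gcc gcc-c++ glibc glibc-devel ksh
libgcc libstdc++ libstdc++-devel libaio libaio-devel libXext libXtst libX11 libXau
libxcb libXi make sysstat"

if command -v rpm >/dev/null 2>&1; then
    # $pkgs is left unquoted on purpose so that it splits into separate arguments
    rpm -q $pkgs | missing_packages
fi
```

The result can be passed straight to yum, for example `yum install $(rpm -q $pkgs | missing_packages)`.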

1.2.6.1 Configuring a Local yum Repository

```
[root@raclhr-12cR1-N1 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 52% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
```

```
[root@raclhr-12cR1-N1 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 52% /
tmpfs 1000M 76K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
/dev/sr0 3.6G 3.6G 0 100% /media/RHEL_6.5 x86_64 Disc 1
[root@raclhr-12cR1-N1 ~]# mkdir -p /media/lhr/cdrom
[root@raclhr-12cR1-N1 ~]# mount /dev/sr0 /media/lhr/cdrom/
mount: block device /dev/sr0 is write-protected, mounting read-only
[root@raclhr-12cR1-N1 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 52% /
tmpfs 1000M 76K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
/dev/sr0 3.6G 3.6G 0 100% /media/RHEL_6.5 x86_64 Disc 1
/dev/sr0 3.6G 3.6G 0 100% /media/lhr/cdrom
[root@raclhr-12cR1-N1 ~]# cd /etc/yum.repos.d/
[root@raclhr-12cR1-N1 yum.repos.d]# cp rhel-media.repo rhel-media.repo.bk
[root@raclhr-12cR1-N1 yum.repos.d]# more rhel-media.repo
[rhel-media]
name=Red Hat Enterprise Linux 6.5
baseurl=file:///media/cdrom
enabled=1
gpgcheck=1
gpgkey=file:///media/cdrom/RPM-GPG-KEY-redhat-release
[root@raclhr-12cR1-N1 yum.repos.d]#
```

Alternatively, copy the entire contents of the DVD to a local directory and build the local yum repository from it as follows:

```
# Run in the directory that holds the copied RPM packages
rpm -ivh deltarpm-3.5-0.5.20090913git.el6.x86_64.rpm
rpm -ivh python-deltarpm-3.5-0.5.20090913git.el6.x86_64.rpm
rpm -ivh createrepo-0.9.9-18.el6.noarch.rpm
createrepo .
```

The author used the DVD directly and did not set this up.

1.2.6.2 Installing Missing Packages

```
# Quote the patterns so the shell does not expand them against local files
yum install 'compat-libstdc++*'
yum install 'libaio-devel*'
yum install 'ksh*'
```

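Finally, run the check again to make sure every package is installed. Package naming and version differences sometimes make the check misleading, so also confirm with `rpm -qa | grep libstdc`.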

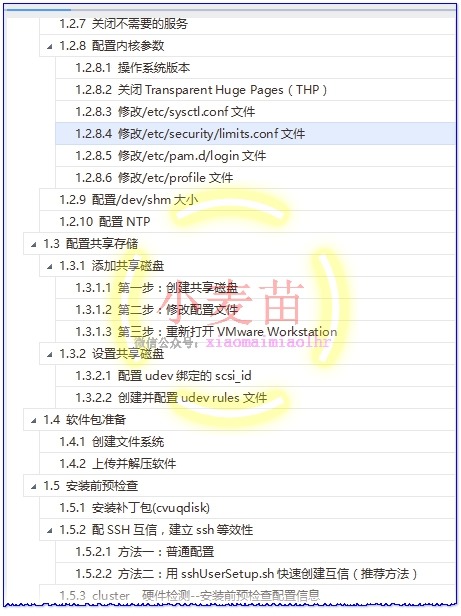

1.2.7 Disabling Unneeded Services

```
# Services that do not exist on RHEL 6 only produce an error message and can be ignored
chkconfig autofs off
chkconfig acpid off
chkconfig sendmail off
chkconfig cups-config-daemon off
chkconfig cups off
chkconfig xfs off
chkconfig lm_sensors off
chkconfig gpm off
chkconfig openibd off
chkconfig pcmcia off
chkconfig cpuspeed off
chkconfig nfslock off
chkconfig ip6tables off
chkconfig rpcidmapd off
chkconfig apmd off
chkconfig arptables_jf off
chkconfig microcode_ctl off
chkconfig rpcgssd off
chkconfig ntpd off
```

1.2.8 Configuring Kernel Parameters

1.2.8.1 OS Version

/usr/bin/lsb_release -a

```
[root@raclhr-12cR1-N1 ~]# lsb_release -a
LSB Version: :base-4.0-amd64:base-4.0-noarch:core-4.0-amd64:core-4.0-noarch:graphics-4.0-amd64:graphics-4.0-noarch:printing-4.0-amd64:printing-4.0-noarch
Distributor ID: RedHatEnterpriseServer
Description: Red Hat Enterprise Linux Server release 6.5 (Santiago)
Release: 6.5
Codename: Santiago
[root@raclhr-12cR1-N1 ~]# uname -a
Linux raclhr-12cR1-N1 2.6.32-431.el6.x86_64 #1 SMP Sun Nov 10 22:19:54 EST 2013 x86_64 x86_64 x86_64 GNU/Linux
[root@raclhr-12cR1-N1 ~]#
[root@raclhr-12cR1-N1 ~]# cat /proc/version
Linux version 2.6.32-431.el6.x86_64 (mockbuild@x86-023.build.eng.bos.redhat.com) (gcc version 4.4.7 20120313 (Red Hat 4.4.7-4) (GCC) ) #1 SMP Sun Nov 10 22:19:54 EST 2013
[root@raclhr-12cR1-N1 ~]#
```

1.2.8.2 Disabling Transparent Huge Pages (THP)

(1) Check the current transparent_hugepage setting:

```
cat /sys/kernel/mm/redhat_transparent_hugepage/enabled
```

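Output `always madvise [never]`: the value in brackets is the active one, so `never` means THP is disabled.

(2) Disable transparent_hugepage at boot

Edit /etc/rc.local (`vi /etc/rc.local`) and add the following: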

```
if test -f /sys/kernel/mm/redhat_transparent_hugepage/enabled; then
   echo never > /sys/kernel/mm/redhat_transparent_hugepage/enabled
fi
```
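To confirm the setting after a reboot, read the bracketed value from the sysfs file. RHEL 6 uses /sys/kernel/mm/redhat_transparent_hugepage, while upstream kernels use /sys/kernel/mm/transparent_hugepage. The Bash sketch below checks both paths. thp_mode is a hypothetical helper.

```shell
#!/bin/bash
# Sketch: print the active THP mode, i.e. the value shown in brackets.
# thp_mode reads a line such as "always madvise [never]" on stdin.
thp_mode() {
    sed -n 's/.*\[\([a-z]*\)\].*/\1/p'
}

# RHEL 6 path first, then the upstream kernel path
for f in /sys/kernel/mm/redhat_transparent_hugepage/enabled \
         /sys/kernel/mm/transparent_hugepage/enabled; do
    if [ -r "$f" ]; then
        echo "$f: $(thp_mode < "$f")"
    fi
done
```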

1.2.8.3 Editing /etc/sysctl.conf

Add the following to /etc/sysctl.conf (`vi /etc/sysctl.conf`):

```
# for oracle
fs.aio-max-nr = 1048576
fs.file-max = 6815744
# kernel.shmall is counted in pages, kernel.shmmax in bytes
kernel.shmall = 2097152
kernel.shmmax = 1054472192
kernel.shmmni = 4096
kernel.sem = 250 32000 100 128
net.ipv4.ip_local_port_range = 9000 65500
net.core.rmem_default = 262144
net.core.rmem_max = 4194304
net.core.wmem_default = 262144
net.core.wmem_max = 1048576
```

Apply the parameters immediately:

/sbin/sysctl -p
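A common starting point for kernel.shmmax is half of physical memory, expressed in bytes. kernel.shmall is counted in pages. The Bash sketch below prints both values next to the running settings for comparison. half_ram_bytes is a hypothetical helper, and the half-of-RAM rule is used here only as a guideline.

```shell
#!/bin/bash
# Sketch: compare kernel.shmmax with half of physical memory.
# Assumption: "half of RAM" is a guideline for shmmax, not a hard requirement.

# half_ram_bytes MEMTOTAL_KB: print half of the given memory size, in bytes.
half_ram_bytes() {
    echo $(( $1 * 1024 / 2 ))
}

if [ -r /proc/meminfo ] && [ -r /proc/sys/kernel/shmmax ]; then
    mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
    echo "half of RAM:   $(half_ram_bytes "$mem_kb") bytes"
    echo "kernel.shmmax: $(cat /proc/sys/kernel/shmmax) bytes"
    echo "kernel.shmall: $(cat /proc/sys/kernel/shmall) pages of $(getconf PAGE_SIZE) bytes"
fi
```

For the 2046592 kB of MemTotal shown in 1.2.4.1, half_ram_bytes returns 1047855104, which is close to the kernel.shmmax value used here.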

1.2.8.4 Editing /etc/security/limits.conf

Check nofile (open files):

```
ulimit -Sn
ulimit -Hn
```

Check nproc (user processes):

```
ulimit -Su
ulimit -Hu
```

Check stack (stack size):

```
ulimit -Ss
ulimit -Hs
```

Set the resource limits for the OS users grid and oracle:

```
cp /etc/security/limits.conf /etc/security/limits.conf.`date +%Y%m%d`
echo "grid soft nofile 1024
grid hard nofile 65536
grid soft stack 10240
grid hard stack 32768
grid soft nproc 2047
grid hard nproc 16384
oracle soft nofile 1024
oracle hard nofile 65536
oracle soft stack 10240
oracle hard stack 32768
oracle soft nproc 2047
oracle hard nproc 16384
root soft nproc 2047
" >> /etc/security/limits.conf
```
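After appending the entries, you can read them back per user to confirm the file contains what you intended. The Bash sketch below parses limits.conf-style lines ("domain type item value"). get_limit and limit_at_least are hypothetical helpers. The sketch matches only exact user names, ignoring @group and * entries. If an entry is duplicated, it reports the last occurrence.

```shell
#!/bin/bash
# Sketch: read values from a limits.conf-style file ("domain type item value").

# get_limit USER TYPE ITEM [FILE]: print the configured value, or nothing if absent.
get_limit() {
    local user="$1" type="$2" item="$3" file="${4:-/etc/security/limits.conf}"
    # Comment lines never match because their first field starts with "#"
    awk -v u="$user" -v t="$type" -v i="$item" \
        '$1 == u && $2 == t && $3 == i { v = $4 } END { if (v != "") print v }' "$file"
}

# limit_at_least USER TYPE ITEM MIN [FILE]: succeed if the value is numeric and >= MIN.
limit_at_least() {
    local v
    v=$(get_limit "$1" "$2" "$3" "$5")
    case "$v" in
        ''|*[!0-9]*) return 1 ;;   # missing or non-numeric (e.g. "unlimited") counts as failure here
    esac
    [ "$v" -ge "$4" ]
}
```

For example, `limit_at_least grid hard nofile 65536 && limit_at_least oracle hard nproc 16384 && echo ok` prints ok once the entries above are in place.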

1.2.8.5 Editing /etc/pam.d/login

```
echo "session required pam_limits.so" >> /etc/pam.d/login
more /etc/pam.d/login
```

1.2.8.6 Editing /etc/profile

vi /etc/profile

```
# Quoting $USER and $SHELL avoids "unary operator expected" errors when they are empty
if [ "$USER" = "oracle" ] || [ "$USER" = "grid" ]; then
    if [ "$SHELL" = "/bin/ksh" ]; then
        ulimit -p 16384
        ulimit -n 65536
    else
        ulimit -u 16384 -n 65536
    fi
    umask 022
fi
```

1.2.9 Configuring the Size of /dev/shm

vi /etc/fstab

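In /etc/fstab, set the size of the tmpfs entry for /dev/shm:

```
tmpfs /dev/shm tmpfs defaults,size=2G 0 0
```

Then remount it so that the new size takes effect without a reboot:

```
mount -o remount /dev/shm
```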

```
[root@raclhr-12cR1-N2 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 53% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 573M 2.3G 20% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 6.8G 12G 37% /u01
.host:/ 331G 272G 59G 83% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_soft 20G 172M 19G 1% /soft
[root@raclhr-12cR1-N2 ~]# more /etc/fstab

#
# /etc/fstab
# Created by anaconda on Sat Jan 14 18:56:24 2017
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/vg_rootlhr-Vol00 / ext4 defaults 1 1
UUID=fccf51c1-2d2f-4152-baac-99ead8cfbc1a /boot ext4 defaults 1 2
/dev/mapper/vg_rootlhr-Vol01 /tmp ext4 defaults 1 2
/dev/mapper/vg_rootlhr-Vol02 swap swap defaults 0 0
tmpfs /dev/shm tmpfs defaults,size=2G 0 0
devpts /dev/pts devpts gid=5,mode=620 0 0
sysfs /sys sysfs defaults 0 0
proc /proc proc defaults 0 0
/dev/vg_rootlhr/Vol03 /home ext4 defaults 0 0
/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0
[root@raclhr-12cR1-N2 ~]# mount -o remount /dev/shm
[root@raclhr-12cR1-N2 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 53% /
tmpfs 2.0G 72K 2.0G 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 573M 2.3G 20% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 6.8G 12G 37% /u01
.host:/ 331G 272G 59G 83% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_soft 20G 172M 19G 1% /soft
```

1.2.10 Configuring NTP

Network Time Protocol Setting

● You have two options for time synchronization: an operating system configured network time protocol (NTP), or Oracle Cluster Time Synchronization Service.

● Oracle Cluster Time Synchronization Service is designed for organizations whose cluster servers are unable to access NTP services.

● If you use NTP, then the Oracle Cluster Time Synchronization daemon (ctssd) starts up in observer mode. If you do not have NTP daemons, then ctssd starts up in active mode and synchronizes time among cluster members without contacting an external time server.

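You can use either the operating system's NTP service or Oracle's own CTSS. If NTP is not enabled, Oracle starts its ctssd process automatically.

Starting with Oracle 11gR2, RAC uses the Cluster Time Synchronization Service (CTSS) to synchronize time across the nodes. If the installer finds NTP inactive, CTSS is installed in active mode and synchronizes the time of all nodes. If NTP is configured, CTSS starts in observer mode and Oracle Clusterware does not actively synchronize time in the cluster.

This installation uses CTSS. Run the following as root on both nodes: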

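```
# Stop and disable ntpd and move its configuration file away, so that the
# installer treats NTP as not configured and CTSS runs in active mode
/sbin/service ntpd stop
mv /etc/ntp.conf /etc/ntp.conf.bak
service ntpd status
chkconfig ntpd off
```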

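1.3 Configuring Shared Storage

This is the most important and most error-prone part. Since this is the author's second RAC installation on virtual machines, some details are not repeated here.

1.3.1 Adding Shared Disks

1.3.1.1 Step 1: Create the Shared Disks

This can be done from the command line (cmd) or the graphical interface; this article uses the command line.

In cmd, change to the VMware Workstation installation directory and run the following commands to create the disks: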

```
C:
cd C:\Program Files (x86)\VMware\VMware Workstation
REM -c: create, -s: size, -a: adapter type, -t 2: preallocated disk in a single file
vmware-vdiskmanager.exe -c -s 6g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\ocr_vote.vmdk"
vmware-vdiskmanager.exe -c -s 10g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\data.vmdk"
vmware-vdiskmanager.exe -c -s 10g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\fra.vmdk"
```

```
D:\Users\xiaomaimiao>C:

C:\>cd C:\Program Files (x86)\VMware\VMware Workstation

C:\Program Files (x86)\VMware\VMware Workstation>vmware-vdiskmanager.exe -c -s 6g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\ocr_vote.vmdk"
Creating disk 'E:\My Virtual Machines\rac12cR1\sharedisk\ocr_vote.vmdk'
  Create: 100% done.
Virtual disk creation successful.

C:\Program Files (x86)\VMware\VMware Workstation>vmware-vdiskmanager.exe -c -s 10g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\data.vmdk"
Creating disk 'E:\My Virtual Machines\rac12cR1\sharedisk\data.vmdk'
  Create: 100% done.
Virtual disk creation successful.

C:\Program Files (x86)\VMware\VMware Workstation>vmware-vdiskmanager.exe -c -s 10g -a lsilogic -t 2 "E:\My Virtual Machines\rac12cR1\sharedisk\fra.vmdk"
Creating disk 'E:\My Virtual Machines\rac12cR1\sharedisk\fra.vmdk'
  Create: 100% done.
Virtual disk creation successful.
```

Note: in 12c R1 the OCR disk group needs at least 5501 MB of space. With less space, the installer reports:

```
[INS-30515] Insufficient space available in the selected disks.
Cause - Insufficient space available in the selected Disks. At least, 5,501 MB of free space is required.
Action - Choose additional disks such that the total size should be at least 5,501 MB.
```

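1.3.1.2 Step 2: Edit the Configuration Files

Shut down both virtual machines and open each VM's configuration file (<VM name>.vmx) in Notepad. The files of both nodes must be edited.

Add the following, where scsiX:Y means device Y on SCSI bus X: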

|

#shared disks configure disk.EnableUUID="TRUE" disk.locking = "FALSE" scsi1.shared = "TRUE" diskLib.dataCacheMaxSize = "0" diskLib.dataCacheMaxReadAheadSize = "0" diskLib.dataCacheMinReadAheadSize = "0" diskLib.dataCachePageSize= "4096" diskLib.maxUnsyncedWrites = "0"

scsi1.present = "TRUE" scsi1.virtualDev = "lsilogic" scsil.sharedBus = "VIRTUAL" scsi1:0.present = "TRUE" scsi1:0.mode = "independent-persistent" scsi1:0.fileName = "../sharedisk/ocr_vote.vmdk" scsi1:0.deviceType = "disk" scsi1:0.redo = "" scsi1:1.present = "TRUE" scsi1:1.mode = "independent-persistent" scsi1:1.fileName = "../sharedisk/data.vmdk" scsi1:1.deviceType = "disk" scsi1:1.redo = "" scsi1:2.present = "TRUE" scsi1:2.mode = "independent-persistent" scsi1:2.fileName = "../sharedisk/fra.vmdk" scsi1:2.deviceType = "disk" scsi1:2.redo = ""

|
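Because both .vmx files must contain identical per-disk entries, generating them instead of typing them reduces the chance of a mismatch between the two nodes. The function below is a hypothetical sketch that prints only the scsi1:N entries, in the same form as the listing above; the global settings (disk.locking, scsi1.sharedBus and so on) still have to be added by hand. It assumes a POSIX shell on the VMware host, for example Git Bash.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints the per-disk scsi1:N entries for the shared disks
# passed as arguments (paths relative to the VM directory), numbering the
# devices 0, 1, 2, ... in argument order.
gen_shared_disk_entries() {
    local n=0 disk
    for disk in "$@"; do
        printf 'scsi1:%d.present = "TRUE"\n' "$n"
        printf 'scsi1:%d.mode = "independent-persistent"\n' "$n"
        printf 'scsi1:%d.fileName = "%s"\n' "$n" "$disk"
        printf 'scsi1:%d.deviceType = "disk"\n' "$n"
        printf 'scsi1:%d.redo = ""\n' "$n"
        n=$(( n + 1 ))
    done
}
```

With both VMs powered off, `gen_shared_disk_entries ../sharedisk/ocr_vote.vmdk ../sharedisk/data.vmdk ../sharedisk/fra.vmdk >> node1.vmx` appends the entries (node1.vmx is a placeholder for your VM's file name); run the same command for the second VM.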

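If VMware reports that some of these parameters do not exist, set the virtual machine's hardware compatibility to Workstation 9.0.

1.3.1.3 Step 3: Restart VMware Workstation

Close VMware Workstation and start it again. If the shared disks are loaded correctly, the configuration is right. Pay particular attention to the second node: the hard disk and network adapter configuration must be identical on both nodes; if it is not, recheck the configuration.

Then power on both virtual machines.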

|

[root@raclhr-12cR1-N1 ~]# fdisk -l | grep /dev/sd
Disk /dev/sda: 21.5 GB, 21474836480 bytes
/dev/sda1 * 1 26 204800 83 Linux
/dev/sda2 26 1332 10485760 8e Linux LVM
/dev/sda3 1332 2611 10279936 8e Linux LVM
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
/dev/sdb1 1 1306 10485760 8e Linux LVM
/dev/sdb2 1306 2611 10485760 8e Linux LVM
/dev/sdb3 2611 3917 10485760 8e Linux LVM
/dev/sdb4 3917 13055 73399296 5 Extended
/dev/sdb5 3917 5222 10485760 8e Linux LVM
/dev/sdb6 5223 6528 10485760 8e Linux LVM
/dev/sdb7 6528 7834 10485760 8e Linux LVM
/dev/sdb8 7834 9139 10485760 8e Linux LVM
/dev/sdb9 9139 10445 10485760 8e Linux LVM
/dev/sdb10 10445 11750 10485760 8e Linux LVM
/dev/sdb11 11750 13055 10477568 8e Linux LVM
Disk /dev/sdc: 6442 MB, 6442450944 bytes
Disk /dev/sdd: 10.7 GB, 10737418240 bytes
Disk /dev/sde: 10.7 GB, 10737418240 bytes
[root@raclhr-12cR1-N1 ~]# fdisk -l | grep "Disk /dev/sd"
Disk /dev/sda: 21.5 GB, 21474836480 bytes
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
Disk /dev/sdc: 6442 MB, 6442450944 bytes
Disk /dev/sdd: 10.7 GB, 10737418240 bytes
Disk /dev/sde: 10.7 GB, 10737418240 bytes
[root@raclhr-12cR1-N1 ~]#

|
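To compare the disk layout of the two nodes without reading the listings by eye, the fdisk output can be reduced to device and size pairs. This is a hypothetical awk-based helper; it assumes the RHEL 6 fdisk format shown above (`Disk /dev/sdX: ..., N bytes`).

```bash
#!/usr/bin/env bash
# Hypothetical helper: reads "fdisk -l" output on stdin and prints one
# "device bytes" pair per whole disk (lines starting with "Disk /dev/sd").
disk_sizes() {
    awk '/^Disk \/dev\/sd/ {
        dev = $2
        sub(/:$/, "", dev)   # strip the trailing colon from "/dev/sdc:"
        print dev, $5        # $5 is the size in bytes
    }'
}
```

Run `fdisk -l 2>/dev/null | disk_sizes > /tmp/disks.$(hostname)` on each node, copy one file to the other node and compare the two with diff; no output means both nodes see the same disks.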

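1.3.2 Configure the shared disks

1.3.2.1 Obtain the scsi_id values for udev binding

Note the following two points:

● Switch to the root user first.

● The UUIDs obtained on the two nodes must be identical; if they differ, the earlier configuration is wrong.

1. The location of the scsi_id command differs between operating systems.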

|

[root@raclhr-12cR1-N1 ~]# cat /etc/issue
Red Hat Enterprise Linux Server release 6.5 (Santiago)
Kernel \r on an \m

[root@raclhr-12cR1-N1 ~]# which scsi_id
/sbin/scsi_id
[root@raclhr-12cR1-N1 ~]#

|

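Note: from RHEL 6 on, call scsi_id with --whitelisted --replace-whitespace (plus --device=/dev/sdX); the RHEL 5 style invocation with -g -u -s no longer works.

2. Edit /etc/scsi_id.config; if the file does not exist, create it with the following line: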

|

[root@raclhr-12cR1-N1 ~]# echo "options=--whitelisted --replace-whitespace" > /etc/scsi_id.config
[root@raclhr-12cR1-N1 ~]# more /etc/scsi_id.config
options=--whitelisted --replace-whitespace
[root@raclhr-12cR1-N1 ~]# |

3. Get the UUIDs:

|

scsi_id --whitelisted --replace-whitespace --device=/dev/sdc
scsi_id --whitelisted --replace-whitespace --device=/dev/sdd
scsi_id --whitelisted --replace-whitespace --device=/dev/sde |

|

[root@raclhr-12cR1-N1 ~]# scsi_id --whitelisted --replace-whitespace --device=/dev/sdc
36000c29fd84cfe0767838541518ef8fe
[root@raclhr-12cR1-N1 ~]# scsi_id --whitelisted --replace-whitespace --device=/dev/sdd
36000c29c0ac0339f4b5282b47c49285b
[root@raclhr-12cR1-N1 ~]# scsi_id --whitelisted --replace-whitespace --device=/dev/sde
36000c29e4652a45192e32863956c1965

|

The values obtained on the two nodes should be identical.

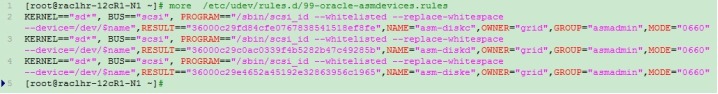
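The same node-to-node comparison can be done for the scsi_id values. The sketch below prints one `device id` line per disk; SCSI_ID is a hypothetical override hook (so the function can be tried without real disks) that defaults to /sbin/scsi_id, called with the RHEL 6 options used above.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints "device id" for each disk given as an argument.
# SCSI_ID is an assumed override hook; by default the real /sbin/scsi_id runs.
SCSI_ID=${SCSI_ID:-/sbin/scsi_id}

print_disk_ids() {
    local dev id
    for dev in "$@"; do
        id=$("$SCSI_ID" --whitelisted --replace-whitespace --device="$dev") || return 1
        echo "$dev $id"
    done
}
```

As root on each node, run `print_disk_ids /dev/sdc /dev/sdd /dev/sde > /tmp/ids.$(hostname)`, copy one file to the other node and diff the two; diff should print nothing.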

1.3.2.2 Create and configure the udev rules file

Run the following script directly:

|

# Back up any existing rules file (an error here only means there was none)
mv /etc/udev/rules.d/99-oracle-asmdevices.rules /etc/udev/rules.d/99-oracle-asmdevices.rules_bk
# One rule per shared disk; \$name is written literally for udev to expand
for i in c d e ;
do
echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\",RESULT==\"`scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\",NAME=\"asm-disk$i\",OWNER=\"grid\",GROUP=\"asmadmin\",MODE=\"0660\"" >> /etc/udev/rules.d/99-oracle-asmdevices.rules
done
start_udev

|
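The long echo line above is easy to break with a misplaced escape. A hypothetical alternative is to print each rule from a single-quoted printf format, so `$name` stays literal for udev without any backslashes; for disk c and its ID it produces exactly the first rule line in the generated output shown below.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints one 99-oracle-asmdevices.rules line for disk
# letter $1 (c -> /dev/sdc) whose scsi_id value is $2. The format string is
# single-quoted, so $name reaches the rules file unexpanded.
udev_rule() {
    local letter=$1 id=$2
    printf 'KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="%s",NAME="asm-disk%s",OWNER="grid",GROUP="asmadmin",MODE="0660"\n' "$id" "$letter"
}
```

Usage as root: `for i in c d e; do udev_rule "$i" "$(/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i)"; done >> /etc/udev/rules.d/99-oracle-asmdevices.rules`.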

Alternatively, generate the rules step by step with the following code, which only prints them:

|

for i in c d e ;
do
echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\",RESULT==\"`scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\",NAME=\"asm-disk$i\",OWNER=\"grid\",GROUP=\"asmadmin\",MODE=\"0660\""
done |

|

[root@raclhr-12cR1-N1 ~]# for i in c d e ;
> do
> echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\",RESULT==\"`scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\",NAME=\"asm-disk$i\",OWNER=\"grid\",GROUP=\"asmadmin\",MODE=\"0660\""
> done
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29fd84cfe0767838541518ef8fe",NAME="asm-diskc",OWNER="grid",GROUP="asmadmin",MODE="0660"
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29c0ac0339f4b5282b47c49285b",NAME="asm-diskd",OWNER="grid",GROUP="asmadmin",MODE="0660"
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29e4652a45192e32863956c1965",NAME="asm-diske",OWNER="grid",GROUP="asmadmin",MODE="0660"
[root@raclhr-12cR1-N1 ~]#

|

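Edit /etc/udev/rules.d/99-oracle-asmdevices.rules with vi and add the lines generated by the script above.

Note that each rule (each KERNEL== entry) must stay on a single line; it must not be wrapped.

Check the result: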

[root@raclhr-12cR1-N1 ~]# ll /dev/asm*

ls: cannot access /dev/asm*: No such file or directory

[root@raclhr-12cR1-N1 ~]# start_udev

Starting udev: [ OK ]

[root@raclhr-12cR1-N1 ~]# ll /dev/asm*

brw-rw---- 1 grid asmadmin 8, 32 Jan 16 16:17 /dev/asm-diskc

brw-rw---- 1 grid asmadmin 8, 48 Jan 16 16:17 /dev/asm-diskd

brw-rw---- 1 grid asmadmin 8, 64 Jan 16 16:17 /dev/asm-diske

[root@raclhr-12cR1-N1 ~]#
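Instead of inspecting this listing by eye on each node, ownership and mode can be checked mechanically. The awk filter below is a hypothetical sketch that expects `ls -l` output like the one above and flags any device that is not a block device with mode brw-rw---- owned by grid:asmadmin.

```bash
#!/usr/bin/env bash
# Hypothetical helper: reads "ls -l /dev/asm*" output on stdin, prints
# "BAD: <device>" for every entry that is not brw-rw---- grid asmadmin,
# and exits non-zero if at least one entry is wrong.
check_asm_perms() {
    awk '
        NF == 0 { next }   # ignore empty lines
        substr($1, 1, 10) != "brw-rw----" || $3 != "grid" || $4 != "asmadmin" {
            print "BAD: " $NF
            bad = 1
        }
        END { exit bad }
    '
}
```

Usage: `ls -l /dev/asm* | check_asm_perms && echo "ASM device permissions OK"`. Only the first ten characters of the mode field are compared, so a trailing SELinux marker such as "." does not cause a false alarm.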

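Reload the rules and restart udev (on RHEL 6 the RHEL 5 command udevcontrol reload_rules has been replaced by udevadm control --reload-rules):

|

/sbin/udevadm control --reload-rules
/sbin/start_udev |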

Verify:

|

udevadm info --query=all --name=asm-diskc
udevadm info --query=all --name=asm-diskd
udevadm info --query=all --name=asm-diske |

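The complete session is shown below. Note that after start_udev, fdisk -l no longer lists sdc, sdd and sde, because the NAME= rules rename those device nodes to /dev/asm-disk*: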

|

[root@raclhr-12cR1-N1 ~]# fdisk -l | grep "Disk /dev/sd"
Disk /dev/sda: 21.5 GB, 21474836480 bytes
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
Disk /dev/sdc: 6442 MB, 6442450944 bytes
Disk /dev/sdd: 10.7 GB, 10737418240 bytes
Disk /dev/sde: 10.7 GB, 10737418240 bytes
[root@raclhr-12cR1-N1 ~]# mv /etc/udev/rules.d/99-oracle-asmdevices.rules /etc/udev/rules.d/99-oracle-asmdevices.rules_bk
[root@raclhr-12cR1-N1 ~]# for i in c d e ;
> do
> echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\",RESULT==\"`scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\",NAME=\"asm-disk$i\",OWNER=\"grid\",GROUP=\"asmadmin\",MODE=\"0660\"" >> /etc/udev/rules.d/99-oracle-asmdevices.rules
> done
[root@raclhr-12cR1-N1 ~]# more /etc/udev/rules.d/99-oracle-asmdevices.rules
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29fd84cfe0767838541518ef8fe",NAME="asm-diskc",OWNER="grid",GROUP="asmadmin",MODE="0660"
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29c0ac0339f4b5282b47c49285b",NAME="asm-diskd",OWNER="grid",GROUP="asmadmin",MODE="0660"
KERNEL=="sd*", BUS=="scsi", PROGRAM=="/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/$name",RESULT=="36000c29e4652a45192e32863956c1965",NAME="asm-diske",OWNER="grid",GROUP="asmadmin",MODE="0660"
[root@raclhr-12cR1-N1 ~]#
[root@raclhr-12cR1-N1 ~]# fdisk -l | grep "Disk /dev/sd"
Disk /dev/sda: 21.5 GB, 21474836480 bytes
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
Disk /dev/sdc: 6442 MB, 6442450944 bytes
Disk /dev/sdd: 10.7 GB, 10737418240 bytes
Disk /dev/sde: 10.7 GB, 10737418240 bytes
[root@raclhr-12cR1-N1 ~]# start_udev
Starting udev: [ OK ]
[root@raclhr-12cR1-N1 ~]# fdisk -l | grep "Disk /dev/sd"
Disk /dev/sda: 21.5 GB, 21474836480 bytes
Disk /dev/sdb: 107.4 GB, 107374182400 bytes
[root@raclhr-12cR1-N1 ~]# |

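1.4 Preparing the software packages

1.4.1 Create a file system

On node 1, create the file system /soft with 20 GB of space to hold the unzipped Oracle Database and Grid Infrastructure software.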

|

vgextend vg_orasoft /dev/sdb5 /dev/sdb6
lvcreate -n lv_orasoft_soft -L 20G vg_orasoft
mkfs.ext4 /dev/vg_orasoft/lv_orasoft_soft
mkdir /soft
mount /dev/vg_orasoft/lv_orasoft_soft /soft

|
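A mount made this way is not restored after a reboot. If /soft should persist until the installation is finished, one common approach is an /etc/fstab entry; the helper below is a hypothetical sketch that only prints such a line, assuming ext4 with default mount options.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints an /etc/fstab line for a logical volume.
# Assumptions: ext4, default mount options, no dump (0), fsck pass 2.
fstab_line() {
    local lv=$1 mountpoint=$2
    printf '%s\t%s\text4\tdefaults\t0 2\n' "$lv" "$mountpoint"
}
```

Usage as root: `fstab_line /dev/vg_orasoft/lv_orasoft_soft /soft >> /etc/fstab`, then `mount -a` to confirm the entry is valid.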

|

[root@raclhr-12cR1-N2 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 52% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_soft 20G 172M 19G 1% /soft
[root@raclhr-12cR1-N2 ~]#

|

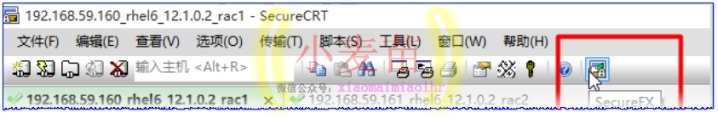

1.4.2 Upload and unzip the software

Open SecureFX:

Copy and paste the files into the /soft directory and wait for the upload to finish:

|

[root@raclhr-12cR1-N1 ~]# ll -h /soft/p*
-rw-r--r-- 1 root root 1.6G Jan 14 03:28 /soft/p17694377_121020_Linux-x86-64_1of8.zip
-rw-r--r-- 1 root root 968M Jan 14 03:19 /soft/p17694377_121020_Linux-x86-64_2of8.zip
-rw-r--r-- 1 root root 1.7G Jan 14 03:47 /soft/p17694377_121020_Linux-x86-64_3of8.zip
-rw-r--r-- 1 root root 617M Jan 14 03:00 /soft/p17694377_121020_Linux-x86-64_4of8.zip
[root@raclhr-12cR1-N1 ~]# |

Open two terminal windows and run one of the following lines in each to unzip the installation packages:

|

unzip /soft/p17694377_121020_Linux-x86-64_1of8.zip -d /soft/ && unzip /soft/p17694377_121020_Linux-x86-64_2of8.zip -d /soft/
unzip /soft/p17694377_121020_Linux-x86-64_3of8.zip -d /soft/ && unzip /soft/p17694377_121020_Linux-x86-64_4of8.zip -d /soft/ |

Parts 1 and 2 are the database installation packages; parts 3 and 4 are the Grid Infrastructure installation packages.

After unzipping:

|

[root@raclhr-12cR1-N1 ~]# cd /soft
[root@raclhr-12cR1-N1 soft]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00 9.9G 4.9G 4.5G 52% /
tmpfs 1000M 72K 1000M 1% /dev/shm
/dev/sda1 194M 35M 150M 19% /boot
/dev/mapper/vg_rootlhr-Vol01 3.0G 70M 2.8G 3% /tmp
/dev/mapper/vg_rootlhr-Vol03 3.0G 69M 2.8G 3% /home
/dev/mapper/vg_orasoft-lv_orasoft_u01 20G 172M 19G 1% /u01
.host:/ 331G 234G 97G 71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_soft 20G 11G 8.6G 54% /soft
[root@raclhr-12cR1-N1 soft]# du -sh ./*
2.8G ./database
2.5G ./grid
16K ./lost+found
1.6G ./p17694377_121020_Linux-x86-64_1of8.zip
968M ./p17694377_121020_Linux-x86-64_2of8.zip
1.7G ./p17694377_121020_Linux-x86-64_3of8.zip
618M ./p17694377_121020_Linux-x86-64_4of8.zip
[root@raclhr-12cR1-N1 soft]#

|
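The two windows are only needed to run the database and grid unzip chains at the same time. A sketch of the same thing from a single shell, using background jobs and wait, follows; UNZIP and SRC are hypothetical override hooks (defaults: unzip and /soft), and -q only suppresses unzip's file listing.

```bash
#!/usr/bin/env bash
# Sketch: unzips parts 1-2 (database) and parts 3-4 (grid) in parallel.
# UNZIP and SRC are assumed override hooks, not part of the original steps.
UNZIP=${UNZIP:-unzip}
SRC=${SRC:-/soft}
PREFIX=p17694377_121020_Linux-x86-64

# Unzip the given part numbers one after another (parts of one product
# extract into the same directory, so they are not run concurrently).
unzip_parts() {
    local part
    for part in "$@"; do
        "$UNZIP" -q "$SRC/${PREFIX}_${part}of8.zip" -d "$SRC/" || return 1
    done
}

# Run both chains as background jobs and wait for both, failing if either fails.
unzip_all() {
    local db grid rc=0
    unzip_parts 1 2 &
    db=$!
    unzip_parts 3 4 &
    grid=$!
    wait "$db" || rc=1
    wait "$grid" || rc=1
    return "$rc"
}
```

Running `unzip_all` as root from one terminal should leave the same /soft/database and /soft/grid directories as the two-window approach.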

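1.5 Pre-installation checks

1.5.1 Install the cvuqdisk package

Before installing 12cR1 Grid Infrastructure for RAC, you normally run the Cluster Verification Utility (CVU), which performs system checks to confirm that the current configuration meets the installation requirements. CVU needs the cvuqdisk package to discover shared disks.

First check whether the cvuqdisk package is installed: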

|

rpm -qa cvuqdisk |

If it is not installed, install it on both nodes with the following commands:

|

export CVUQDISK_GRP=oinstall
cd /soft/grid/rpm/
rpm -ivh cvuqdisk-1.0.9-1.rpm |

|

[root@raclhr-12cR1-N1 soft]# cd /soft/grid/
[root@raclhr-12cR1-N1 grid]# ll
total 80
drwxr-xr-x 4 root root 4096 Jan 16 17:04 install
-rwxr-xr-x 1 root root 34132 Jul 11 2014 readme.html
drwxrwxr-x 2 root root 4096 Jul 7 2014 response
drwxr-xr-x 2 root root 4096 Jul 7 2014 rpm
-rwxr-xr-x 1 root root 5085 Dec 20 2013 runcluvfy.sh
-rwxr-xr-x 1 root root 8534 Jul 7 2014 runInstaller
drwxrwxr-x 2 root root 4096 Jul 7 2014 sshsetup
drwxr-xr-x 14 root root 4096 Jul 7 2014 stage
-rwxr-xr-x 1 root root 500 Feb 7 2013 welcome.html
[root@raclhr-12cR1-N1 grid]# cd rpm
[root@raclhr-12cR1-N1 rpm]# ll
total 12
-rwxr-xr-x 1 root root 8976 Jul 1 2014 cvuqdisk-1.0.9-1.rpm
[root@raclhr-12cR1-N1 rpm]# export CVUQDISK_GRP=oinstall
[root@raclhr-12cR1-N1 rpm]# cd /soft/grid/rpm/
[root@raclhr-12cR1-N1 rpm]# rpm -ivh cvuqdisk-1.0.9-1.rpm
Preparing... ########################################### [100%]
1:cvuqdisk ########################################### [100%]
[root@raclhr-12cR1-N1 rpm]# rpm -qa cvuqdisk
cvuqdisk-1.0.9-1.x86_64
[root@raclhr-12cR1-N1 rpm]#
[root@raclhr-12cR1-N1 sshsetup]# ls -l /usr/sbin/cvuqdisk
-rwsr-xr-x 1 root oinstall 11920 Jul 1 2014 /usr/sbin/cvuqdisk
[root@raclhr-12cR1-N1 sshsetup]#

|

Copy the package to the second node and install it there (scp runs on node 1; export and rpm run on node 2):

|

# On node 1, in /soft/grid/rpm
scp cvuqdisk-1.0.9-1.rpm root@192.168.59.161:/tmp
# On node 2
export CVUQDISK_GRP=oinstall
rpm -ivh /tmp/cvuqdisk-1.0.9-1.rpm |
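If more than one remote node has to be prepared, the copy-and-install steps can be looped from node 1. This is a sketch under assumptions: root can log in to each node over ssh (a password prompt is expected, since SSH equivalence is not set up yet), and RUN is a hypothetical dry-run hook (RUN=echo prints the commands instead of running them).

```bash
#!/usr/bin/env bash
# Sketch: copies the cvuqdisk RPM to each node given as an argument and
# installs it there with CVUQDISK_GRP=oinstall.
# RUN and RPM are assumed override hooks; RUN is intentionally unquoted so
# that an empty value disappears.
RUN=${RUN:-}
RPM=${RPM:-/soft/grid/rpm/cvuqdisk-1.0.9-1.rpm}

install_cvuqdisk_remote() {
    local node
    for node in "$@"; do
        $RUN scp "$RPM" "root@${node}:/tmp/" || return 1
        $RUN ssh "root@${node}" "export CVUQDISK_GRP=oinstall; rpm -ivh /tmp/${RPM##*/}" || return 1
    done
}
```

For this article's second node: `install_cvuqdisk_remote 192.168.59.161`; afterwards `rpm -qa cvuqdisk` on that node should list the package.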

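1.5.2 Configure SSH user equivalence

User equivalence means that the software owner (for example the oracle user) can connect from one node to the other without entering a password. The GI and DB installers both install on one node first and then copy the installed files automatically to the same directories on the remote node. This copy runs in the background, where the user has no chance to enter a password, so equivalence must be configured.

Although the Oracle installer can configure SSH equivalence automatically during installation, it is still recommended to configure it manually beforehand.

Create links for ssh and scp. First check whether they exist: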

ls -l /usr/local/bin/ssh

ls -l /usr/local/bin/scp

If they do not exist, create them:

|

/bin/ln -s /usr/bin/ssh /usr/local/bin/ssh
/bin/ln -s /usr/bin/scp /usr/local/bin/scp |

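Note also that even with SSH configured, connections are often refused. In most cases the cause is one of three files: /etc/ssh/sshd_config, /etc/hosts.allow and /etc/hosts.deny.

1. In /etc/ssh/sshd_config, make sure the groups of the grid and oracle users are allowed (an AllowGroups line permits logins only for the groups it lists):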

|

AllowGroups sysadmin asmdba oinstall |

2. Edit /etc/hosts.deny with vi: comment out sshd:ALL with #, or change it to sshd:ALL EXCEPT followed by the public and private IPs of both nodes, separated by commas, for example:

|

sshd : ALL EXCEPT 192.168.59.128,192.168.59.129,10.10.10.5,10.10.10.6 |

Alternatively, edit /etc/hosts.allow and add sshd:ALL, or:

|

sshd:192.168.59.128,192.168.59.129,10.10.10.5,10.10.10.6 |

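If the two files conflict, /etc/hosts.allow takes precedence: TCP wrappers grant access as soon as a rule in hosts.allow matches, and consult hosts.deny only when nothing in hosts.allow matches.

3. Restart the sshd service: /etc/init.d/sshd restart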
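The comma-separated client list in these TCP wrappers rules is easy to get wrong. The helper below is a hypothetical sketch that builds either form from a list of IP addresses; `-x` selects the hosts.deny style `ALL EXCEPT` form.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints a TCP wrappers rule for sshd.
#   sshd_rule ip1 ip2 ...     -> "sshd : ip1,ip2,..."            (hosts.allow)
#   sshd_rule -x ip1 ip2 ...  -> "sshd : ALL EXCEPT ip1,ip2,..." (hosts.deny)
sshd_rule() {
    local prefix=""
    if [ "$1" = "-x" ]; then
        prefix="ALL EXCEPT "
        shift
    fi
    local IFS=,   # makes "$*" join the addresses with commas
    printf 'sshd : %s%s\n' "$prefix" "$*"
}
```

With this article's address plan: `sshd_rule 192.168.59.160 192.168.59.161 192.168.2.100 192.168.2.101 >> /etc/hosts.allow`.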

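1.5.2.1 Method 1: manual configuration

Configure SSH separately for the grid user and the oracle user. (The listings below come from another environment whose nodes are named ZFLHRDB1 and ZFLHRDB2; substitute your own host names.)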

----------------------------------------------------------------------------------

|

[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB1 date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2 date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB1-priv date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2-priv date

[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB1 date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB2 date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB1-priv date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB2-priv date |

-----------------------------------------------------------------------------------

|

[oracle@ZFLHRDB1 ~]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
[oracle@ZFLHRDB1 ~]$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys   -> enter the ZFLHRDB2 password
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys   -> enter the ZFLHRDB2 password
[oracle@ZFLHRDB1 ~]$ scp ~/.ssh/authorized_keys ZFLHRDB2:~/.ssh/authorized_keys   -> enter the ZFLHRDB2 password

|

-----------------------------------------------------------------------------------

Test connectivity between the two nodes:

|

[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB1 date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2 date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB1-priv date
[oracle@ZFLHRDB1 ~]$ ssh ZFLHRDB2-priv date

[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB1 date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB2 date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB1-priv date
[oracle@ZFLHRDB2 ~]$ ssh ZFLHRDB2-priv date

|

If, on the second run, the commands succeed without prompting for a password, SSH equivalence is configured correctly.
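The connectivity test can also be scripted. The sketch below uses ssh's BatchMode=yes option so that a missing key makes the command fail instead of prompting; run it after the first manual round of `ssh ... date`, which records the host keys in known_hosts. SSH and HOSTS are hypothetical override hooks, and the default host names follow this article's IP plan.

```bash
#!/usr/bin/env bash
# Sketch: checks that every host name answers "ssh <host> date" without a
# password prompt. BatchMode=yes makes ssh fail rather than ask for one.
# SSH and HOSTS are assumed override hooks.
SSH=${SSH:-ssh}
HOSTS=${HOSTS:-"raclhr-12cR1-N1 raclhr-12cR1-N2 raclhr-12cR1-N1-priv raclhr-12cR1-N2-priv"}

check_equivalence() {
    local host failed=0
    for host in $HOSTS; do
        if "$SSH" -o BatchMode=yes "$host" date >/dev/null 2>&1; then
            echo "OK   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    return "$failed"
}
```

Run `check_equivalence` as grid and again as oracle on each node; every line should start with OK.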

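1.5.2.2 Method 2: set up equivalence quickly with sshUserSetup.sh (recommended)

sshUserSetup.sh is located in the sshsetup directory of the unzipped GI installation media. The two commands below need to be run on only one node, as root: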

|

./sshUserSetup.sh -user grid -hosts "raclhr-12cR1-N2 raclhr-12cR1-N1" -advanced -exverify -confirm
./sshUserSetup.sh -user oracle -hosts "raclhr-12cR1-N2 raclhr-12cR1-N1" -advanced -exverify -confirm |

Answer yes, enter the passwords when prompted, and press Enter through the remaining prompts.
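The two invocations differ only in the user name, so they can also be run in a loop. This sketch assumes the script location /soft/grid/sshsetup (as in the listing that follows) and the flags -advanced -exverify -confirm; RUN=echo is a hypothetical dry-run hook.

```bash
#!/usr/bin/env bash
# Sketch: runs sshUserSetup.sh for the grid and oracle users in turn.
# RUN, SETUP and NODES are assumed override hooks.
RUN=${RUN:-}
SETUP=${SETUP:-/soft/grid/sshsetup/sshUserSetup.sh}
NODES=${NODES:-"raclhr-12cR1-N2 raclhr-12cR1-N1"}

setup_all_owners() {
    local user
    for user in grid oracle; do
        $RUN "$SETUP" -user "$user" -hosts "$NODES" -advanced -exverify -confirm || return 1
    done
}
```

Running `RUN=echo setup_all_owners` first shows the two commands that will be executed.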

|

[oracle@raclhr-12cR1-N1 grid]$ ll
total 80
drwxr-xr-x 4 root root 4096 Jan 16 17:04 install
-rwxr-xr-x 1 root root 34132 Jul 11 2014 readme.html
drwxrwxr-x 2 root root 4096 Jul 7 2014 response
drwxr-xr-x 2 root root 4096 Jul 7 2014 rpm
-rwxr-xr-x 1 root root 5085 Dec 20 2013 runcluvfy.sh
-rwxr-xr-x 1 root root 8534 Jul 7 2014 runInstaller
drwxrwxr-x 2 root root 4096 Jul 7 2014 sshsetup
drwxr-xr-x 14 root root 4096 Jul 7 2014 stage
-rwxr-xr-x 1 root root 500 Feb 7 2013 welcome.html
[oracle@raclhr-12cR1-N1 grid]$ cd sshsetup/
[oracle@raclhr-12cR1-N1 sshsetup]$ ll
total 32
-rwxr-xr-x 1 root root 32334 Jun 7 2013 sshUserSetup.sh
[oracle@raclhr-12cR1-N1 sshsetup]$ pwd
/soft/grid/sshsetup |

|

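# As grid and again as oracle: none of these should prompt for a password
ssh raclhr-12cR1-N1 date
ssh raclhr-12cR1-N2 date
ssh raclhr-12cR1-N1-priv date
ssh raclhr-12cR1-N2-priv date
# Start a shell under ssh-agent and load the user's keys into it
ssh-agent $SHELL
ssh-add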

|

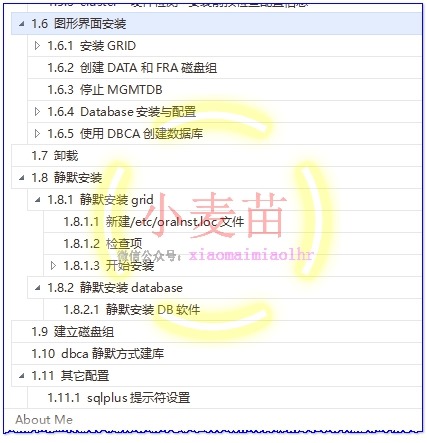

1.5.3 Cluster hardware check: verifying the configuration before installation

Use Cluster Verification Utility (cvu)

Before installing Oracle Clusterware, use CVU to ensure that your cluster is prepared for an installation:

Oracle provides CVU to perform system checks in preparation for an installation, patch updates, or other system changes. In addition, CVU can generate fixup scripts that can change many kernel parameters to at least the minimum settings required for a successful installation.

Using CVU can help system administrators, storage administrators, and DBA to ensure that everyone has completed the system configuration and preinstallation steps.

./runcluvfy.sh -help

./runcluvfy.sh stage -pre crsinst -n rac1,rac2 -fixup -verbose

Install the operating system package cvuqdisk to both Oracle RAC nodes. Without cvuqdisk, Cluster Verification Utility cannot discover shared disks, and you will receive the error message "Package cvuqdisk not installed" when the Cluster Verification Utility is run (either manually or at the end of the Oracle grid infrastructure installation). Use the cvuqdisk RPM for your hardware architecture (for example, x86_64 or i386). The cvuqdisk RPM can be found on the Oracle grid infrastructure installation media in the rpm directory. In this article, the Oracle grid infrastructure media was extracted to the /soft/grid directory on raclhr-12cR1-N1, so the RPM is in /soft/grid/rpm.

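The check only needs to be run on one of the nodes.

Before installing Grid Infrastructure, it is recommended to check the CRS pre-installation environment with CVU (Cluster Verification Utility). Run as the grid user: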

|

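export CVUQDISK_GRP=oinstall
# From the unzipped installation media (rac1,rac2 are example node names)
./runcluvfy.sh stage -pre crsinst -n rac1,rac2 -fixup -verbose
# Equivalent check with cluvfy from an existing Grid home
$ORACLE_HOME/bin/cluvfy stage -pre crsinst -n all -verbose -fixup |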

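Checks that do not pass are reported as failed. Some failures can be repaired with the generated fixup scripts, some have to be fixed manually depending on the situation, and some can be ignored.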
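With -verbose the output is long, so it can help to save it and list only the failed checks. The filter below is a hypothetical sketch that matches the two result formats seen in this section (`Result: ... failed` and `Check for ... failed`); other cluvfy messages may be worded differently and would not be caught.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints the overall failed results from a saved cluvfy
# log (file argument) or from stdin when no file is given.
failed_checks() {
    grep -E '^(Result: .*|Check for .*) failed$' ${1:+"$1"}
}
```

Usage: `./runcluvfy.sh stage -pre crsinst -n raclhr-12cR1-N1,raclhr-12cR1-N2 -fixup -verbose > /tmp/cluvfy.log 2>&1`, then `failed_checks /tmp/cluvfy.log`.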

|

[root@raclhr-12cR1-N1 grid]# su - grid
[grid@raclhr-12cR1-N1 ~]$ cd /soft/grid/
[grid@raclhr-12cR1-N1 grid]$ ./runcluvfy.sh stage -pre crsinst -n raclhr-12cR1-N1,raclhr-12cR1-N2 -fixup -verbose |

The author's environment reported the following three failures:

|

Check: Total memory
Node Name         Available                 Required                  Status
------------      ------------------------  ------------------------  ----------
raclhr-12cr1-n2   1.9518GB (2046592.0KB)    4GB (4194304.0KB)         failed
raclhr-12cr1-n1   1.9518GB (2046592.0KB)    4GB (4194304.0KB)         failed
Result: Total memory check failed

Check: Swap space
Node Name         Available                 Required                  Status
------------      ------------------------  ------------------------  ----------
raclhr-12cr1-n2   2GB (2097144.0KB)         2.9277GB (3069888.0KB)    failed
raclhr-12cr1-n1   2GB (2097144.0KB)         2.9277GB (3069888.0KB)    failed
Result: Swap space check failed

Checking integrity of file "/etc/resolv.conf" across nodes
PRVF-5600 : On node "raclhr-12cr1-n2" The following lines in file "/etc/resolv.conf" could not be parsed as they are not in proper format: raclhr-12cr1-n2
PRVF-5600 : On node "raclhr-12cr1-n1" The following lines in file "/etc/resolv.conf" could not be parsed as they are not in proper format: raclhr-12cr1-n1
Check for integrity of file "/etc/resolv.conf" failed

|

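All three can be ignored in this test environment: the memory and swap shortfalls are acceptable for a lab VM, and the resolv.conf failure only concerns lines in that file that contain bare host names.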
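The swap requirement in the output follows from the RAM size. The sketch below encodes the swap sizing rule as it is commonly quoted from the 12c Grid Infrastructure installation guide for Linux (RAM between 1 GB and 2 GB: 1.5 times RAM; between 2 GB and 16 GB: equal to RAM; above 16 GB: 16 GB). Treat the rule, and the handling of exactly 2 GB, as assumptions to verify against the guide for your release. For the 2046592 KB of RAM above it yields 3069888 KB, the same value cluvfy reports as required.

```bash
#!/usr/bin/env bash
# Sketch of the swap sizing rule (assumption, check the installation guide):
#   RAM < 2 GB        -> 1.5 x RAM
#   2 GB .. 16 GB     -> equal to RAM
#   RAM > 16 GB       -> 16 GB
# Input and output are in KB, as in /proc/meminfo and the cluvfy output.
required_swap_kb() {
    local ram_kb=$1
    if [ "$ram_kb" -lt 2097152 ]; then
        echo $(( ram_kb * 3 / 2 ))
    elif [ "$ram_kb" -le 16777216 ]; then
        echo "$ram_kb"
    else
        echo 16777216
    fi
}
```

On a node: `required_swap_kb "$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)"` prints the required swap in KB, which can be compared with the SwapTotal line of /proc/meminfo.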

About Me

...............................................................................................................................

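● Author: 小麦苗 (Xiaomaimiao), focused solely on database technology, with an emphasis on applying it in practice

● This article is published simultaneously on itpub (http://blog.itpub.net/26736162), cnblogs (http://www.cnblogs.com/lhrbest) and the author's WeChat official account (xiaomaimiaolhr)

● itpub URL of this article: http://blog.itpub.net/26736162/viewspace-2132768/

● cnblogs URL of this article: http://www.cnblogs.com/lhrbest/p/6337496.html

● PDF version and 小麦苗's cloud drive: http://blog.itpub.net/26736162/viewspace-1624453/

● QQ group: 230161599; WeChat group: by private message

● To contact me, add QQ friend 642808185 and state the reason for adding

● Written at Agricultural Bank of China, 2017-01-12 08:00 ~ 2016-01-21 24:00

● The content comes from 小麦苗's study notes, partly compiled from the internet; apologies for any infringement or inaccuracy

● All rights reserved; you are welcome to share this article, but please keep the source when reposting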

...............................................................................................................................



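- Copyright notice: this article was reposted from the internet, and its source is given in the article (with some exceptions). In case of infringement, please contact the site owner to have it removed. Thank you.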
