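OpenCV Development on Android [4]: Image Filtering in Detail

Preface

The previous article, opencv-android-图像平滑处理 (image smoothing with OpenCV on Android), briefly introduced several ways to smooth, that is, blur, an image using a few simple filters. All of them are image filtering operations.

OpenCV provides the imgproc module for image processing, covering image filtering, geometric transformations, feature detection, and more. This article takes a comprehensive look at OpenCV's image filtering functions.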

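Overview of Filtering Functions

OpenCV's image filtering API mainly consists of the following functions, which perform linear and non-linear filtering operations on 2D images.

- bilateralFilter: applies a bilateral filter to an image.

- blur: blurs an image using the normalized box filter.

- boxFilter: blurs an image using the box filter.

- buildPyramid: constructs the Gaussian pyramid for an image.

- dilate: dilates an image using a specific structuring element.

- erode: erodes an image using a specific structuring element.

- filter2D: convolves an image with a kernel.

- GaussianBlur: blurs an image using a Gaussian filter.

- getDerivKernels: returns the filter coefficients for computing spatial image derivatives.

- getGaborKernel: returns Gabor filter coefficients.

- getGaussianKernel: returns Gaussian filter coefficients.

- getStructuringElement: returns a structuring element of the specified size and shape for morphological operations.

- Laplacian: calculates the Laplacian of an image.

- medianBlur: blurs an image using the median filter.

- morphologyDefaultBorderValue: returns the "magic" border value for erosion and dilation. For dilation it is automatically converted to Scalar::all(-DBL_MAX).

- morphologyEx: performs advanced morphological transformations.

- pyrDown: blurs an image and downsamples it.

- pyrMeanShiftFiltering: performs the initial step of mean-shift segmentation of an image.

- pyrUp: upsamples an image and then blurs it.

- Scharr: calculates the first x- or y- image derivative using the Scharr operator.

- sepFilter2D: applies a separable linear filter to an image.

- Sobel: calculates the first, second, third, or mixed image derivatives using the Sobel operator.

- spatialGradient: calculates the first-order image derivative in both x and y using the Sobel operator.

- sqrBoxFilter: calculates the normalized sum of squares of the pixel values overlapping the filter.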

The functions above can be roughly grouped by purpose.

- Blurring: blur, bilateralFilter, boxFilter, GaussianBlur, medianBlur, sqrBoxFilter

- Morphological operations: dilate, erode, morphologyDefaultBorderValue, morphologyEx

- Image pyramids: buildPyramid, pyrDown, pyrUp, pyrMeanShiftFiltering

- Convolution: filter2D, sepFilter2D

- Kernels and structuring elements: getDerivKernels, getGaborKernel, getGaussianKernel, getStructuringElement

- Operators: Laplacian

- Derivatives: Scharr, Sobel, spatialGradient

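For each pixel location (x,y) in the source image (normally rectangular), the pixel's neighborhood is used to compute the resulting pixel value. With a linear filter, the result is a weighted sum of the neighborhood pixel values; with a morphological operation, it is the maximum or minimum of them. The result is stored in the output image at the same location (x,y), so the output image has the same size as the source image. The functions below generally support multi-channel arrays and process each channel independently, so the output image also has the same number of channels as the source image.

In addition, unlike simple arithmetic functions, these functions need to extrapolate the values of some non-existent pixels. For example, when smoothing an image with a 3x3 Gaussian filter, computing the leftmost pixel of each row requires the pixels to its left, which lie outside the image. Those missing pixels can be treated as zero, or made equal to the leftmost pixel. OpenCV lets you choose the extrapolation method through the borderType parameter.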
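
The extrapolation idea described above can be sketched in plain Java without OpenCV. The class and method names below (BorderSketch, replicate, reflect101, sampleConstant) are hypothetical helpers for illustration, not OpenCV API. They mirror three common modes: repeating the edge pixel, mirroring around the edge pixel (the behavior of BORDER_DEFAULT, which is BORDER_REFLECT_101), and filling with a constant.

```java
// Sketch: mapping an out-of-range coordinate back into a row of pixels.
// These helpers are illustrative only; they are not part of OpenCV.
class BorderSketch {
    // Replicate: aaaaaa|abcdefgh|hhhhhhh
    static int replicate(int p, int len) {
        return Math.max(0, Math.min(len - 1, p));
    }

    // Reflect-101: gfedcb|abcdefgh|gfedcba (the edge pixel is not repeated)
    static int reflect101(int p, int len) {
        if (len == 1) return 0;
        int period = 2 * (len - 1);
        int m = ((p % period) + period) % period; // wrap into [0, period)
        return m < len ? m : period - m;
    }

    // Constant border: out-of-range positions read as a fixed fill value.
    static double sampleConstant(double[] row, int p, double fill) {
        return (p < 0 || p >= row.length) ? fill : row[p];
    }

    public static void main(String[] args) {
        double[] row = {10, 20, 30, 40, 50};
        System.out.println("replicate:  " + row[replicate(-1, row.length)]);
        System.out.println("reflect101: " + row[reflect101(-1, row.length)]);
        System.out.println("constant:   " + sampleConstant(row, -1, 0.0));
    }
}
```

With a 5-pixel row, position -1 maps to index 0 under replicate and to index 1 under reflect-101, which is why the two modes give different results near the image edge.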

Function Details

bilateralFilter

Method declaration:

c++

void cv::bilateralFilter ( InputArray src,

OutputArray dst,

int d,

double sigmaColor,

double sigmaSpace,

int borderType = BORDER_DEFAULT

)


java

public static void bilateralFilter(Mat src, Mat dst, int d, double sigmaColor, double sigmaSpace)

{

bilateralFilter_1(src.nativeObj, dst.nativeObj, d, sigmaColor, sigmaSpace);

return;

}

private static native void bilateralFilter_0(long src_nativeObj, long dst_nativeObj, int d, double sigmaColor, double sigmaSpace, int borderType);

private static native void bilateralFilter_1(long src_nativeObj, long dst_nativeObj, int d, double sigmaColor, double sigmaSpace);

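Applies a bilateral filter to an image. It reduces noise well while keeping edges fairly sharp, but it is slower than most other filters.

This filter does not work in place.

Sigma values: for simplicity, you can set both sigma values to the same value. If they are small (<10), the filter has little visible effect; if they are large (>150), the effect is strong and the image looks cartoonish.

Filter size (d): large filters (d>5) are slow, so d=5 is recommended for real-time applications, and perhaps d=9 for offline applications that need heavy noise filtering.

- src: source image, 8-bit or floating-point, with 1 or 3 channels.

- dst: destination image of the same size and type as the source image.

- d: diameter of each pixel neighborhood used during filtering. If it is non-positive, it is computed from sigmaSpace.

- sigmaColor: filter sigma in the color space. A larger value means that colors farther apart within the pixel neighborhood are mixed together, resulting in larger areas of semi-equal color.

- sigmaSpace: filter sigma in the coordinate space. A larger value means that pixels farther apart influence each other as long as their colors are close enough (see sigmaColor). When d>0, d specifies the neighborhood size regardless of sigmaSpace; otherwise d is proportional to sigmaSpace.

- borderType: extrapolation mode for pixels outside the image border.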
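
To make the roles of sigmaColor and sigmaSpace concrete, here is a minimal 1D sketch in plain Java. It is not OpenCV's implementation: the class BilateralSketch and the method bilateral1D are hypothetical, the signal is one-dimensional, and samples outside the signal are simply skipped. Each neighbor is weighted by a spatial Gaussian (distance) multiplied by a range Gaussian (intensity difference).

```java
// Sketch: a 1D bilateral filter, assuming Gaussian weights in both
// the spatial and the intensity domain. Illustrative only.
class BilateralSketch {
    static double[] bilateral1D(double[] src, int radius,
                                double sigmaColor, double sigmaSpace) {
        double[] dst = new double[src.length];
        for (int i = 0; i < src.length; i++) {
            double weightedSum = 0.0;
            double weightTotal = 0.0;
            for (int k = -radius; k <= radius; k++) {
                int j = i + k;
                if (j < 0 || j >= src.length) continue; // skip samples outside the signal
                double diff = src[j] - src[i];
                // Spatial weight falls off with distance, range weight with intensity difference.
                double w = Math.exp(-(k * k) / (2 * sigmaSpace * sigmaSpace))
                         * Math.exp(-(diff * diff) / (2 * sigmaColor * sigmaColor));
                weightedSum += w * src[j];
                weightTotal += w;
            }
            dst[i] = weightedSum / weightTotal; // weightTotal >= 1 because k = 0 has weight 1
        }
        return dst;
    }

    public static void main(String[] args) {
        double[] step = {0, 0, 0, 100, 100, 100};
        double[] out = bilateral1D(step, 2, 10.0, 2.0);
        for (double v : out) System.out.printf("%.3f ", v);
        System.out.println();
    }
}
```

With a small sigmaColor, samples on the other side of a step edge get a near-zero weight, so the edge survives while small fluctuations on either side are averaged out.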

blur

Method declaration:

c++

void cv::blur ( InputArray src,

OutputArray dst,

Size ksize,

Point anchor = Point(-1,-1),

int borderType = BORDER_DEFAULT

)


java

public static void blur(Mat src, Mat dst, Size ksize)

{

blur_2(src.nativeObj, dst.nativeObj, ksize.width, ksize.height);

return;

}

private static native void blur_0(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height, double anchor_x, double anchor_y, int borderType);

private static native void blur_1(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height, double anchor_x, double anchor_y);

private static native void blur_2(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height);

复制代码

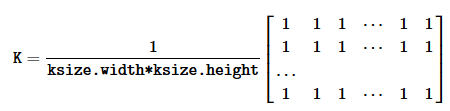

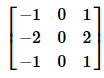

使用归一化块滤波器模糊图像,方法平滑图像使用的kernel如下:

调用方法 blur(src, dst, ksize, anchor, borderType)

等价于调用方法 boxFilter(src, dst, src.type(), anchor, true, borderType)

- src:输入图像,可以有任意数量的通道,各通道会被独立的处理,但是 depth 要求为 CV_8U,CV_16U,CV_16S,CV_32F,CV_64F。

- dest:输出图像,和输入图像有一样的大小和类型。

- ksize:模糊核(kernel)大小。

- anchor:锚点,默认是Point(-1,-1),表示锚点位于核的中心。

- borderType:图像边界外像素值的外推模式。
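
The normalized box filter can be sketched on a plain 2D array as follows. This is an illustration only: BoxBlurSketch and its methods are hypothetical names, the anchor is assumed to be the kernel center, and out-of-range pixels are clamped to the edge (replicate), which is a simplification compared with OpenCV's default reflect-101 border.

```java
// Sketch: normalized box filter on a 2D array, assuming a centered anchor
// and a replicate border. Illustrative only, not OpenCV's implementation.
class BoxBlurSketch {
    static int clamp(int p, int len) {
        return Math.max(0, Math.min(len - 1, p));
    }

    static double[][] boxBlur(double[][] src, int kw, int kh) {
        int rows = src.length, cols = src[0].length;
        int ax = kw / 2, ay = kh / 2; // anchor at the kernel center
        double[][] dst = new double[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                double sum = 0.0;
                for (int dy = 0; dy < kh; dy++) {
                    for (int dx = 0; dx < kw; dx++) {
                        sum += src[clamp(y + dy - ay, rows)][clamp(x + dx - ax, cols)];
                    }
                }
                dst[y][x] = sum / (kw * kh); // normalize by the kernel area
            }
        }
        return dst;
    }

    public static void main(String[] args) {
        double[][] img = {{0, 0, 0}, {0, 9, 0}, {0, 0, 0}};
        double[][] out = boxBlur(img, 3, 3);
        System.out.println("center after blur: " + out[1][1]);
    }
}
```

Dropping the division by kw * kh gives the unnormalized variant that boxFilter exposes through its normalize flag.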

boxFilter

Method declaration:

c++

void cv::boxFilter ( InputArray src,

OutputArray dst,

int ddepth,

Size ksize,

Point anchor = Point(-1,-1),

bool normalize = true,

int borderType = BORDER_DEFAULT

)


java

//

// C++: void cv::boxFilter(Mat src, Mat& dst, int ddepth, Size ksize, Point anchor = Point(-1,-1), bool normalize = true, int borderType = BORDER_DEFAULT)

//

//javadoc: boxFilter(src, dst, ddepth, ksize, anchor, normalize, borderType)

public static void boxFilter(Mat src, Mat dst, int ddepth, Size ksize, Point anchor, boolean normalize, int borderType)

{

boxFilter_0(src.nativeObj, dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y, normalize, borderType);

return;

}

//javadoc: boxFilter(src, dst, ddepth, ksize, anchor, normalize)

public static void boxFilter(Mat src, Mat dst, int ddepth, Size ksize, Point anchor, boolean normalize)

{

boxFilter_1(src.nativeObj, dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y, normalize);

return;

}

//javadoc: boxFilter(src, dst, ddepth, ksize, anchor)

public static void boxFilter(Mat src, Mat dst, int ddepth, Size ksize, Point anchor)

{

boxFilter_2(src.nativeObj, dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y);

return;

}

//javadoc: boxFilter(src, dst, ddepth, ksize)

public static void boxFilter(Mat src, Mat dst, int ddepth, Size ksize)

{

boxFilter_3(src.nativeObj, dst.nativeObj, ddepth, ksize.width, ksize.height);

return;

}

// C++: void cv::boxFilter(Mat src, Mat& dst, int ddepth, Size ksize, Point anchor = Point(-1,-1), bool normalize = true, int borderType = BORDER_DEFAULT)

private static native void boxFilter_0(long src_nativeObj, long dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y, boolean normalize, int borderType);

private static native void boxFilter_1(long src_nativeObj, long dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y, boolean normalize);

private static native void boxFilter_2(long src_nativeObj, long dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y);

private static native void boxFilter_3(long src_nativeObj, long dst_nativeObj, int ddepth, double ksize_width, double ksize_height);

复制代码

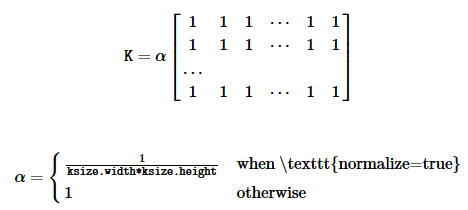

使用 box 滤波器模糊图像,使用的核如下:

非归一化的box滤波器在计算像素邻域的各种积分特性很有用,例如图像导数的协方差矩阵(用于密集光流算法等)。如果计算可变大小窗口里的像素总和,需要使用积分。

- src:输入图像。

- dst:输入图像,核输入图像有相同的大小和类型。

- ddepth:输出图像的depth(值为-1表示使用src.depth())。

- ksize:模糊核(kernel)大小。

- anchor:锚点,默认是Point(-1,-1),表示锚点位于核的中心。

- mormalize:标识,指定kernel是否按其区域归一化。

- borderType:图像边界外像素值的外推模式。

buildPyramid

只有C++的实现,暂未提供其他语言接口。

方法声明:

c++

void cv::buildPyramid ( InputArray src,

OutputArrayOfArrays dst,

int maxlevel,

int borderType = BORDER_DEFAULT

)


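The function constructs a vector of images and builds the Gaussian pyramid by recursively applying pyrDown (downsampling) to the previously built pyramid layers, starting from dst[0] == src.

- src: source image. It must satisfy the supported-type requirements of pyrDown.

- dst: destination vector of maxlevel+1 images of the same type as the source image. dst[0] is the same as the source image, dst[1] is the next pyramid layer (smoothed and downsized), and so on.

- maxlevel: 0-based index of the last pyramid layer. It must be non-negative.

- borderType: extrapolation mode for pixels outside the image border.

dilate

Method declaration: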

c++

void cv::dilate ( InputArray src,

OutputArray dst,

InputArray kernel,

Point anchor = Point(-1,-1),

int iterations = 1,

int borderType = BORDER_CONSTANT,

const Scalar & borderValue = morphologyDefaultBorderValue()

)


java

public static void dilate(Mat src, Mat dst, Mat kernel)

{

dilate_4(src.nativeObj, dst.nativeObj, kernel.nativeObj);

return;

}

// C++: void cv::dilate(Mat src, Mat& dst, Mat kernel, Point anchor = Point(-1,-1), int iterations = 1, int borderType = BORDER_CONSTANT, Scalar borderValue = morphologyDefaultBorderValue())

private static native void dilate_0(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType, double borderValue_val0, double borderValue_val1, double borderValue_val2, double borderValue_val3);

private static native void dilate_1(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType);

private static native void dilate_2(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations);

private static native void dilate_3(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y);

private static native void dilate_4(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj);

复制代码

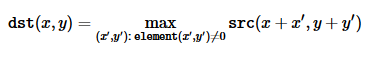

使用指定的 structuring element 膨胀图像,structuring element用来确定采用最大值的像素邻域的形状。

该方法支持就地模式。膨胀可以多次(迭代)应用。对于多通道图像,每个通道被单独处理。

- src:输入图像,通道数可以是任意的,但是 depth 需要是 CV_8U,CV_16U,CV_16S,CV_32F,CV64F 中的一种。

- dst:输出图像,和输入图像具有相同的大小和类型。

- kernel:膨胀用到的 structuring element,如果 element = Mat(),将会使用 3x3 的矩形 structuring element。 kernel 可以使用 getStructuringElement 方法创建。

- anchor:锚点,默认是Point(-1,-1),表示锚点位于核的中心。

- iterations:膨胀应用的次数。

- borderType:图像边界外像素值的外推模式。

- borderValue:当边界为常量时的边界值。

erode

方法声明

c++

void cv::erode ( InputArray src,

OutputArray dst,

InputArray kernel,

Point anchor = Point(-1,-1),

int iterations = 1,

int borderType = BORDER_CONSTANT,

const Scalar & borderValue = morphologyDefaultBorderValue()

)


java

public static void erode(Mat src, Mat dst, Mat kernel)

{

erode_4(src.nativeObj, dst.nativeObj, kernel.nativeObj);

return;

}

// C++: void cv::erode(Mat src, Mat& dst, Mat kernel, Point anchor = Point(-1,-1), int iterations = 1, int borderType = BORDER_CONSTANT, Scalar borderValue = morphologyDefaultBorderValue())

private static native void erode_0(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType, double borderValue_val0, double borderValue_val1, double borderValue_val2, double borderValue_val3);

private static native void erode_1(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType);

private static native void erode_2(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations);

private static native void erode_3(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj, double anchor_x, double anchor_y);

private static native void erode_4(long src_nativeObj, long dst_nativeObj, long kernel_nativeObj);

复制代码

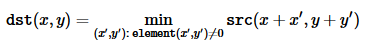

使用指定的 structuring element 腐蚀图像,structuring element用来确定采用最小值的像素邻域的形状。

该方法支持就地模式。腐蚀可以多次(迭代)应用。对于多通道图像,每个通道被单独处理。

- src:输入图像,通道数可以是任意的,但是 depth 需要是 CV_8U,CV_16U,CV_16S,CV_32F,CV64F 中的一种。

- dst:输出图像,和输入图像具有相同的大小和类型。

- kernel:腐蚀用到的 structuring element,如果 element = Mat(),将会使用 3x3 的矩形 structuring element。 kernel 可以使用 getStructuringElement 方法创建。

- anchor:锚点,默认是Point(-1,-1),表示锚点位于核的中心。

- iterations:膨胀应用的次数。

- borderType:图像边界外像素值的外推模式。

- borderValue:当边界为常量时的边界值。
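
Dilation and erosion with a 3x3 rectangular structuring element can be sketched on a plain 2D array as a neighborhood maximum or minimum. MorphSketch and morph3x3 are hypothetical names used only for this illustration. Positions outside the image are skipped, which is an assumption that matches the effect of a border value that can never win the max (dilation) or min (erosion).

```java
// Sketch: 3x3 rectangular dilation/erosion on a 2D array. Illustrative only.
class MorphSketch {
    // dilate == true takes the neighborhood maximum, false takes the minimum.
    static int[][] morph3x3(int[][] src, boolean dilate) {
        int rows = src.length, cols = src[0].length;
        int[][] dst = new int[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                int best = src[y][x];
                for (int dy = -1; dy <= 1; dy++) {
                    for (int dx = -1; dx <= 1; dx++) {
                        int yy = y + dy, xx = x + dx;
                        if (yy < 0 || yy >= rows || xx < 0 || xx >= cols) continue; // outside: ignored
                        best = dilate ? Math.max(best, src[yy][xx])
                                      : Math.min(best, src[yy][xx]);
                    }
                }
                dst[y][x] = best;
            }
        }
        return dst;
    }

    public static void main(String[] args) {
        int[][] img = new int[5][5];
        img[2][2] = 255; // a single bright pixel
        int[][] grown = morph3x3(img, true);
        for (int[] row : grown) System.out.println(java.util.Arrays.toString(row));
    }
}
```

A single bright pixel grows into a 3x3 block under dilation and disappears under erosion; eroding the dilated block shrinks it back to the single pixel.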

filter2D

Method declaration:

c++

void cv::filter2D ( InputArray src,

OutputArray dst,

int ddepth,

InputArray kernel,

Point anchor = Point(-1,-1),

double delta = 0,

int borderType = BORDER_DEFAULT

)


java

public static void filter2D(Mat src, Mat dst, int ddepth, Mat kernel)

{

filter2D_3(src.nativeObj, dst.nativeObj, ddepth, kernel.nativeObj);

return;

}

// C++: void cv::filter2D(Mat src, Mat& dst, int ddepth, Mat kernel, Point anchor = Point(-1,-1), double delta = 0, int borderType = BORDER_DEFAULT)

private static native void filter2D_0(long src_nativeObj, long dst_nativeObj, int ddepth, long kernel_nativeObj, double anchor_x, double anchor_y, double delta, int borderType);

private static native void filter2D_1(long src_nativeObj, long dst_nativeObj, int ddepth, long kernel_nativeObj, double anchor_x, double anchor_y, double delta);

private static native void filter2D_2(long src_nativeObj, long dst_nativeObj, int ddepth, long kernel_nativeObj, double anchor_x, double anchor_y);

private static native void filter2D_3(long src_nativeObj, long dst_nativeObj, int ddepth, long kernel_nativeObj);

复制代码

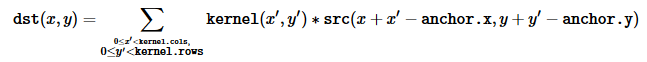

使用核(kernel)对图像进行卷积。

该方法可以对图像应用任意的线性滤波器,支持就地操作,当滤波范围部分超出图像时,该方法根据指定的像素外推模式向边界外像素插入值。

该方法实际上时计算的相关性,不是卷积,也就是说,内核不是围绕锚点镜像的,如果需要真正进行卷积,则使用 flip 对内核进行反转,并设置新的锚点为 (kernel.cols - anchor.x - 1, kernel.rows - anchor.y - 1)

:

针对特别大的核(11x11,或者更大),该方法使用基于 DFT-based 的算法;对于小核使用直接算法。

- src:输入图像

- dst:输出图像,核源图像具有相同的大小和通道数。

- ddepth:输出图像需要的depth。

- kernel:卷积核(或者说是相关核),单通道浮点型矩阵,如果向对不同的通道使用不同的kernel,使用split将图像分割成不同的颜色位面,并分别处理。

- anchor:内核锚点,指明内核过滤点的相对位置,锚点应该位于内核中,默认值是(-1,-1),表示锚点位于核的中心。

- delta:可选值,在存储到dst之前添加到过滤之后的像素值上。

- borderType:图像边界外像素值的外推模式。
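
The difference between correlation and convolution, and the anchor adjustment after flipping, can be seen in a 1D sketch. CorrelationSketch, correlate, and flip are hypothetical names for this illustration; samples outside the signal are assumed to be zero.

```java
// Sketch: 1D correlation versus convolution via a flipped kernel.
// Illustrative only; out-of-range samples are treated as zero.
class CorrelationSketch {
    // dst[i] = sum over k of kernel[k] * src[i + k - anchor]
    static double[] correlate(double[] src, double[] kernel, int anchor) {
        double[] dst = new double[src.length];
        for (int i = 0; i < src.length; i++) {
            double sum = 0.0;
            for (int k = 0; k < kernel.length; k++) {
                int j = i + k - anchor;
                if (j >= 0 && j < src.length) sum += kernel[k] * src[j];
            }
            dst[i] = sum;
        }
        return dst;
    }

    static double[] flip(double[] kernel) {
        double[] out = new double[kernel.length];
        for (int k = 0; k < kernel.length; k++) out[k] = kernel[kernel.length - 1 - k];
        return out;
    }

    public static void main(String[] args) {
        double[] impulse = {0, 0, 1, 0, 0};
        double[] kernel = {1, 2, 3};
        int anchor = 1;
        double[] corr = correlate(impulse, kernel, anchor);
        // True convolution: flip the kernel and mirror the anchor.
        double[] conv = correlate(impulse, flip(kernel), kernel.length - anchor - 1);
        System.out.println(java.util.Arrays.toString(corr));
        System.out.println(java.util.Arrays.toString(conv));
    }
}
```

Correlating an impulse leaves a mirrored copy of the kernel in the output, while the flipped-kernel call reproduces the kernel in its original order, which is the behavior expected from a convolution.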

GaussianBlur

Method declaration:

c++

void cv::GaussianBlur ( InputArray src,

OutputArray dst,

Size ksize,

double sigmaX,

double sigmaY = 0,

int borderType = BORDER_DEFAULT

)


java

public static void GaussianBlur(Mat src, Mat dst, Size ksize, double sigmaX, double sigmaY, int borderType)

{

GaussianBlur_0(src.nativeObj, dst.nativeObj, ksize.width, ksize.height, sigmaX, sigmaY, borderType);

return;

}

// C++: void cv::GaussianBlur(Mat src, Mat& dst, Size ksize, double sigmaX, double sigmaY = 0, int borderType = BORDER_DEFAULT)

private static native void GaussianBlur_0(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height, double sigmaX, double sigmaY, int borderType);

private static native void GaussianBlur_1(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height, double sigmaX, double sigmaY);

private static native void GaussianBlur_2(long src_nativeObj, long dst_nativeObj, double ksize_width, double ksize_height, double sigmaX);

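Blurs an image using a Gaussian filter. The function convolves the image with a Gaussian kernel and supports in-place mode.

- src: input image. The number of channels can be arbitrary, but the depth must be one of CV_8U, CV_16U, CV_16S, CV_32F, or CV_64F.

- dst: output image of the same size and type as the source image.

- ksize: Gaussian kernel size. The width and height can differ, but both must be positive and odd. Alternatively, they can be zero, in which case they are computed from sigma.

- sigmaX: Gaussian kernel standard deviation in the X direction.

- sigmaY: Gaussian kernel standard deviation in the Y direction. If sigmaY is zero, it is set equal to sigmaX; if both sigmas are zero, they are computed from the width and height of ksize, respectively. To fully control the result regardless of possible future changes to these semantics, specify all of ksize, sigmaX, and sigmaY.

- borderType: extrapolation mode for pixels outside the image border.

getGaborKernel()

Method declaration: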

c++

Mat cv::getGaborKernel ( Size ksize,

double sigma,

double theta,

double lambd,

double gamma,

double psi = CV_PI *0.5,

int ktype = CV_64F

)


java

//javadoc: getGaborKernel(ksize, sigma, theta, lambd, gamma, psi, ktype)

public static Mat getGaborKernel(Size ksize, double sigma, double theta, double lambd, double gamma, double psi, int ktype)

{

Mat retVal = new Mat(getGaborKernel_0(ksize.width, ksize.height, sigma, theta, lambd, gamma, psi, ktype));

return retVal;

}

//javadoc: getGaborKernel(ksize, sigma, theta, lambd, gamma, psi)

public static Mat getGaborKernel(Size ksize, double sigma, double theta, double lambd, double gamma, double psi)

{

Mat retVal = new Mat(getGaborKernel_1(ksize.width, ksize.height, sigma, theta, lambd, gamma, psi));

return retVal;

}

//javadoc: getGaborKernel(ksize, sigma, theta, lambd, gamma)

public static Mat getGaborKernel(Size ksize, double sigma, double theta, double lambd, double gamma)

{

Mat retVal = new Mat(getGaborKernel_2(ksize.width, ksize.height, sigma, theta, lambd, gamma));

return retVal;

}

// C++: Mat cv::getGaborKernel(Size ksize, double sigma, double theta, double lambd, double gamma, double psi = CV_PI*0.5, int ktype = CV_64F)

private static native long getGaborKernel_0(double ksize_width, double ksize_height, double sigma, double theta, double lambd, double gamma, double psi, int ktype);

private static native long getGaborKernel_1(double ksize_width, double ksize_height, double sigma, double theta, double lambd, double gamma, double psi);

private static native long getGaborKernel_2(double ksize_width, double ksize_height, double sigma, double theta, double lambd, double gamma);

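Returns Gabor filter coefficients. For details about Gabor filters, see Gabor Filter.

- ksize: size of the returned filter.

- sigma: standard deviation of the Gaussian envelope.

- theta: orientation of the normal to the parallel stripes of the Gabor function.

- lambd: wavelength of the sinusoidal factor.

- gamma: spatial aspect ratio.

- psi: phase offset.

- ktype: type of the filter coefficients, either CV_32F or CV_64F.

getGaussianKernel()

Method declaration: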

c++

Mat cv::getGaussianKernel ( int ksize,

double sigma,

int ktype = CV_64F

)


java

//javadoc: getGaussianKernel(ksize, sigma, ktype)

public static Mat getGaussianKernel(int ksize, double sigma, int ktype)

{

Mat retVal = new Mat(getGaussianKernel_0(ksize, sigma, ktype));

return retVal;

}

//javadoc: getGaussianKernel(ksize, sigma)

public static Mat getGaussianKernel(int ksize, double sigma)

{

Mat retVal = new Mat(getGaussianKernel_1(ksize, sigma));

return retVal;

}

// C++: Mat cv::getGaussianKernel(int ksize, double sigma, int ktype = CV_64F)

private static native long getGaussianKernel_0(int ksize, double sigma, int ktype);

private static native long getGaussianKernel_1(int ksize, double sigma);

复制代码

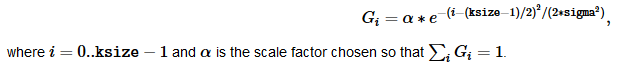

返回一个高斯滤波器系数。

该方法计算并返回ksize×1高斯滤波器系数矩阵:

生成的两种kernel都可以传递给 sepFilter2D,方法会自动识别 平滑kernel(对称,权重和为1),然后相应的处理。也可以使用相对高层次的高斯模糊(GaoussianBlur)。

sigma = 0.3*((ksize-1)*0.5 - 1) + 0.8
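
The coefficient computation and the sigma fallback can be sketched in plain Java. GaussianKernelSketch, defaultSigma, and gaussianKernel are hypothetical names for this illustration. The sketch always evaluates the Gaussian formula directly; OpenCV may use precomputed coefficients for some small kernel sizes when sigma is non-positive, so the values here are not guaranteed to match its output exactly.

```java
// Sketch: 1D Gaussian kernel coefficients, normalized to sum to 1.
// Illustrative only, not OpenCV's implementation.
class GaussianKernelSketch {
    // Fallback sigma when the caller passes a non-positive value.
    static double defaultSigma(int ksize) {
        return 0.3 * ((ksize - 1) * 0.5 - 1) + 0.8;
    }

    static double[] gaussianKernel(int ksize, double sigma) {
        if (sigma <= 0) sigma = defaultSigma(ksize);
        double center = (ksize - 1) / 2.0;
        double[] g = new double[ksize];
        double sum = 0.0;
        for (int i = 0; i < ksize; i++) {
            double d = i - center;
            g[i] = Math.exp(-(d * d) / (2 * sigma * sigma));
            sum += g[i];
        }
        for (int i = 0; i < ksize; i++) g[i] /= sum; // scale so the weights sum to 1
        return g;
    }

    public static void main(String[] args) {
        double[] k = gaussianKernel(5, 1.0);
        System.out.println(java.util.Arrays.toString(k));
    }
}
```

Passing the same kernel as both the row and the column filter of a separable filter yields a 2D Gaussian blur at a fraction of the cost of a full 2D kernel.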

getStructuringElement()

Method declaration:

c++

Mat cv::getStructuringElement ( int shape,

Size ksize,

Point anchor = Point(-1,-1)

)


java

public static Mat getStructuringElement(int shape, Size ksize)

{

Mat retVal = new Mat(getStructuringElement_1(shape, ksize.width, ksize.height));

return retVal;

}

// C++: Mat cv::getStructuringElement(int shape, Size ksize, Point anchor = Point(-1,-1))

private static native long getStructuringElement_0(int shape, double ksize_width, double ksize_height, double anchor_x, double anchor_y);

private static native long getStructuringElement_1(int shape, double ksize_width, double ksize_height);

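Returns a structuring element of the specified size and shape for morphological operations, which can then be passed to erode, dilate, or morphologyEx. You can also construct an arbitrary binary mask yourself and use it as the structuring element.

- shape: element shape, one of MorphShapes.

- ksize: size of the structuring element.

- anchor: anchor position within the element. The default (-1,-1) means the anchor is at the center. Note that only the shape of a cross-shaped element depends on the anchor position; in other cases the anchor just regulates how much the result of the morphological operation is shifted.

Laplacian

Method declaration: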

c++

void cv::Laplacian ( InputArray src,

OutputArray dst,

int ddepth,

int ksize = 1,

double scale = 1,

double delta = 0,

int borderType = BORDER_DEFAULT

)


java

public static void Laplacian(Mat src, Mat dst, int ddepth)

{

Laplacian_4(src.nativeObj, dst.nativeObj, ddepth);

return;

}

private static native void Laplacian_0(long src_nativeObj, long dst_nativeObj, int ddepth, int ksize, double scale, double delta, int borderType);

private static native void Laplacian_1(long src_nativeObj, long dst_nativeObj, int ddepth, int ksize, double scale, double delta);

private static native void Laplacian_2(long src_nativeObj, long dst_nativeObj, int ddepth, int ksize, double scale);

private static native void Laplacian_3(long src_nativeObj, long dst_nativeObj, int ddepth, int ksize);

private static native void Laplacian_4(long src_nativeObj, long dst_nativeObj, int ddepth);

复制代码

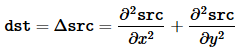

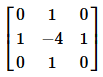

该方法通过Sobel算子计算源图像二阶x、y导数,然后相加得出源图像的拉普拉斯算子:

需要注意的是,当 ksize>1 时用上述公式计算拉普拉斯算子;当 ksize=1 时,拉普拉斯算子通过使用3x3的窗口对图片进行滤波得到。

- src:源图像。

- dst:输出图像,和源图像具有相同的大小和通道数。

- ddepth:输出图像的depth。

- ksize:计算二阶导数滤波器的窗口大小,值必须是正奇数。

- scale:可选参数,计算拉普拉斯值的缩放因子,默认没有缩放。

- delta:可选参数,在计算结果存储到输出图像之前,添加到结果的delta值。

- borderType:图像边界外像素值的外推模式。
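
The ksize = 1 case can be sketched as a direct application of the 3x3 aperture [0 1 0; 1 -4 1; 0 1 0] to a plain 2D array. LaplacianSketch and laplacian3x3 are hypothetical names for this illustration, and border pixels are simply left at zero instead of being extrapolated.

```java
// Sketch: the 3x3 Laplacian aperture applied to the interior of a 2D array.
// Illustrative only; border pixels are left at 0 rather than extrapolated.
class LaplacianSketch {
    static double[][] laplacian3x3(double[][] src) {
        int rows = src.length, cols = src[0].length;
        double[][] dst = new double[rows][cols];
        for (int y = 1; y < rows - 1; y++) {
            for (int x = 1; x < cols - 1; x++) {
                // Sum of the four direct neighbors minus four times the center.
                dst[y][x] = src[y - 1][x] + src[y + 1][x]
                          + src[y][x - 1] + src[y][x + 1]
                          - 4 * src[y][x];
            }
        }
        return dst;
    }

    public static void main(String[] args) {
        double[][] img = new double[5][5];
        for (int y = 0; y < 5; y++)
            for (int x = 0; x < 5; x++)
                img[y][x] = x * x; // quadratic ramp in x
        System.out.println("Laplacian at the center: " + laplacian3x3(img)[2][2]);
    }
}
```

On a linear ramp the response is zero, and on the quadratic ramp x^2 it is the constant second derivative 2, which is a quick sanity check for the aperture.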

medianBlur

Method declaration:

c++

void cv::medianBlur ( InputArray src,

OutputArray dst,

int ksize

)


java

public static void medianBlur(Mat src, Mat dst, int ksize)

{

medianBlur_0(src.nativeObj, dst.nativeObj, ksize);

return;

}

private static native void medianBlur_0(long src_nativeObj, long dst_nativeObj, int ksize);

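Smooths an image using the median filter with a ksize x ksize aperture. Each channel of the image is processed independently. In-place operation is supported.

Internally, the median filter uses the BORDER_REPLICATE extrapolation mode to copy border pixels.

- src: input image with 1, 3, or 4 channels. When ksize is 3 or 5, the image depth can be CV_8U, CV_16U, or CV_32F; for larger aperture sizes, it can only be CV_8U.

- dst: output image of the same size and type as the source image.

- ksize: aperture linear size. It must be odd and greater than 1.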
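
A 1D sketch shows why the median filter is effective against impulse noise. MedianSketch and median1D are hypothetical names for this illustration; the window is one-dimensional and the border is replicated, in line with the BORDER_REPLICATE behavior described above.

```java
// Sketch: 1D median filter with a replicated border. Illustrative only.
class MedianSketch {
    static int[] median1D(int[] src, int ksize) {
        int radius = ksize / 2;
        int[] dst = new int[src.length];
        int[] window = new int[ksize];
        for (int i = 0; i < src.length; i++) {
            for (int k = 0; k < ksize; k++) {
                int j = Math.max(0, Math.min(src.length - 1, i + k - radius)); // replicate border
                window[k] = src[j];
            }
            java.util.Arrays.sort(window);
            dst[i] = window[radius]; // middle element of the sorted window
        }
        return dst;
    }

    public static void main(String[] args) {
        int[] noisy = {10, 10, 255, 10, 10};
        System.out.println(java.util.Arrays.toString(median1D(noisy, 3)));
    }
}
```

The outlier 255 never reaches the middle of a sorted window, so it is removed entirely, whereas an averaging filter would only spread it over its neighbors.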

morphologyDefaultBorderValue()

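Method declaration:

c++

static Scalar cv::morphologyDefaultBorderValue()

Java has no interface for calling this function directly. When dilation or erosion is used, the C++ function is called internally to assign the corresponding parameter.

Returns the "magic" border value for erosion and dilation. For dilation, it is automatically converted to Scalar::all(-DBL_MAX).

morphologyEx

Method declaration: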

c++

void cv::morphologyEx ( InputArray src,

OutputArray dst,

int op,

InputArray kernel,

Point anchor = Point(-1,-1),

int iterations = 1,

int borderType = BORDER_CONSTANT,

const Scalar & borderValue = morphologyDefaultBorderValue()

)


java

//javadoc: morphologyEx(src, dst, op, kernel, anchor, iterations, borderType, borderValue)

public static void morphologyEx(Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations, int borderType, Scalar borderValue)

{

morphologyEx_0(src.nativeObj, dst.nativeObj, op, kernel.nativeObj, anchor.x, anchor.y, iterations, borderType, borderValue.val[0], borderValue.val[1], borderValue.val[2], borderValue.val[3]);

return;

}

//javadoc: morphologyEx(src, dst, op, kernel, anchor, iterations, borderType)

public static void morphologyEx(Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations, int borderType)

{

morphologyEx_1(src.nativeObj, dst.nativeObj, op, kernel.nativeObj, anchor.x, anchor.y, iterations, borderType);

return;

}

//javadoc: morphologyEx(src, dst, op, kernel, anchor, iterations)

public static void morphologyEx(Mat src, Mat dst, int op, Mat kernel, Point anchor, int iterations)

{

morphologyEx_2(src.nativeObj, dst.nativeObj, op, kernel.nativeObj, anchor.x, anchor.y, iterations);

return;

}

//javadoc: morphologyEx(src, dst, op, kernel, anchor)

public static void morphologyEx(Mat src, Mat dst, int op, Mat kernel, Point anchor)

{

morphologyEx_3(src.nativeObj, dst.nativeObj, op, kernel.nativeObj, anchor.x, anchor.y);

return;

}

//javadoc: morphologyEx(src, dst, op, kernel)

public static void morphologyEx(Mat src, Mat dst, int op, Mat kernel)

{

morphologyEx_4(src.nativeObj, dst.nativeObj, op, kernel.nativeObj);

return;

}

// C++: void cv::morphologyEx(Mat src, Mat& dst, int op, Mat kernel, Point anchor = Point(-1,-1), int iterations = 1, int borderType = BORDER_CONSTANT, Scalar borderValue = morphologyDefaultBorderValue())

private static native void morphologyEx_0(long src_nativeObj, long dst_nativeObj, int op, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType, double borderValue_val0, double borderValue_val1, double borderValue_val2, double borderValue_val3);

private static native void morphologyEx_1(long src_nativeObj, long dst_nativeObj, int op, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations, int borderType);

private static native void morphologyEx_2(long src_nativeObj, long dst_nativeObj, int op, long kernel_nativeObj, double anchor_x, double anchor_y, int iterations);

private static native void morphologyEx_3(long src_nativeObj, long dst_nativeObj, int op, long kernel_nativeObj, double anchor_x, double anchor_y);

private static native void morphologyEx_4(long src_nativeObj, long dst_nativeObj, int op, long kernel_nativeObj);

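Performs advanced morphological transformations based on dilation and erosion.

Any of the operations can be done in place. For multi-channel images, each channel is processed independently.

- src: source image. The number of channels can be arbitrary, and the depth can be CV_8U, CV_16U, CV_16S, CV_32F, or CV_64F.

- dst: output image of the same size and type as the source image.

- op: type of morphological operation.

- kernel: structuring element, which can be created with getStructuringElement.

- anchor: anchor position. Negative values mean the anchor is at the kernel center.

- iterations: number of times erosion and dilation are applied.

- borderType: extrapolation mode for pixels outside the image border.

- borderValue: border value in case of a constant border. The default value has a special meaning.

Note that iterations is the number of times each erosion or dilation step is applied. For example, an opening operation with iterations set to 2 is equivalent to erode -> erode -> dilate -> dilate, not erode -> dilate -> erode -> dilate.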
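
The ordering described above matters, as a small 1D sketch shows. OpeningSketch and its methods are hypothetical names for this illustration; the structuring element is assumed to be 3 samples wide, and positions outside the signal are ignored.

```java
// Sketch: opening with iterations = 2 versus two separate openings (1D).
// Illustrative only, not OpenCV's implementation.
class OpeningSketch {
    static int[] morph(int[] s, boolean dilate) {
        int[] out = new int[s.length];
        for (int i = 0; i < s.length; i++) {
            int best = s[i];
            for (int k = -1; k <= 1; k++) {
                int j = i + k;
                if (j < 0 || j >= s.length) continue; // ignore positions outside the signal
                best = dilate ? Math.max(best, s[j]) : Math.min(best, s[j]);
            }
            out[i] = best;
        }
        return out;
    }

    // erode x iterations, then dilate x iterations (the order described above).
    static int[] open(int[] src, int iterations) {
        int[] cur = src.clone();
        for (int i = 0; i < iterations; i++) cur = morph(cur, false);
        for (int i = 0; i < iterations; i++) cur = morph(cur, true);
        return cur;
    }

    // erode, dilate, erode, dilate, ... (NOT what iterations means).
    static int[] openAlternating(int[] src, int times) {
        int[] cur = src.clone();
        for (int i = 0; i < times; i++) cur = morph(morph(cur, false), true);
        return cur;
    }

    public static void main(String[] args) {
        int[] signal = {0, 1, 1, 1, 0, 0, 0};
        System.out.println(java.util.Arrays.toString(open(signal, 2)));
        System.out.println(java.util.Arrays.toString(openAlternating(signal, 2)));
    }
}
```

A run of three samples is wiped out by two consecutive erosions and cannot be recovered by the dilations, while the alternating order restores it after every pass.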

pyrDown()

Method declaration:

c++

void cv::pyrDown ( InputArray src,

OutputArray dst,

const Size & dstsize = Size(),

int borderType = BORDER_DEFAULT

)


java

public static void pyrDown(Mat src, Mat dst)

{

pyrDown_2(src.nativeObj, dst.nativeObj);

return;

}

private static native void pyrDown_0(long src_nativeObj, long dst_nativeObj, double dstsize_width, double dstsize_height, int borderType);

private static native void pyrDown_1(long src_nativeObj, long dst_nativeObj, double dstsize_width, double dstsize_height);

private static native void pyrDown_2(long src_nativeObj, long dst_nativeObj);

复制代码

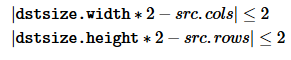

使用向下采样模糊图像。

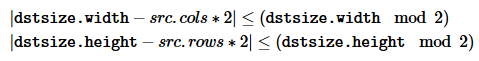

默认情况下,输出图像的大小为 Size((src.cols+1)/2, (src.rows+1)/2)

。但是如论什么时候都要满足如下条件:

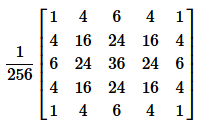

该方法为创建图像的高斯金字塔执行向下采样的步骤。

首先使用如下kernel对图像进行卷积

然后,舍弃偶数行和列来对图像进行下采样。

- src:输入图像。

- dst:输出图像,大小为指定的值,类型和源图像相同。

- dstsize:输出图像的大小。

- borderType:图像边界外像素值的外推模式,不支持BORDER_CONSTANT 模式。
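
The size rule and the blur-then-decimate step can be sketched in 1D. PyrDownSketch and its methods are hypothetical names for this illustration. The sketch assumes the 1D kernel [1 4 6 4 1]/16 (one factor of the 5x5 kernel), a reflect-101 border, and that every second sample starting from index 0 is kept.

```java
// Sketch: 1D version of the pyrDown step (blur with [1 4 6 4 1]/16, keep every second sample).
// Illustrative only, not OpenCV's implementation.
class PyrDownSketch {
    static int defaultSize(int srcLen) {
        return (srcLen + 1) / 2;
    }

    // The constraint |dst * 2 - src| <= 2 from the text above.
    static boolean isValidSize(int dstLen, int srcLen) {
        return Math.abs(dstLen * 2 - srcLen) <= 2;
    }

    static int reflect101(int p, int len) {
        if (len == 1) return 0;
        int period = 2 * (len - 1);
        int m = ((p % period) + period) % period;
        return m < len ? m : period - m;
    }

    static double[] pyrDown1D(double[] src) {
        double[] k = {1 / 16.0, 4 / 16.0, 6 / 16.0, 4 / 16.0, 1 / 16.0};
        double[] dst = new double[defaultSize(src.length)];
        for (int i = 0; i < dst.length; i++) {
            double sum = 0.0;
            for (int t = -2; t <= 2; t++) {
                sum += k[t + 2] * src[reflect101(2 * i + t, src.length)];
            }
            dst[i] = sum; // blurred value centered on source sample 2 * i
        }
        return dst;
    }

    public static void main(String[] args) {
        double[] ramp = {0, 1, 2, 3, 4, 5, 6};
        System.out.println(java.util.Arrays.toString(pyrDown1D(ramp)));
    }
}
```

Blurring before decimation is what keeps the smaller image free of aliasing; dropping samples without the blur would fold high frequencies into the result.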

pyrMeanShiftFiltering()

Method declaration:

c++

void cv::pyrMeanShiftFiltering ( InputArray src,

OutputArray dst,

double sp,

double sr,

int maxLevel = 1,

TermCriteria termcrit = TermCriteria(TermCriteria::MAX_ITER+TermCriteria::EPS, 5, 1)

)


java

//javadoc: pyrMeanShiftFiltering(src, dst, sp, sr, maxLevel, termcrit)

public static void pyrMeanShiftFiltering(Mat src, Mat dst, double sp, double sr, int maxLevel, TermCriteria termcrit)

{

pyrMeanShiftFiltering_0(src.nativeObj, dst.nativeObj, sp, sr, maxLevel, termcrit.type, termcrit.maxCount, termcrit.epsilon);

return;

}

//javadoc: pyrMeanShiftFiltering(src, dst, sp, sr, maxLevel)

public static void pyrMeanShiftFiltering(Mat src, Mat dst, double sp, double sr, int maxLevel)

{

pyrMeanShiftFiltering_1(src.nativeObj, dst.nativeObj, sp, sr, maxLevel);

return;

}

//javadoc: pyrMeanShiftFiltering(src, dst, sp, sr)

public static void pyrMeanShiftFiltering(Mat src, Mat dst, double sp, double sr)

{

pyrMeanShiftFiltering_2(src.nativeObj, dst.nativeObj, sp, sr);

return;

}

private static native void pyrMeanShiftFiltering_0(long src_nativeObj, long dst_nativeObj, double sp, double sr, int maxLevel, int termcrit_type, int termcrit_maxCount, double termcrit_epsilon);

private static native void pyrMeanShiftFiltering_1(long src_nativeObj, long dst_nativeObj, double sp, double sr, int maxLevel);

private static native void pyrMeanShiftFiltering_2(long src_nativeObj, long dst_nativeObj, double sp, double sr);

复制代码

执行图像 meanshift分割的滤波阶段。

这个函数严格来说并不是图像的分割,而是图像在色彩层面的平滑滤波,它可以中和色彩分布相近的颜色,平滑色彩细节,侵蚀掉面积较小的颜色区域。(引自: Opencv均值漂移pyrMeanShiftFiltering彩色图像分割流程剖析 )

能够去除局部相似的纹理,同时保留边缘等差异较大的特征。(引自: 学习OpenCV2——MeanShift之图形分割 )

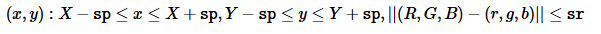

对于每个像素(x,y),该方法迭代的进行meanshift,像素的邻域不仅考虑空间,还考虑色彩,即从 空间-色彩 的超空间中选取像素的邻域。

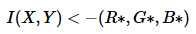

其中,可以不是 (R,G,B) 颜色空间,只要是3分量构成的颜色空间即可。

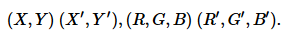

在邻域中找到平均空间值(X',Y')和平均颜色向量(R',G',B'),作为下一个迭代的邻域中心:

迭代结束后,初始像素的颜色分量(即迭代开始的像素)设置为最终值(最后一次迭代的平均颜色):

在图像高斯金字塔上,当maxLevel>0时,上述过程最先从maxLevel+1图层开始。计算结束后,结果传递给大一级的图层,并只在与上层图层相比,颜色差异大于sr的像素上再次运行迭代,能够使得颜色区域的边界更加清晰。需要注意的是,在金字塔上运行的结果与对整个原始图像(即maxLevel==0时)直接运行meanshift过程得到的结果不同。

- src:输入图像,8-bit,3通道的图像。

- dst:输出图像,和源图像具有相同的大小和格式。

- sp:空间窗口半径。

- sr:颜色窗口半径。

- maxLevel:待分割的金字塔的最大层级。

- termcrit:结束条件。

pyrUp()

方法声明:

c++

void cv::pyrUp ( InputArray src,

OutputArray dst,

const Size & dstsize = Size(),

int borderType = BORDER_DEFAULT

)


java

//javadoc: pyrUp(src, dst, dstsize, borderType)

public static void pyrUp(Mat src, Mat dst, Size dstsize, int borderType)

{

pyrUp_0(src.nativeObj, dst.nativeObj, dstsize.width, dstsize.height, borderType);

return;

}

//javadoc: pyrUp(src, dst, dstsize)

public static void pyrUp(Mat src, Mat dst, Size dstsize)

{

pyrUp_1(src.nativeObj, dst.nativeObj, dstsize.width, dstsize.height);

return;

}

//javadoc: pyrUp(src, dst)

public static void pyrUp(Mat src, Mat dst)

{

pyrUp_2(src.nativeObj, dst.nativeObj);

return;

}

// C++: void cv::pyrUp(Mat src, Mat& dst, Size dstsize = Size(), int borderType = BORDER_DEFAULT)

private static native void pyrUp_0(long src_nativeObj, long dst_nativeObj, double dstsize_width, double dstsize_height, int borderType);

private static native void pyrUp_1(long src_nativeObj, long dst_nativeObj, double dstsize_width, double dstsize_height);

private static native void pyrUp_2(long src_nativeObj, long dst_nativeObj);

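Upsamples an image and then blurs it.

By default, the size of the output image is Size(src.cols*2, src.rows*2). In any case, the following conditions must be satisfied: |dstsize.width - src.cols*2| <= (dstsize.width mod 2) and |dstsize.height - src.rows*2| <= (dstsize.height mod 2).

The function performs the upsampling step of Gaussian pyramid construction, and it can also be used to construct a Laplacian pyramid.

First, it upsamples the source image by injecting zero rows and zero columns, which enlarges the image. Then it convolves the enlarged image with the kernel used for downsampling, multiplied by 4.

- src: input image.

- dst: output image with the specified size and the same type as the input image.

- dstsize: size of the output image.

- borderType: extrapolation mode for pixels outside the image border. Only BORDER_DEFAULT is supported.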
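
The reason for multiplying the kernel by 4 becomes visible in a 1D sketch, where the corresponding factor is 2 per dimension. PyrUpSketch and pyrUp1D are hypothetical names for this illustration. The sketch inserts a zero after every sample, then filters with [1 4 6 4 1]/16 scaled by 2, and treats samples outside the signal as zero, so only interior values are meaningful.

```java
// Sketch: 1D version of the pyrUp step (zero insertion, then blur with 2 * [1 4 6 4 1]/16).
// Illustrative only; borders are handled crudely by treating outside samples as zero.
class PyrUpSketch {
    static double[] pyrUp1D(double[] src) {
        int n = src.length * 2;
        double[] up = new double[n];
        for (int i = 0; i < src.length; i++) up[2 * i] = src[i]; // odd positions stay 0

        // In 2D the factor is 4 = 2 (rows) * 2 (columns); in 1D it is 2.
        double[] k = {1 / 8.0, 4 / 8.0, 6 / 8.0, 4 / 8.0, 1 / 8.0};
        double[] dst = new double[n];
        for (int i = 0; i < n; i++) {
            double sum = 0.0;
            for (int t = -2; t <= 2; t++) {
                int j = i + t;
                if (j < 0 || j >= n) continue; // outside samples treated as zero
                sum += k[t + 2] * up[j];
            }
            dst[i] = sum;
        }
        return dst;
    }

    public static void main(String[] args) {
        double[] flat = {1, 1, 1, 1};
        System.out.println(java.util.Arrays.toString(pyrUp1D(flat)));
    }
}
```

Half of the upsampled samples are zero, so without the extra factor the filtered result would be only half as bright in 1D (a quarter in 2D). With the scaling, a constant signal stays constant in the interior.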

Scharr()

Method declaration:

c++

void cv::Scharr ( InputArray src,

OutputArray dst,

int ddepth,

int dx,

int dy,

double scale = 1,

double delta = 0,

int borderType = BORDER_DEFAULT

)


java

//javadoc: Scharr(src, dst, ddepth, dx, dy, scale, delta, borderType)

public static void Scharr(Mat src, Mat dst, int ddepth, int dx, int dy, double scale, double delta, int borderType)

{

Scharr_0(src.nativeObj, dst.nativeObj, ddepth, dx, dy, scale, delta, borderType);

return;

}

//javadoc: Scharr(src, dst, ddepth, dx, dy, scale, delta)

public static void Scharr(Mat src, Mat dst, int ddepth, int dx, int dy, double scale, double delta)

{

Scharr_1(src.nativeObj, dst.nativeObj, ddepth, dx, dy, scale, delta);

return;

}

//javadoc: Scharr(src, dst, ddepth, dx, dy, scale)

public static void Scharr(Mat src, Mat dst, int ddepth, int dx, int dy, double scale)

{

Scharr_2(src.nativeObj, dst.nativeObj, ddepth, dx, dy, scale);

return;

}

//javadoc: Scharr(src, dst, ddepth, dx, dy)

public static void Scharr(Mat src, Mat dst, int ddepth, int dx, int dy)

{

Scharr_3(src.nativeObj, dst.nativeObj, ddepth, dx, dy);

return;

}

// C++: void cv::Scharr(Mat src, Mat& dst, int ddepth, int dx, int dy, double scale = 1, double delta = 0, int borderType = BORDER_DEFAULT)

private static native void Scharr_0(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, double scale, double delta, int borderType);

private static native void Scharr_1(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, double scale, double delta);

private static native void Scharr_2(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, double scale);

private static native void Scharr_3(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy);

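Calculates the first x- or y- image derivative using the Scharr operator. The call Scharr(src, dst, ddepth, dx, dy, scale, delta, borderType)

is equivalent to Sobel(src, dst, ddepth, dx, dy, CV_SCHARR, scale, delta, borderType)

- src: input image.

- dst: output image of the same size and number of channels as the input image.

- ddepth: depth of the output image.

- dx: order of the derivative in x.

- dy: order of the derivative in y.

- scale: optional scale factor for the computed derivative values. By default, no scaling is applied.

- delta: optional delta value added to the results before they are stored in the output image.

- borderType: extrapolation mode for pixels outside the image border.

sepFilter2D()

Method declaration: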

c++

void cv::sepFilter2D ( InputArray src,

OutputArray dst,

int ddepth,

InputArray kernelX,

InputArray kernelY,

Point anchor = Point(-1,-1),

double delta = 0,

int borderType = BORDER_DEFAULT

)


java

//javadoc: sepFilter2D(src, dst, ddepth, kernelX, kernelY, anchor, delta, borderType)

public static void sepFilter2D(Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor, double delta, int borderType)

{

sepFilter2D_0(src.nativeObj, dst.nativeObj, ddepth, kernelX.nativeObj, kernelY.nativeObj, anchor.x, anchor.y, delta, borderType);

return;

}

//javadoc: sepFilter2D(src, dst, ddepth, kernelX, kernelY, anchor, delta)

public static void sepFilter2D(Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor, double delta)

{

sepFilter2D_1(src.nativeObj, dst.nativeObj, ddepth, kernelX.nativeObj, kernelY.nativeObj, anchor.x, anchor.y, delta);

return;

}

//javadoc: sepFilter2D(src, dst, ddepth, kernelX, kernelY, anchor)

public static void sepFilter2D(Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor)

{

sepFilter2D_2(src.nativeObj, dst.nativeObj, ddepth, kernelX.nativeObj, kernelY.nativeObj, anchor.x, anchor.y);

return;

}

//javadoc: sepFilter2D(src, dst, ddepth, kernelX, kernelY)

public static void sepFilter2D(Mat src, Mat dst, int ddepth, Mat kernelX, Mat kernelY)

{

sepFilter2D_3(src.nativeObj, dst.nativeObj, ddepth, kernelX.nativeObj, kernelY.nativeObj);

return;

}

// C++: void cv::sepFilter2D(Mat src, Mat& dst, int ddepth, Mat kernelX, Mat kernelY, Point anchor = Point(-1,-1), double delta = 0, int borderType = BORDER_DEFAULT)

private static native void sepFilter2D_0(long src_nativeObj, long dst_nativeObj, int ddepth, long kernelX_nativeObj, long kernelY_nativeObj, double anchor_x, double anchor_y, double delta, int borderType);

private static native void sepFilter2D_1(long src_nativeObj, long dst_nativeObj, int ddepth, long kernelX_nativeObj, long kernelY_nativeObj, double anchor_x, double anchor_y, double delta);

private static native void sepFilter2D_2(long src_nativeObj, long dst_nativeObj, int ddepth, long kernelX_nativeObj, long kernelY_nativeObj, double anchor_x, double anchor_y);

private static native void sepFilter2D_3(long src_nativeObj, long dst_nativeObj, int ddepth, long kernelX_nativeObj, long kernelY_nativeObj);

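Applies a separable linear filter to an image. First, every row of the source image is filtered with the 1D kernel kernelX; then every column of the result is filtered with the 1D kernel kernelY; finally, the result is shifted by delta and stored in the output image.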

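- src: input image.

- dst: output image, with the same size and number of channels as the input image.

- ddepth: depth of the output image.

- kernelX: coefficients used to filter each row.

- kernelY: coefficients used to filter each column.

- anchor: anchor position within the kernel. The default value (-1,-1) places the anchor at the kernel center.

- delta: value added to the results before they are stored.

- borderType: extrapolation mode for pixels outside the image border.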
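
The row pass, column pass, and delta shift described above can be sketched in plain Java as follows. This is an illustration under stated assumptions rather than OpenCV's code: the class SeparableFilterSketch and its methods are hypothetical, the anchor is fixed at the kernel center, and the border is mirrored in the BORDER_REFLECT_101 style. The fullFilter method is included only as a reference, to show that the two 1D passes give the same result as one 2D pass with the outer product of kernelY and kernelX.

```java
// Sketch only: a plain-Java separable filter, not the OpenCV implementation.
class SeparableFilterSketch {
    // Mirrors out-of-range indices without repeating the edge pixel (REFLECT_101 style).
    static int reflect101(int i, int n) {
        if (n == 1) return 0;
        while (i < 0 || i >= n) {
            i = (i < 0) ? -i : 2 * (n - 1) - i;
        }
        return i;
    }

    // Row pass with kernelX, column pass with kernelY, then add delta.
    static double[][] sepFilter(double[][] src, double[] kernelX, double[] kernelY, double delta) {
        int rows = src.length, cols = src[0].length;
        int ax = kernelX.length / 2, ay = kernelY.length / 2; // anchor at the kernel center
        double[][] tmp = new double[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                for (int k = 0; k < kernelX.length; k++) {
                    tmp[y][x] += kernelX[k] * src[y][reflect101(x + k - ax, cols)];
                }
            }
        }
        double[][] dst = new double[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                double sum = 0;
                for (int k = 0; k < kernelY.length; k++) {
                    sum += kernelY[k] * tmp[reflect101(y + k - ay, rows)][x];
                }
                dst[y][x] = sum + delta;
            }
        }
        return dst;
    }

    // Reference: one 2D pass with the outer product of kernelY and kernelX.
    static double[][] fullFilter(double[][] src, double[] kernelX, double[] kernelY, double delta) {
        int rows = src.length, cols = src[0].length;
        int ax = kernelX.length / 2, ay = kernelY.length / 2;
        double[][] dst = new double[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                double sum = 0;
                for (int j = 0; j < kernelY.length; j++) {
                    for (int k = 0; k < kernelX.length; k++) {
                        sum += kernelY[j] * kernelX[k]
                                * src[reflect101(y + j - ay, rows)][reflect101(x + k - ax, cols)];
                    }
                }
                dst[y][x] = sum + delta;
            }
        }
        return dst;
    }

    public static void main(String[] args) {
        double[][] src = {{1, 2, 3, 4}, {5, 6, 7, 8}, {9, 10, 11, 12}};
        double[] smooth = {1, 2, 1};   // applied along each row
        double[] diff = {-1, 0, 1};    // applied along each column
        System.out.println(sepFilter(src, smooth, diff, 0.5)[1][1]);
        System.out.println(fullFilter(src, smooth, diff, 0.5)[1][1]);
    }
}
```

For an m×n kernel the separable form needs roughly m + n multiplications per pixel instead of m × n, which is the usual reason to prefer it whenever the kernel can be factored this way.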

Sobel()

Method declaration:

c++

void cv::Sobel ( InputArray src,

OutputArray dst,

int ddepth,

int dx,

int dy,

int ksize = 3,

double scale = 1,

double delta = 0,

int borderType = BORDER_DEFAULT

)

java

//javadoc: Sobel(src, dst, ddepth, dx, dy, ksize, scale, delta, borderType)

public static void Sobel(Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale, double delta, int borderType)

{

Sobel_0(src.nativeObj, dst.nativeObj, ddepth, dx, dy, ksize, scale, delta, borderType);

return;

}

//javadoc: Sobel(src, dst, ddepth, dx, dy, ksize, scale, delta)

public static void Sobel(Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale, double delta)

{

Sobel_1(src.nativeObj, dst.nativeObj, ddepth, dx, dy, ksize, scale, delta);

return;

}

//javadoc: Sobel(src, dst, ddepth, dx, dy, ksize, scale)

public static void Sobel(Mat src, Mat dst, int ddepth, int dx, int dy, int ksize, double scale)

{

Sobel_2(src.nativeObj, dst.nativeObj, ddepth, dx, dy, ksize, scale);

return;

}

//javadoc: Sobel(src, dst, ddepth, dx, dy, ksize)

public static void Sobel(Mat src, Mat dst, int ddepth, int dx, int dy, int ksize)

{

Sobel_3(src.nativeObj, dst.nativeObj, ddepth, dx, dy, ksize);

return;

}

//javadoc: Sobel(src, dst, ddepth, dx, dy)

public static void Sobel(Mat src, Mat dst, int ddepth, int dx, int dy)

{

Sobel_4(src.nativeObj, dst.nativeObj, ddepth, dx, dy);

return;

}

// C++: void cv::Sobel(Mat src, Mat& dst, int ddepth, int dx, int dy, int ksize = 3, double scale = 1, double delta = 0, int borderType = BORDER_DEFAULT)

private static native void Sobel_0(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, int ksize, double scale, double delta, int borderType);

private static native void Sobel_1(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, int ksize, double scale, double delta);

private static native void Sobel_2(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, int ksize, double scale);

private static native void Sobel_3(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy, int ksize);

private static native void Sobel_4(long src_nativeObj, long dst_nativeObj, int ddepth, int dx, int dy);

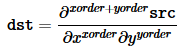

使用扩展sobel运算计算图像的一阶、二阶、三阶、混合导数。

在所有情况下,只有一个除外,ksize×ksize分离内核用于计算导数。当ksize = 1,3×1或1×3内核使用(也就是说,没有进行高斯平滑)。ksize = 1只能用于x或y的二阶导数。

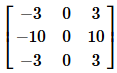

另外还有一个特殊值,当 ksize = CV_SCHARR (-1)

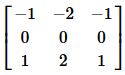

时,相应的3x3 Scharr滤波器可能比 3x3的sobel滤波器更准确。对于x导数和y导数的转置,Schar窗口如下:

该方法通过将图像与适当的kernel卷积来计算图像的导数:

Sobel算子结合了高斯平滑和微分,所以结果或多或少能抵抗噪声。

通常,函数被调用(xorder = 1, yorder = 0, ksize = 3)或(xorder = 0, yorder = 1, ksize = 3)来计算第一个x或y图像的导数。

第一种情况的kernel:

第二种情况的kernel:

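- src: input image.

- dst: output image, with the same size and number of channels as the input image.

- ddepth: depth of the output image. With an 8-bit input image, an 8-bit output depth results in truncated derivatives.

- dx: order of the derivative in x.

- dy: order of the derivative in y.

- ksize: size of the extended Sobel kernel; it must be 1, 3, 5, or 7.

- scale: optional scale factor applied to the computed derivative values; no scaling is applied by default.

- delta: value added to the results before they are stored.

- borderType: extrapolation mode for pixels outside the image border.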
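
The note on ddepth is easier to see with numbers. The sketch below is plain Java, not OpenCV: SobelSketch, sobel, and saturateU8 are hypothetical names, only the first derivatives (dx, dy) = (1, 0) and (0, 1) with a 3x3 kernel are handled, and the border is assumed to be mirrored in the BORDER_REFLECT_101 style. It builds the kernel from a smoothing part [1, 2, 1] and a difference part [-1, 0, 1], then shows what clamping the result to the 8-bit range does.

```java
// Sketch only: 3x3 first-derivative Sobel in plain Java, not the OpenCV implementation.
class SobelSketch {
    // Mirrors out-of-range indices without repeating the edge pixel (REFLECT_101 style).
    static int reflect101(int i, int n) {
        if (n == 1) return 0;
        while (i < 0 || i >= n) {
            i = (i < 0) ? -i : 2 * (n - 1) - i;
        }
        return i;
    }

    // Sobel response for (dx, dy) = (1, 0) or (0, 1); other orders are out of scope here.
    static int[][] sobel(int[][] src, int dx, int dy) {
        if (!((dx == 1 && dy == 0) || (dx == 0 && dy == 1))) {
            throw new IllegalArgumentException("sketch handles only (1,0) and (0,1)");
        }
        int[] smooth = {1, 2, 1};  // smoothing across the derivative direction
        int[] diff = {-1, 0, 1};   // central difference along the derivative direction
        int[] kx = (dx == 1) ? diff : smooth; // factor applied along x
        int[] ky = (dy == 1) ? diff : smooth; // factor applied along y
        int rows = src.length, cols = src[0].length;
        int[][] dst = new int[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                int sum = 0;
                for (int j = 0; j < 3; j++) {
                    for (int k = 0; k < 3; k++) {
                        sum += ky[j] * kx[k]
                                * src[reflect101(y + j - 1, rows)][reflect101(x + k - 1, cols)];
                    }
                }
                dst[y][x] = sum;
            }
        }
        return dst;
    }

    // Effect of storing a value in an unsigned 8-bit image: clamp to [0, 255].
    static int saturateU8(int v) {
        return Math.max(0, Math.min(255, v));
    }

    public static void main(String[] args) {
        // Vertical step edge from 0 to 200, identical in every row.
        int[][] rising = new int[4][];
        int[][] falling = new int[4][];
        for (int y = 0; y < 4; y++) {
            rising[y] = new int[] {0, 0, 200, 200};
            falling[y] = new int[] {200, 200, 0, 0};
        }
        int up = sobel(rising, 1, 0)[1][1];
        int down = sobel(falling, 1, 0)[1][1];
        System.out.println(up + " -> " + saturateU8(up));
        System.out.println(down + " -> " + saturateU8(down));
    }
}
```

The response on the rising edge exceeds 255 and the response on the falling edge is negative, so an 8-bit output would lose both the magnitude and the sign. With the OpenCV Java bindings, a common way to avoid this is to request a wider signed depth such as CvType.CV_16S for ddepth.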

spatialGradient()

Method declaration:

c++

void cv::spatialGradient ( InputArray src,

OutputArray dx,

OutputArray dy,

int ksize = 3,

int borderType = BORDER_DEFAULT

)

java

//javadoc: spatialGradient(src, dx, dy, ksize, borderType)

public static void spatialGradient(Mat src, Mat dx, Mat dy, int ksize, int borderType)

{

spatialGradient_0(src.nativeObj, dx.nativeObj, dy.nativeObj, ksize, borderType);

return;

}

//javadoc: spatialGradient(src, dx, dy, ksize)

public static void spatialGradient(Mat src, Mat dx, Mat dy, int ksize)

{

spatialGradient_1(src.nativeObj, dx.nativeObj, dy.nativeObj, ksize);

return;

}

//javadoc: spatialGradient(src, dx, dy)

public static void spatialGradient(Mat src, Mat dx, Mat dy)

{

spatialGradient_2(src.nativeObj, dx.nativeObj, dy.nativeObj);

return;

}

// C++: void cv::spatialGradient(Mat src, Mat& dx, Mat& dy, int ksize = 3, int borderType = BORDER_DEFAULT)

private static native void spatialGradient_0(long src_nativeObj, long dx_nativeObj, long dy_nativeObj, int ksize, int borderType);

private static native void spatialGradient_1(long src_nativeObj, long dx_nativeObj, long dy_nativeObj, int ksize);

private static native void spatialGradient_2(long src_nativeObj, long dx_nativeObj, long dy_nativeObj);

Calculates the first-order image derivatives in both x and y using a Sobel operator.

Equivalent to calling:

Sobel( src, dx, CV_16SC1, 1, 0, 3 );

Sobel( src, dy, CV_16SC1, 0, 1, 3 );

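- src: input image.

- dx: output image holding the first-order derivative in x.

- dy: output image holding the first-order derivative in y.

- ksize: size of the Sobel kernel; it must be 3.

- borderType: extrapolation mode for pixels outside the image border.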
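
As a rough illustration of why a combined call can be convenient, the following plain-Java sketch computes both derivatives while visiting each 3x3 neighborhood once. It is not how OpenCV implements the function: SpatialGradientSketch and its method are hypothetical, the short element type is chosen to mirror the signed 16-bit depth of CV_16SC1, and the border is assumed to be mirrored in the BORDER_REFLECT_101 style.

```java
// Sketch only: x and y Sobel derivatives in one pass, not the OpenCV implementation.
class SpatialGradientSketch {
    // Mirrors out-of-range indices without repeating the edge pixel (REFLECT_101 style).
    static int reflect101(int i, int n) {
        if (n == 1) return 0;
        while (i < 0 || i >= n) {
            i = (i < 0) ? -i : 2 * (n - 1) - i;
        }
        return i;
    }

    // Returns {dx, dy} for an 8-bit style input (values 0..255).
    static short[][][] spatialGradient(int[][] src) {
        int rows = src.length, cols = src[0].length;
        short[][] dx = new short[rows][cols];
        short[][] dy = new short[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                int gx = 0, gy = 0;
                for (int j = -1; j <= 1; j++) {
                    for (int k = -1; k <= 1; k++) {
                        int v = src[reflect101(y + j, rows)][reflect101(x + k, cols)];
                        int smoothY = (j == 0) ? 2 : 1; // [1, 2, 1] across rows
                        int smoothX = (k == 0) ? 2 : 1; // [1, 2, 1] across columns
                        gx += k * smoothY * v; // k acts as the [-1, 0, 1] difference in x
                        gy += j * smoothX * v; // j acts as the [-1, 0, 1] difference in y
                    }
                }
                // For inputs in 0..255 the magnitude is at most 4 * 255, which fits in a short.
                dx[y][x] = (short) gx;
                dy[y][x] = (short) gy;
            }
        }
        return new short[][][] {dx, dy};
    }

    public static void main(String[] args) {
        // Plane with slope 2 in x and 3 in y.
        int[][] plane = new int[5][5];
        for (int y = 0; y < 5; y++) {
            for (int x = 0; x < 5; x++) {
                plane[y][x] = 2 * x + 3 * y;
            }
        }
        short[][][] g = spatialGradient(plane);
        System.out.println("dx=" + g[0][2][2] + ", dy=" + g[1][2][2]);
    }
}
```

Because the unnormalized 3x3 Sobel kernel has a gain of 8, the interior values are 8 times the true slopes of the plane.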

sqrBoxFilter()

Method declaration:

c++

void cv::sqrBoxFilter ( InputArray src,

OutputArray dst,

int ddepth,

Size ksize,

Point anchor = Point(-1, -1),

bool normalize = true,

int borderType = BORDER_DEFAULT

)

java

//javadoc: sqrBoxFilter(_src, _dst, ddepth, ksize, anchor, normalize, borderType)

public static void sqrBoxFilter(Mat _src, Mat _dst, int ddepth, Size ksize, Point anchor, boolean normalize, int borderType)

{

sqrBoxFilter_0(_src.nativeObj, _dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y, normalize, borderType);

return;

}

//javadoc: sqrBoxFilter(_src, _dst, ddepth, ksize, anchor, normalize)

public static void sqrBoxFilter(Mat _src, Mat _dst, int ddepth, Size ksize, Point anchor, boolean normalize)

{

sqrBoxFilter_1(_src.nativeObj, _dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y, normalize);

return;

}

//javadoc: sqrBoxFilter(_src, _dst, ddepth, ksize, anchor)

public static void sqrBoxFilter(Mat _src, Mat _dst, int ddepth, Size ksize, Point anchor)

{

sqrBoxFilter_2(_src.nativeObj, _dst.nativeObj, ddepth, ksize.width, ksize.height, anchor.x, anchor.y);

return;

}

//javadoc: sqrBoxFilter(_src, _dst, ddepth, ksize)

public static void sqrBoxFilter(Mat _src, Mat _dst, int ddepth, Size ksize)

{

sqrBoxFilter_3(_src.nativeObj, _dst.nativeObj, ddepth, ksize.width, ksize.height);

return;

}

// C++: void cv::sqrBoxFilter(Mat _src, Mat& _dst, int ddepth, Size ksize, Point anchor = Point(-1, -1), bool normalize = true, int borderType = BORDER_DEFAULT)

private static native void sqrBoxFilter_0(long _src_nativeObj, long _dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y, boolean normalize, int borderType);

private static native void sqrBoxFilter_1(long _src_nativeObj, long _dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y, boolean normalize);

private static native void sqrBoxFilter_2(long _src_nativeObj, long _dst_nativeObj, int ddepth, double ksize_width, double ksize_height, double anchor_x, double anchor_y);

private static native void sqrBoxFilter_3(long _src_nativeObj, long _dst_nativeObj, int ddepth, double ksize_width, double ksize_height);

Calculates the normalized sum of squares of the pixel values overlapping the filter.

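- src: input image.

- dst: output image, with the same size and type as the source image.

- ddepth: depth of the output image; -1 means src.depth() is used.

- ksize: kernel size.

- anchor: anchor point; the default value (-1,-1) places the anchor at the kernel center.

- normalize: flag specifying whether the kernel is normalized by its area.

- borderType: extrapolation mode for pixels outside the image border.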
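
A typical reason to compute a box sum of squares is local statistics: the local variance is the mean of the squares minus the square of the mean. The plain-Java sketch below illustrates that idea. It is not the OpenCV implementation: SqrBoxFilterSketch and its methods are hypothetical, the anchor is fixed at the window center, and the border is assumed to be mirrored in the BORDER_REFLECT_101 style.

```java
// Sketch only: box sum of squares and local variance, not the OpenCV implementation.
class SqrBoxFilterSketch {
    // Mirrors out-of-range indices without repeating the edge pixel (REFLECT_101 style).
    static int reflect101(int i, int n) {
        if (n == 1) return 0;
        while (i < 0 || i >= n) {
            i = (i < 0) ? -i : 2 * (n - 1) - i;
        }
        return i;
    }

    // Sums v (or v * v when square is true) over a kw x kh window centered on each pixel.
    // When normalize is true the sum is divided by the window area.
    static double[][] boxSum(double[][] src, int kw, int kh, boolean square, boolean normalize) {
        int rows = src.length, cols = src[0].length;
        double[][] dst = new double[rows][cols];
        for (int y = 0; y < rows; y++) {
            for (int x = 0; x < cols; x++) {
                double sum = 0;
                for (int j = 0; j < kh; j++) {
                    for (int k = 0; k < kw; k++) {
                        double v = src[reflect101(y + j - kh / 2, rows)]
                                      [reflect101(x + k - kw / 2, cols)];
                        sum += square ? v * v : v;
                    }
                }
                dst[y][x] = normalize ? sum / (kw * kh) : sum;
            }
        }
        return dst;
    }

    static double[][] sqrBoxFilter(double[][] src, int kw, int kh, boolean normalize) {
        return boxSum(src, kw, kh, true, normalize);
    }

    // Local variance: mean of squares minus squared mean over the same window.
    static double[][] localVariance(double[][] src, int kw, int kh) {
        double[][] meanSq = sqrBoxFilter(src, kw, kh, true);
        double[][] mean = boxSum(src, kw, kh, false, true);
        double[][] var = new double[src.length][src[0].length];
        for (int y = 0; y < src.length; y++) {
            for (int x = 0; x < src[0].length; x++) {
                var[y][x] = meanSq[y][x] - mean[y][x] * mean[y][x];
            }
        }
        return var;
    }

    public static void main(String[] args) {
        double[][] img = {{1, 2, 3}, {4, 5, 6}, {7, 8, 9}};
        System.out.println(sqrBoxFilter(img, 3, 3, false)[1][1]); // unnormalized sum of squares
        System.out.println(sqrBoxFilter(img, 3, 3, true)[1][1]);  // divided by the window area
        System.out.println(localVariance(img, 3, 3)[1][1]);
    }
}
```

With normalize set to false the result is the raw sum of squares, which grows with the window area, so a floating-point output depth is the safer choice in that case.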
