java大数据最全课程学习笔记(5)--MapReduce精通(一)

目前 CSDN , 博客园 , 简书 同步发表中,更多精彩欢迎访问我的 gitee pages

目录

MapReduce精通(一)

MapReduce入门

MapReduce定义

MapReduce优缺点

优点

缺点

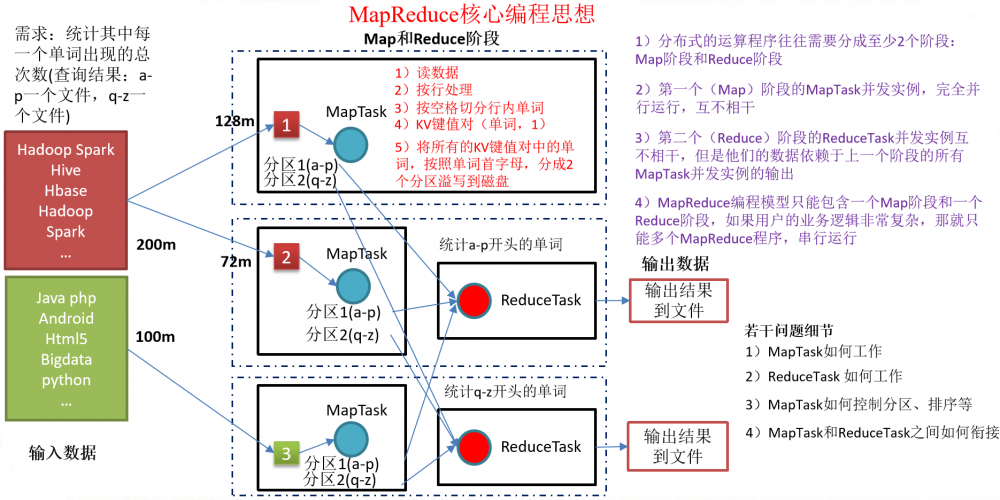

MapReduce核心思想

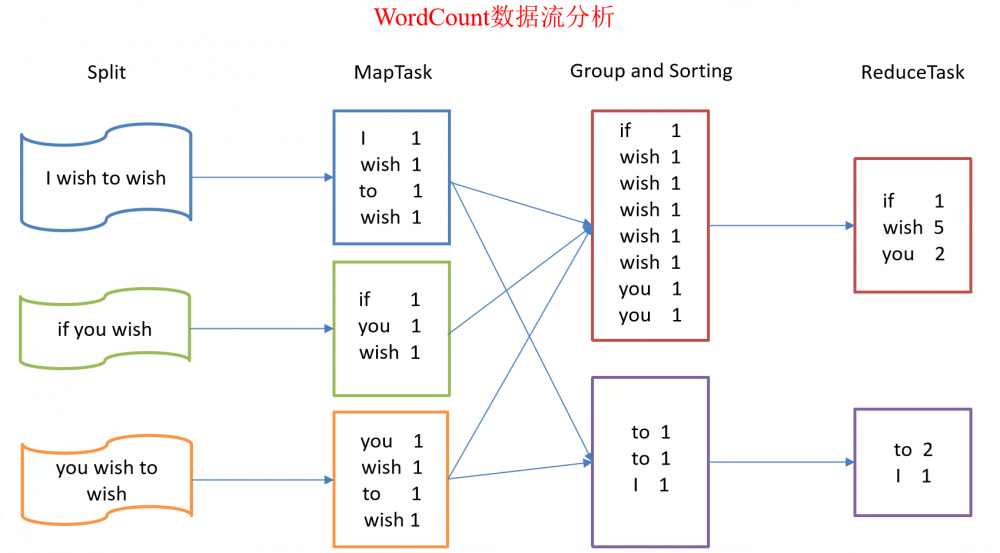

总结:分析WordCount数据流走向深入理解MapReduce核心思想。

MapReduce进程

MapReduce编程规范

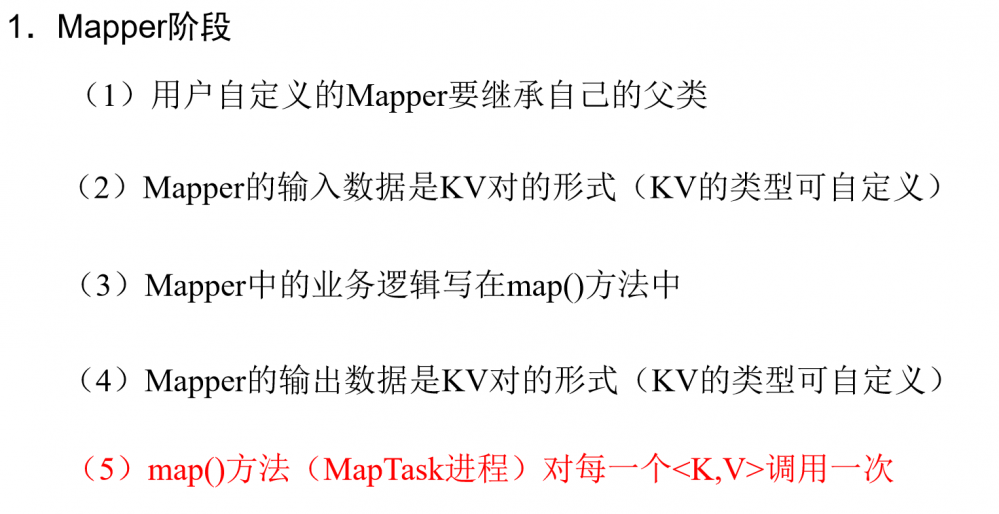

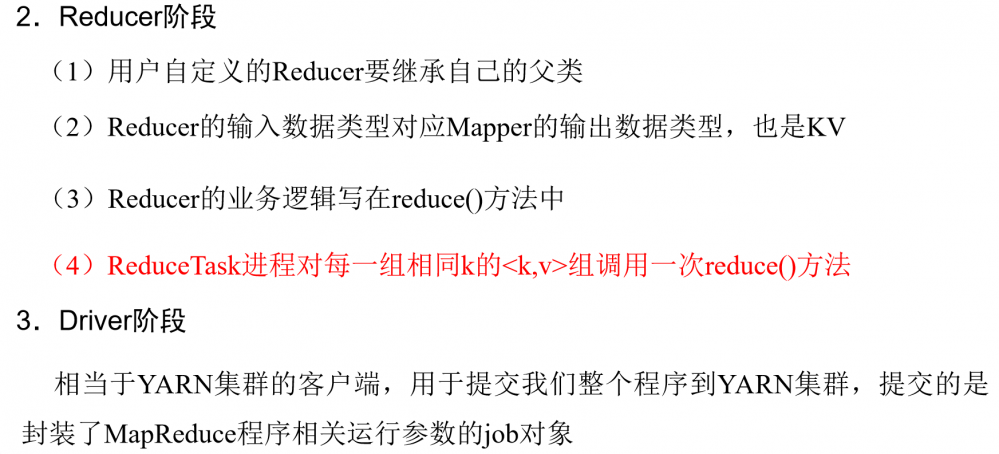

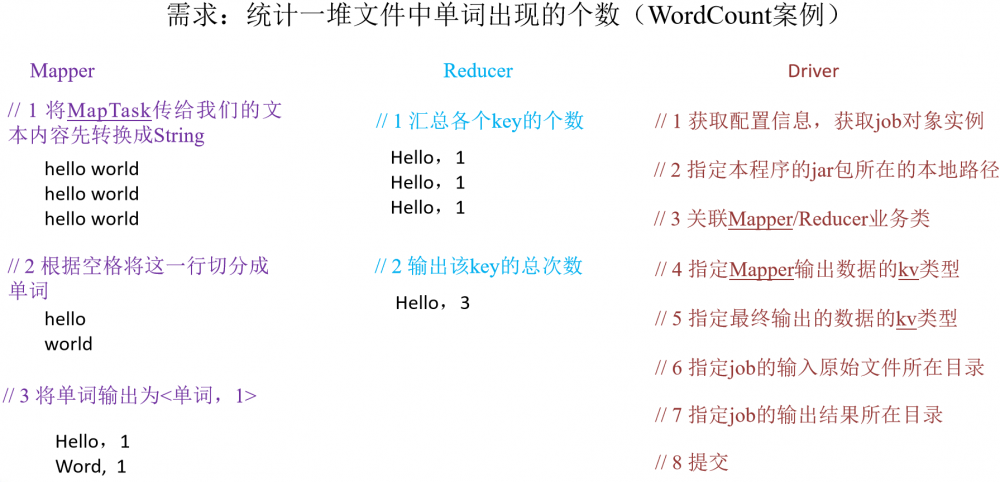

用户编写的程序分成三个部分:Mapper、Reducer和Driver。

WordCount案例实操

-

需求

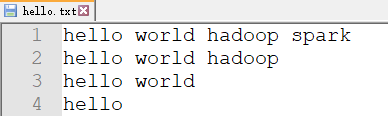

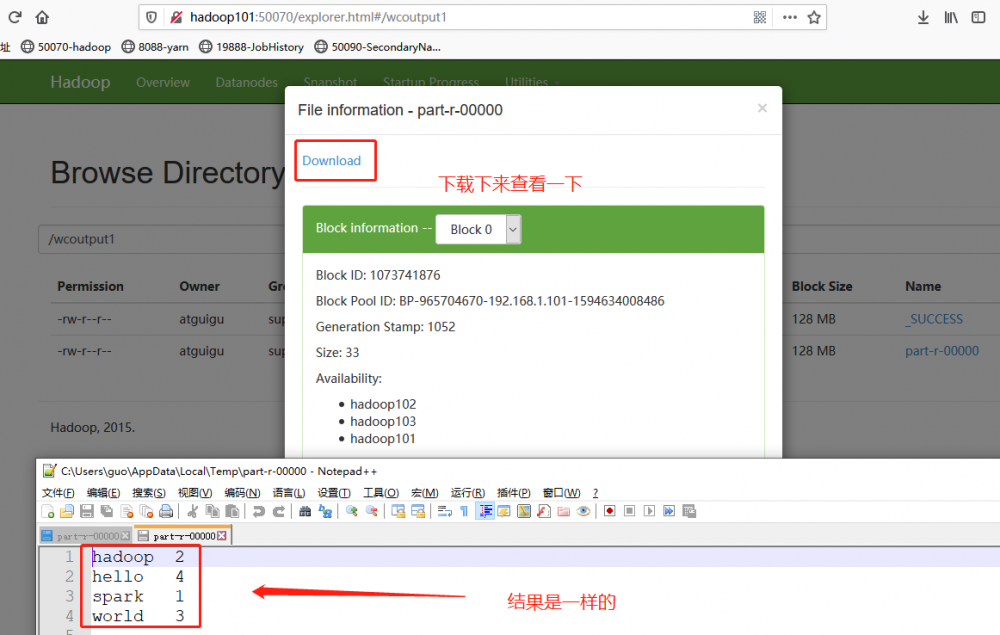

在给定的文本文件中统计输出每一个单词出现的总次数

-

输入数据

-

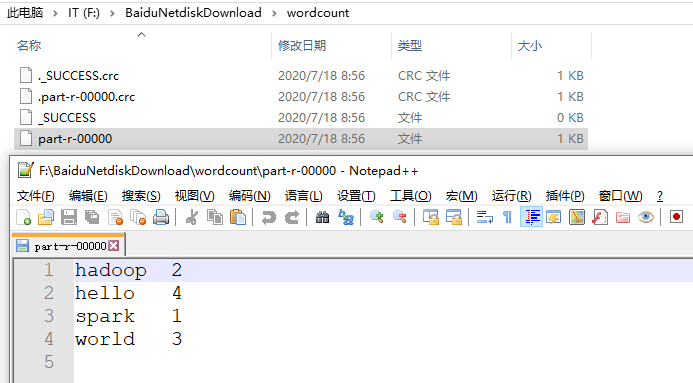

期望输出数据

hadoop 2

hello 4

spark 1

world 3

-

-

需求分析

按照MapReduce编程规范,分别编写Mapper,Reducer,Driver,如图所示。

-

环境准备

-

创建maven工程

-

在pom.xml文件中添加如下依赖

<dependencies> <dependency> <groupId>junit</groupId> <artifactId>junit</artifactId> <version>RELEASE</version> </dependency> <dependency> <groupId>org.apache.logging.log4j</groupId> <artifactId>log4j-core</artifactId> <version>2.8.2</version> </dependency> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-common</artifactId> <version>2.7.2</version> </dependency> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-client</artifactId> <version>2.7.2</version> </dependency> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-hdfs</artifactId> <version>2.7.2</version> </dependency> </dependencies>

-

在项目的src/main/resources目录下,新建一个文件,命名为“log4j.properties”,在文件中填入

log4j.rootLogger=INFO, stdout log4j.appender.stdout=org.apache.log4j.ConsoleAppender log4j.appender.stdout.layout=org.apache.log4j.PatternLayout log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n log4j.appender.logfile=org.apache.log4j.FileAppender log4j.appender.logfile.File=target/spring.log log4j.appender.logfile.layout=org.apache.log4j.PatternLayout log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

-

-

编写程序

-

编写Mapper类

public class WordcountMapper extends Mapper<LongWritable, Text, Text, IntWritable{ Text k = new Text(); IntWritable v = new IntWritable(1); @Override protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException { // 1 获取一行 String line = value.toString(); // 2 切割 String[] words = line.split(" "); // 3 输出 for (String word : words) { k.set(word); context.write(k, v); } } }说明:

注意:导包时,导入 org.apache.hadoop.mapreduce包下的类(2.0的新api)

-

自定义的类必须符合MR的Mapper的规范

-

在MR中,只能处理key-value格式的数据

KEYIN, VALUEIN: mapper输入的k-v类型。 由当前Job的InputFormat的RecordReader决定!封装输入的key-value由RR自动进行。

KEYOUT, VALUEOUT: mapper输出的k-v类型: 自定义

-

InputFormat的作用:

-

验证输入目录中文件格式,是否符合当前Job的要求

-

生成切片,每个切片都会交给一个MapTask处理

-

提供RecordReader,由RR从切片中读取记录,交给Mapper进行处理

方法: List

getSplits: 切片 RecordReader<K,V> createRecordReader: 创建RR

默认hadoop使用的是TextInputFormat

**TextInputFormat使用LineRecordReader** **LineRecordReader Treats keys as offset in file and value as line.**(即偏移量offset当做key,每一行当做value)

-

-

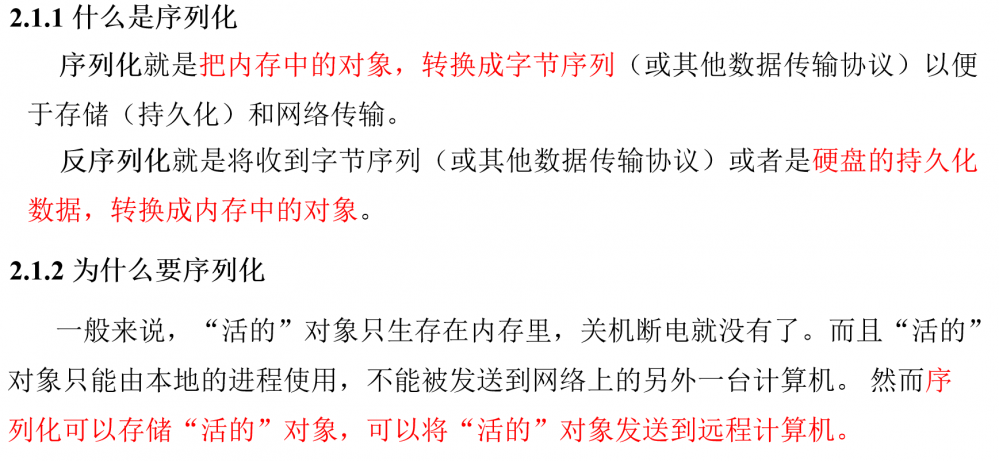

在Hadoop中,如果有Reduce阶段。通常key-value都需要实现序列化协议!

MapTask处理后的key-value,只是一个阶段性的结果!

这些key-value需要传输到ReduceTask所在的机器!

将一个对象通过序列化技术,序列化到一个文件中,经过网络传输到另外一台机器,再使用反序列化技术,从文件中读取数据,还原为对象是最快捷的方式!

hadoop开发了一款轻量级的序列化协议: Wriable机制!

-

-

编写Reducer类

/* * 1. Reducer需要复合Hadoop的Reducer规范 * * 2. KEYIN, VALUEIN: Mapper输出的keyout-valueout * KEYOUT, VALUEOUT: 自定义 */ public class WordcountReducer extends Reducer<Text, IntWritable, Text,IntWritable>{ int sum; IntWritable v = new IntWritable(); // reduce一次处理一组数据,key相同的视为一组 @Override protected void reduce(Text key, Iterable<IntWritable> values,Context context) throws IOException,InterruptedException { // 1 累加求和 sum = 0; for (IntWritable count : values) { sum += count.get(); } // 2 输出 v.set(sum); //将累加的值写出 context.write(key,v); } } -

编写Driver驱动类

public class WordcountDriver { public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException { // 输入输出路径需要根据自己电脑上实际的输入输出路径设置 args = new String[] { "F:/BaiduNetdiskDownload/mrinput/wordcount", "F:/BaiduNetdiskDownload/wordcount"}; //Linux上的地址 //args = new String[] { "/wcinput1", "/wcoutput1"}; // 1 获取配置信息以及封装任务 Configuration configuration = new Configuration(); Job job = Job.getInstance(configuration); // 2 设置jar加载路径 job.setJarByClass(WordcountDriver.class); // 3 设置map和reduce类 job.setMapperClass(WordcountMapper.class); job.setReducerClass(WordcountReducer.class); // 4 设置map输出 job.setMapOutputKeyClass(Text.class); job.setMapOutputValueClass(IntWritable.class); // 5 设置Reduce输出 job.setOutputKeyClass(Text.class); job.setOutputValueClass(IntWritable.class); // 6 设置输入和输出路径 FileInputFormat.setInputPaths(job, new Path(args[0])); FileOutputFormat.setOutputPath(job, new Path(args[1])); // 7 提交 boolean result = job.waitForCompletion(true); System.exit(result ? 0 : 1); } }

-

-

本地测试

直接运行WordcountDriver的main方法.查看结果

-

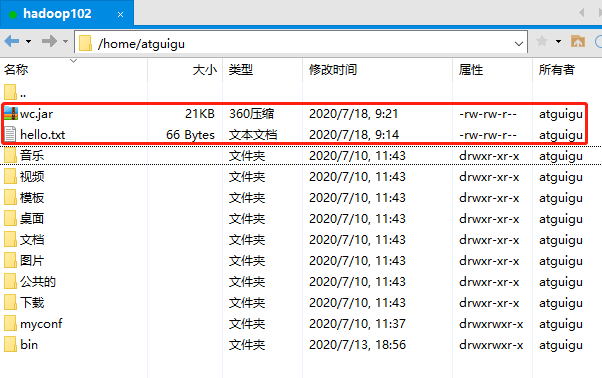

集群上测试

-

将程序打成jar包,然后拷贝到Hadoop集群中,修改jar包名称为wc.jar

-

启动Hadoop集群

-

执行WordCount程序

[atguigu@hadoop102 ~]$ hadoop fs -mkdir /wcinput1 [atguigu@hadoop102 ~]$ hadoop fs -put hello.txt /wcinput1 [atguigu@hadoop102 ~]$ hadoop jar wc.jar com.atguigu.mr.wordcount.WordcountDriver /wcinput1 /wcoutput1

-

Hadoop序列化

序列化概述

常用数据序列化类型

| Java类型 | Hadoop Writable类型 |

|---|---|

| boolean | BooleanWritable |

| byte | ByteWritable |

| int | IntWritable |

| float | FloatWritable |

| long | LongWritable |

| double | DoubleWritable |

| String | Text |

| map | MapWritable |

| array | ArrayWritable |

自定义bean对象实现序列化接口(Writable)

自定义bean对象要想序列化传输,必须实现序列化接口。具体操作步骤如下

-

必须实现Writable接口

-

反序列化时,需要反射调用空参构造函数,所以必须有空参构造

public FlowBean() { super(); } -

重写序列化方法

@Override public void write(DataOutput out) throws IOException { out.writeLong(upFlow); out.writeLong(downFlow); out.writeLong(sumFlow); } -

重写反序列化方法

@Override public void readFields(DataInput in) throws IOException { upFlow = in.readLong(); downFlow = in.readLong(); sumFlow = in.readLong(); } -

注意反序列化的顺序和序列化的顺序完全一致

-

要想把结果显示在文件中,需要重写toString(),可用”/t”分开,方便后续用。

@Override public String toString() { return upFlow + "/t" + downFlow + "/t" + sumFlow; } -

如果需要将自定义的bean放在key中传输,则还需要实现Comparable接口,因为MapReduce框中的Shuffle过程要求对key必须能排序。

@Override public int compareTo(FlowBean o) { // 倒序排列,从大到小 return this.sumFlow > o.getSumFlow() ? -1 : 1; }

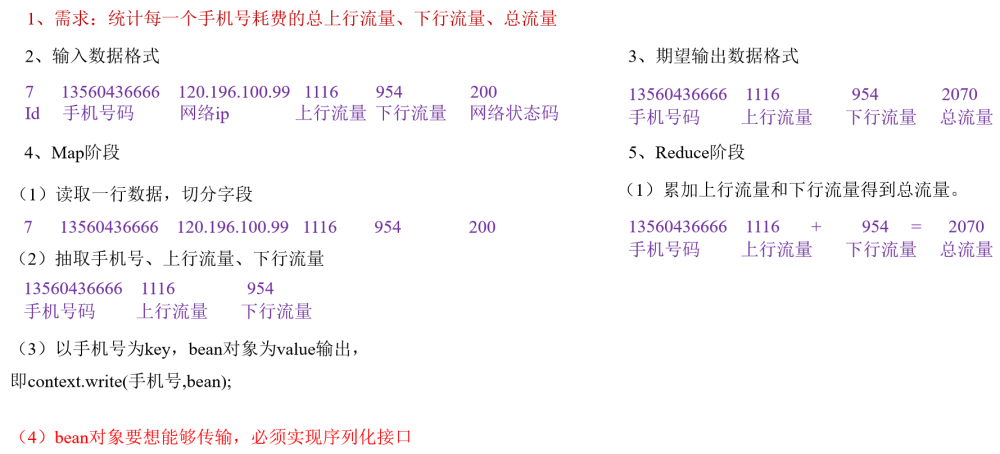

序列化案例实操

-

需求

统计每一个手机号耗费的总上行流量、下行流量、总流量

-

输入数据格式

id 手机号码 网络ip 上行流量 下行流量 网络状态码 7 13560436666 120.196.100.99 1116 954 200 -

输入数据

1 13736230513 192.196.100.1 www.atguigu.com 2481 24681 200 2 13846544121 192.196.100.2 264 0 200 3 13956435636 192.196.100.3 132 1512 200 4 13966251146 192.168.100.1 240 0 404 5 18271575951 192.168.100.2 www.atguigu.com 1527 2106 200 6 84188413 192.168.100.3 www.atguigu.com 4116 1432 200 7 13590439668 192.168.100.4 1116 954 200 8 15910133277 192.168.100.5 www.hao123.com 3156 2936 200 9 13729199489 192.168.100.6 240 0 200 10 13630577991 192.168.100.7 www.shouhu.com 6960 690 200 11 15043685818 192.168.100.8 www.baidu.com 3659 3538 200 12 15959002129 192.168.100.9 www.atguigu.com 1938 180 500 13 13560439638 192.168.100.10 918 4938 200 14 13470253144 192.168.100.11 180 180 200 15 13682846555 192.168.100.12 www.qq.com 1938 2910 200 16 13992314666 192.168.100.13 www.gaga.com 3008 3720 200 17 13509468723 192.168.100.14 www.qinghua.com 7335 110349 404 18 18390173782 192.168.100.15 www.sogou.com 9531 2412 200 19 13975057813 192.168.100.16 www.baidu.com 11058 48243 200 20 13768778790 192.168.100.17 120 120 200 21 13568436656 192.168.100.18 www.alibaba.com 2481 24681 200 22 13568436656 192.168.100.19 1116 954 200

-

-

需求分析

-

编写MapReduce程序

-

编写流量统计的Bean对象

package com.atguigu.mapreduce.flowsum; import java.io.DataInput; import java.io.DataOutput; import java.io.IOException; import org.apache.hadoop.io.Writable; // 1 实现writable接口 public class FlowBean implements Writable{ private long upFlow ; private long downFlow; private long sumFlow; //2 反序列化时,需要反射调用空参构造函数,所以必须有 public FlowBean() { super(); } public FlowBean(long upFlow, long downFlow) { super(); this.upFlow = upFlow; this.downFlow = downFlow; this.sumFlow = upFlow + downFlow; } //3 写序列化方法 @Override public void write(DataOutput out) throws IOException { out.writeLong(upFlow); out.writeLong(downFlow); out.writeLong(sumFlow); } //4 反序列化方法 //5 反序列化方法读顺序必须和写序列化方法的写顺序必须一致 @Override public void readFields(DataInput in) throws IOException { this.upFlow = in.readLong(); this.downFlow = in.readLong(); this.sumFlow = in.readLong(); } // 6 编写toString方法,方便后续打印到文本 @Override public String toString() { return upFlow + "/t" + downFlow + "/t" + sumFlow; } public long getUpFlow() { return upFlow; } public void setUpFlow(long upFlow) { this.upFlow = upFlow; } public long getDownFlow() { return downFlow; } public void setDownFlow(long downFlow) { this.downFlow = downFlow; } public long getSumFlow() { return sumFlow; } public void setSumFlow(long sumFlow) { this.sumFlow = sumFlow; } } -

编写Mapper类

package com.atguigu.mapreduce.flowsum; import java.io.IOException; import org.apache.hadoop.io.LongWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Mapper; public class FlowCountMapper extends Mapper<LongWritable, Text, Text, FlowBean>{ FlowBean v = new FlowBean(); Text k = new Text(); @Override protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException { // 1 获取一行 String line = value.toString(); // 2 切割字段 String[] fields = line.split("/t"); // 3 封装对象 // 取出手机号码 String phoneNum = fields[1]; // 取出上行流量和下行流量 long upFlow = Long.parseLong(fields[fields.length - 3]); long downFlow = Long.parseLong(fields[fields.length - 2]); k.set(phoneNum); v.set(downFlow, upFlow); // 4 写出 context.write(k, v); } } -

编写Reducer类

package com.atguigu.mapreduce.flowsum; import java.io.IOException; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Reducer; public class FlowCountReducer extends Reducer<Text, FlowBean, Text, FlowBean>{ private FlowBean out_value=new FlowBean(); @Override protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException { long sumUpFlow=0; long sumDownFlow=0; // 1 遍历所用bean,将其中的上行流量,下行流量分别累加 for (FlowBean flowBean : values) { sumUpFlow+=flowBean.getUpFlow(); sumDownFlow+=flowBean.getDownFlow(); } // 2 封装对象 out_value.setUpFlow(sumUpFlow); out_value.setDownFlow(sumDownFlow); out_value.setSumFlow(sumDownFlow+sumUpFlow); // 3 写出 context.write(key, out_value); } } -

编写Driver驱动类

package com.atguigu.mapreduce.flowsum; import java.io.IOException; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.fs.Path; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Job; import org.apache.hadoop.mapreduce.lib.input.FileInputFormat; import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat; public class FlowsumDriver { public static void main(String[] args) throws IllegalArgumentException, IOException, ClassNotFoundException, InterruptedException { // 输入输出路径需要根据自己电脑上实际的输入输出路径设置 args = new String[] {"F:/BaiduNetdiskDownload/mrinput/flowbean", "F:/BaiduNetdiskDownload/flowbean"}; //保证输出目录不存在 FileSystem fs=FileSystem.get(conf); if (fs.exists(outputPath)) { fs.delete(outputPath, true); } // 1 获取配置信息,或者job对象实例 Configuration configuration = new Configuration(); Job job = Job.getInstance(configuration); // 6 指定本程序的jar包所在的本地路径 job.setJarByClass(FlowsumDriver.class); // 2 指定本业务job要使用的mapper/Reducer业务类 job.setMapperClass(FlowCountMapper.class); job.setReducerClass(FlowCountReducer.class); // 3 指定mapper输出数据的kv类型 job.setMapOutputKeyClass(Text.class); job.setMapOutputValueClass(FlowBean.class); // 4 指定最终输出的数据的kv类型 job.setOutputKeyClass(Text.class); job.setOutputValueClass(FlowBean.class); // 5 指定job的输入原始文件所在目录 FileInputFormat.setInputPaths(job, new Path(args[0])); FileOutputFormat.setOutputPath(job, new Path(args[1])); // 7 将job中配置的相关参数,以及job所用的java类所在的jar包, 提交给yarn去运行 boolean result = job.waitForCompletion(true); System.exit(result ? 0 : 1); } }

-

由于篇幅过长,[ MapReduce框架原理 ]等以后的内容,请看下回分解!

- 本文标签: Hadoop tar 数据 遍历 dependencies core bean linux 测试 UI HTML 参数 src Logging 协议 https 配置 App list lib Job tk git map 需求 value client Word junit root ip apr SDN key 同步 博客 id apache pom HDFS API XML Java类 http IO java maven 集群 大数据 实例 总结 ask mapper spring tab 进程 IDE parse 开发 统计 ORM 目录

- 版权声明: 本文为互联网转载文章,出处已在文章中说明(部分除外)。如果侵权,请联系本站长删除,谢谢。

- 本文海报: 生成海报一 生成海报二

![[HBLOG]公众号](https://www.liuhaihua.cn/img/qrcode_gzh.jpg)